Case studies often hide integration problems until rollout

Case studies can spotlight success, yet they often conceal integration risks that surface only at rollout. For teams evaluating laser cutting services, custom sheet metal fabrication, micro machining, CNC turning centers, additive manufacturing services, industrial 3D printing, and even energy analytics, this article shows how an editorial framework shaped by industry veterans helps uncover hidden compatibility, quality, and operational gaps before they become costly setbacks.

That gap matters across advanced manufacturing, green energy, smart electronics, healthcare technology, and supply chain SaaS, where a promising pilot can fail once it meets real production loads, legacy software, site conditions, or regulatory checks. Procurement teams may approve a supplier based on attractive sample results, while operators, quality managers, and project leaders later discover issues in tolerance drift, file interoperability, onboarding time, data mapping, or maintenance overhead.

For B2B buyers, the practical question is not whether a case study looks impressive. It is whether the solution can survive the first 30, 60, and 90 days after deployment. A disciplined review process helps technical evaluators and decision-makers test hidden assumptions before purchase orders are locked, installation windows are booked, and downstream teams inherit preventable risk.

Why rollout exposes problems that pilots and case studies rarely show

A case study is usually designed to show a successful outcome within a defined scope. That scope may include one material grade, one shift, one plant, or one software environment. Rollout is different. It adds multiple operators, changing ambient conditions, mixed part families, ERP or MES interfaces, and delivery commitments that often stretch from 2 weeks to 12 months.

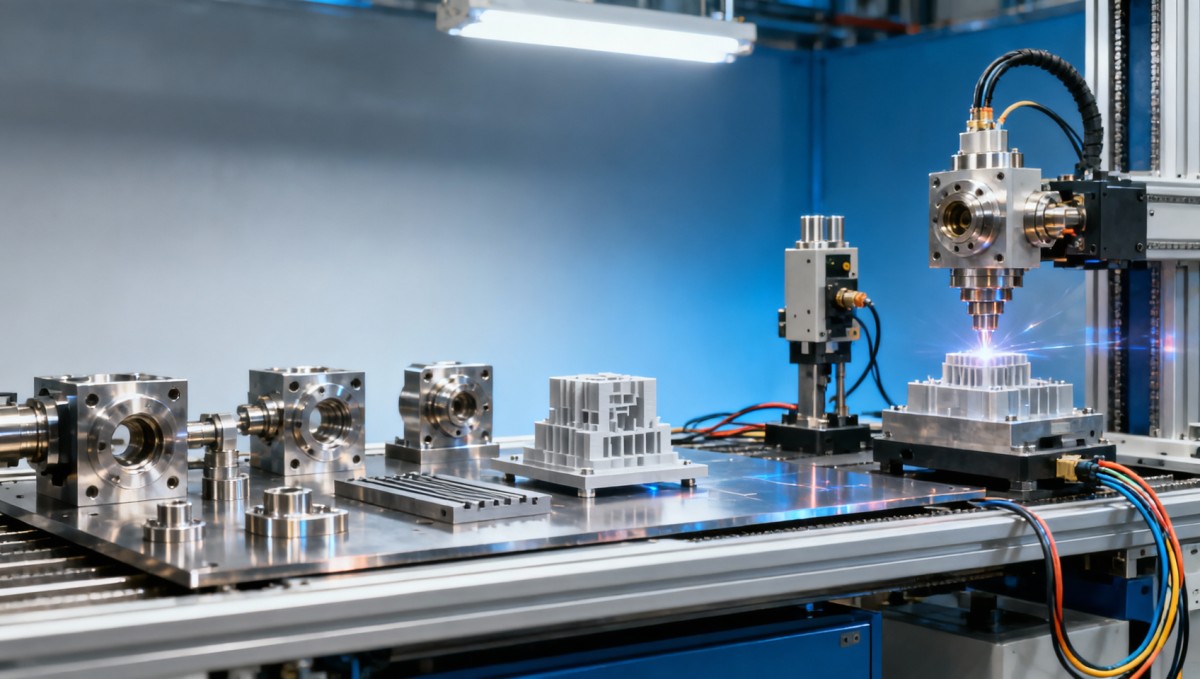

In laser cutting services or custom sheet metal fabrication, for example, sample parts may perform well on 1 or 2 materials, yet production schedules often require switching among stainless steel, aluminum, coated steel, and thicker gauges within the same week. Each change can affect cut edge quality, nesting efficiency, heat distortion, and post-processing time. What looked stable in a controlled case can become inconsistent under real throughput.

The same pattern appears in micro machining, CNC turning centers, additive manufacturing services, and industrial 3D printing. A supplier may demonstrate excellent dimensional performance on a prototype batch of 20 units, but rollout may require 500, 5,000, or more parts, across 3 sites and 2 quality systems. At that point, tool wear, fixture variation, powder handling discipline, machine calibration, and operator training begin to matter more than the original pilot story.

Energy analytics and supply chain SaaS projects also suffer from selective storytelling. Dashboards can look accurate in a sandbox, yet live deployment introduces API mismatches, delayed sensor feeds, user permission conflicts, and inconsistent master data. A project that seems 80% complete in a presentation may still carry 20% unresolved integration work that consumes the majority of rollout effort.

Common blind spots hidden by success narratives

- Limited test conditions, such as one product line, one region, or one software version.

- Missing cost elements, including changeover time, scrap during ramp-up, and revalidation effort.

- Unclear responsibility for data cleansing, operator training, fixture design, or post-installation support.

- No visibility into failure rates after week 4, week 8, or after the first preventive maintenance cycle.

The table below shows how pilot-stage claims often differ from rollout-stage reality across several industrial and digital environments.

The pattern is clear: rollout stress tests the full operating system, not just the product or service in isolation. Buyers that evaluate only headline outcomes may underestimate the effort needed for integration labor, acceptance testing, and quality governance by 20% to 40% in the first phase.

An editorial framework that reveals hidden compatibility and quality gaps

A strong evaluation framework goes beyond asking whether a supplier has a compelling story. It asks how that story was constructed. For procurement directors, technical reviewers, and plant teams, the most useful case material explains the operating context, baseline constraints, measured inputs, exceptions, and what changed between pilot and scaled deployment.

This is especially important in sectors covered by TradeNexus Pro, where cross-functional decisions are common. An advanced manufacturing project may involve engineering, sourcing, QA, EHS, and finance. A healthcare technology deployment may add validation, documentation control, and traceability requirements. Green energy and smart electronics projects often combine hardware, firmware, and digital monitoring, multiplying the number of failure points.

An industry-veteran review lens typically examines at least 4 layers: technical fit, process fit, system fit, and commercial fit. Technical fit checks tolerances, materials, throughput, or data accuracy. Process fit tests whether the operating team can execute consistently over multiple shifts. System fit examines compatibility with ERP, MES, PLM, SCADA, CRM, or asset management tools. Commercial fit reviews lead times, minimum order quantity (MOQ), support windows, and change-order exposure.

When those layers are documented, a case study becomes a decision aid rather than a marketing asset. Teams can compare “worked once” against “can work repeatedly under constraints.” That distinction reduces surprises during the first 6 to 12 weeks of rollout, when project drift, rework, and approval delays usually become visible.

What reviewers should demand from any industrial case evidence

- Defined production or usage volume, such as 100 parts per week, 2,000 transactions per day, or 24/7 monitoring.

- Material, tolerance, or data conditions clearly stated, including ranges like ±0.01 mm, 2 mm–10 mm sheet thickness, or 5-minute data intervals.

- Integration details disclosed, such as software versions, file formats, machine interfaces, and validation responsibilities.

- Ramp-up issues acknowledged, including scrap, retraining, downtime, or sensor recalibration during the first 30 days.

Minimum evidence set before supplier shortlisting

Before moving a vendor into final commercial discussion, teams should request 6 practical items: process flow, sample acceptance criteria, inspection method, escalation path, support response window, and a documented change-control procedure. These are basic controls, yet many rollout failures begin because one or more of them were assumed rather than confirmed.

For example, a fabrication supplier might promise a 7–10 day lead time, but if the drawing revision process adds 48 hours and first article inspection adds another 72 hours, the real delivery cycle stretches to 12–15 days. Likewise, a SaaS deployment that appears ready in 3 weeks may actually need 2 additional weeks for data mapping, role permissions, and user acceptance testing.
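The lead-time arithmetic above can be checked with a few lines of Python. This is only a sketch: the class and field names are hypothetical, and it assumes the hidden steps run one after another in calendar hours rather than in parallel.

```python
from dataclasses import dataclass, field

HOURS_PER_DAY = 24  # assumption: calendar days, not working shifts


@dataclass
class LeadTimeQuote:
    """A quoted lead-time range plus steps the quote leaves out (hypothetical model)."""
    quoted_min_days: float
    quoted_max_days: float
    hidden_steps_hours: dict = field(default_factory=dict)  # step name -> hours

    def effective_range_days(self):
        # Assumes hidden steps are sequential, so their durations simply add up.
        extra_days = sum(self.hidden_steps_hours.values()) / HOURS_PER_DAY
        return (self.quoted_min_days + extra_days,
                self.quoted_max_days + extra_days)


# Figures taken from the fabrication example in the text.
quote = LeadTimeQuote(
    quoted_min_days=7,
    quoted_max_days=10,
    hidden_steps_hours={"drawing_revision": 48, "first_article_inspection": 72},
)
low, high = quote.effective_range_days()
print(f"Effective lead time: {low:.0f} to {high:.0f} days")
```

Listing each hidden step by name keeps the gap between quoted and effective lead time visible, which makes it easier to ask the supplier which steps can overlap.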

Practical due diligence for procurement, engineering, and finance teams

Cross-functional due diligence works best when each stakeholder reviews the same opportunity through a different risk lens. Procurement focuses on continuity, lead time stability, pricing structure, and supplier responsiveness. Engineering checks capability, fit, and validation depth. Finance evaluates total cost over 12 to 36 months, not just the initial quote. Quality and safety teams verify whether the process can be controlled, audited, and repeated.

This matters when comparing services like additive manufacturing, CNC turning centers, and energy analytics platforms. A low quote may exclude setup hours, post-processing, data onboarding, or calibration. A high quote may include better traceability, stronger support coverage, and fewer implementation unknowns. The right decision often depends on how expensive disruption would be if rollout slips by 2 to 6 weeks.

A useful method is to score vendors against weighted criteria instead of relying on presentation quality. In many industrial buying processes, 5 to 7 criteria are enough to expose tradeoffs. Typical weightings might allocate 25% to technical capability, 20% to integration readiness, 20% to quality controls, 15% to commercial terms, 10% to support responsiveness, and 10% to scale-up flexibility.

The next table provides a decision structure that can be adapted for manufacturing services, digital tools, or hybrid industrial programs.

This kind of scorecard helps finance approvers and project managers compare risk-adjusted value instead of first-pass price. In many cases, the supplier with the lowest visible cost becomes the highest-cost option once expedited freight, rework, retraining, and delayed launch expenses are added.
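As an illustration, the weighted scoring described above might be sketched as follows. The weights are the typical allocation quoted earlier; the 1-to-5 rating scale, the function name, and both vendor profiles are hypothetical values chosen only to show the mechanics.

```python
# Weights from the typical allocation described in the text (sum to 100%).
WEIGHTS = {
    "technical_capability": 0.25,
    "integration_readiness": 0.20,
    "quality_controls": 0.20,
    "commercial_terms": 0.15,
    "support_responsiveness": 0.10,
    "scale_up_flexibility": 0.10,
}


def weighted_score(ratings, weights=WEIGHTS):
    """Combine per-criterion ratings into one weighted score."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    return sum(weights[name] * ratings[name] for name in weights)


# Hypothetical ratings on an assumed 1 (weak) to 5 (strong) scale.
vendor_a = {  # attractive commercial terms, thin integration evidence
    "technical_capability": 4, "integration_readiness": 2,
    "quality_controls": 3, "commercial_terms": 5,
    "support_responsiveness": 2, "scale_up_flexibility": 3,
}
vendor_b = {  # higher quote, stronger rollout readiness
    "technical_capability": 4, "integration_readiness": 4,
    "quality_controls": 4, "commercial_terms": 3,
    "support_responsiveness": 4, "scale_up_flexibility": 4,
}

for name, ratings in (("Vendor A", vendor_a), ("Vendor B", vendor_b)):
    print(f"{name}: {weighted_score(ratings):.2f}")
```

With these invented ratings, the vendor with the better commercial terms scores lower overall, because integration readiness and quality controls together carry 40% of the weight.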

Four questions that quickly test rollout credibility

- What changed between prototype success and full-volume operation, and how was it controlled?

- Which 3 failure modes appeared during the first 90 days, and what corrective action was taken?

- How long does onboarding really take when drawings, materials, users, or data sources increase by 2x or 3x?

- What internal resources must the buyer provide, in hours per week, during implementation?

Implementation planning: from supplier selection to stable operation

Even the best supplier choice can underperform if rollout planning is weak. Successful implementation usually moves through 5 stages: scope alignment, technical validation, integration testing, controlled ramp-up, and performance review. Skipping one of these stages often shifts effort downstream, where mistakes are more expensive and harder to correct.

For physical manufacturing services, controlled ramp-up may mean releasing 10 parts, then 50, then 200 before full-volume approval. For digital systems, it may involve one site, then one region, then enterprise-wide deployment. This staged approach gives teams a chance to measure actual scrap, downtime, alert accuracy, and user adoption before exposure grows.
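A staged release of this kind can be expressed as a simple gate, sketched below. The 10, 50, and 200 quantities come from the example above; the 2% defect threshold and the function name are assumptions for illustration, and a real program would take its limit from the agreed acceptance criteria.

```python
RAMP_STAGES = [10, 50, 200]  # release quantities from the example in the text


def run_ramp_up(defects_per_stage, stages=RAMP_STAGES, max_defect_rate=0.02):
    """Evaluate completed ramp-up stages in order.

    defects_per_stage: defect counts for each completed stage, in order.
    Returns (approved_for_full_volume, failed_stage_quantity_or_None).
    max_defect_rate is a hypothetical threshold, not an industry standard.
    """
    for quantity, defects in zip(stages, defects_per_stage):
        if defects / quantity > max_defect_rate:
            return False, quantity  # stop and correct before releasing more parts
    # Full-volume approval requires every stage to have been run and passed.
    all_stages_done = len(defects_per_stage) >= len(stages)
    return all_stages_done, None


print(run_ramp_up([0, 0, 3]))  # all three stages within the limit
print(run_ramp_up([0, 3]))     # second stage exceeds the limit
print(run_ramp_up([0, 0]))     # passing so far, final stage not yet run
```

The same structure carries over to digital rollouts by swapping part quantities for sites or regions and defect counts for failed acceptance checks.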

Project leaders should also set clear ownership at every stage. If nobody owns drawing revision control, API mapping, PPAP-like approvals, or operator retraining, hidden issues stay unresolved until go-live. A simple responsibility matrix can prevent weeks of confusion, especially when external suppliers and internal teams work across different time zones.

In many B2B environments, the first 2 implementation metrics worth watching are time-to-stable-output and issue closure speed. Stable output may mean 3 consecutive compliant batches in fabrication, 14 days of reliable machine uptime, or 95% data completeness in analytics workflows. Without a measurable stabilization target, teams may declare success too early.
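A stabilization target only helps if it can be checked mechanically. The sketch below tests two of the targets mentioned above, using hypothetical function names and sample data; it assumes "3 consecutive compliant batches" refers to the most recent batches.

```python
def consecutive_compliant(batch_results, required=3):
    """True once the most recent `required` batches are all compliant."""
    if len(batch_results) < required:
        return False
    return all(batch_results[-required:])


def data_completeness(records_received, records_expected):
    """Share of expected records that actually arrived (0.0 to 1.0)."""
    if records_expected <= 0:
        raise ValueError("records_expected must be positive")
    return records_received / records_expected


# Hypothetical fabrication history: True means the batch passed inspection.
batch_history = [True, False, True, True, True]
print("Fabrication stable:", consecutive_compliant(batch_history))

# Hypothetical analytics feed: 10 days of 5-minute readings is 2,880 records.
completeness = data_completeness(records_received=2790, records_expected=2880)
print("Analytics stable:", completeness >= 0.95)
```

Counting only the latest run of batches means one failed batch resets the clock, which guards against declaring success on the strength of early good results.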

A practical rollout checklist

- Confirm technical baselines: materials, tolerances, file formats, sensor types, and operating windows.

- Define acceptance criteria: dimensional limits, defect thresholds, uptime target, report latency, or user access rules.

- Run integration tests: ERP/MES/API checks, machine interface tests, or controlled trial batches.

- Assign escalation roles: supplier contact, internal engineer, QA lead, project manager, and finance approver.

- Review after 30, 60, and 90 days: capture recurring defects, support response, and total cost movement.

Where rollout plans often fail

Failure usually does not start with a catastrophic technical issue. It starts with small mismatches: a file export format not recognized by a CNC turning center, a powder handling requirement not built into the work instruction, an energy meter naming convention that prevents clean dashboard aggregation, or a quality gate that exists on paper but not on the shop floor. Each issue may seem minor, but 5 or 6 unresolved gaps can combine into missed delivery or unreliable output.

That is why implementation plans should include both hard metrics and soft readiness indicators. Hard metrics include defect rate in parts per million (ppm), cycle time, lead time, or data refresh intervals. Soft readiness indicators include training completion, document control discipline, and how quickly users respond to deviations. Both matter during the first quarter after deployment.

Frequently asked questions from B2B buyers reviewing case-based claims

Buyers across procurement, engineering, quality, and finance often ask similar questions when a supplier presents a polished success story. The answers below help turn general claims into operationally useful decision inputs.

How can we tell whether a case study is relevant to our own operation?

Start with 5 filters: material or data type, production volume, tolerance or accuracy range, integration environment, and support model. If 3 or more of these do not match your environment, the case may be directionally interesting but not decision-grade. Relevance rises sharply when the supplier can show performance under similar load, similar documentation discipline, and similar approval cycles.
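The five-filter screen can be written down as a short check, sketched here with hypothetical names. The source only says that 3 or more mismatches make a case non-decision-grade, so the label for cases with fewer mismatches is an assumption.

```python
FILTERS = (
    "material_or_data_type",
    "production_volume",
    "tolerance_or_accuracy",
    "integration_environment",
    "support_model",
)


def case_relevance(matches):
    """Classify a case study from a dict of filter name -> True/False match."""
    missing = [name for name in FILTERS if name not in matches]
    if missing:
        raise ValueError(f"unanswered filters: {missing}")
    mismatches = [name for name in FILTERS if not matches[name]]
    if len(mismatches) >= 3:
        grade = "directional only"
    else:
        # Assumed label: the case still needs load and approval-cycle evidence.
        grade = "candidate for decision-grade review"
    return grade, mismatches


# Hypothetical comparison of a supplier's case study with the buyer's plant.
grade, gaps = case_relevance({
    "material_or_data_type": True,
    "production_volume": False,
    "tolerance_or_accuracy": True,
    "integration_environment": False,
    "support_model": False,
})
print(grade, gaps)
```

Returning the list of mismatched filters alongside the grade gives the reviewer a ready-made set of follow-up questions for the supplier.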

What lead time should we expect before integration risks become visible?

Many issues appear within the first 2 to 6 weeks, but some only surface after the first maintenance cycle, shift expansion, or data source increase. In fabrication, this may occur when batch volume passes 200 to 500 units. In SaaS or analytics, it often happens after more users, more assets, or more exception alerts are added to the system.

Which metrics matter most during the first 90 days?

Focus on 4 groups: output quality, schedule adherence, support responsiveness, and change-control discipline. For manufacturing services, useful indicators include defect rate, rework hours, on-time delivery, and first-pass yield. For digital platforms, track data completeness, user login activity, alert precision, and issue resolution time. These metrics reveal whether the solution is truly integrating into daily operations.

Should finance teams evaluate more than the purchase price?

Yes. Total cost should include onboarding labor, validation effort, process disruption, retraining, engineering changes, support tickets, and risk of delayed launch. A 10% lower purchase price can be erased quickly if rollout creates one week of downtime, one missed shipment window, or repeated inspection and rework cycles.
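To make that concrete, a rough total-cost comparison might look like the sketch below. Every figure is invented for illustration; only the 10% price gap and the idea of one week of downtime come from the text.

```python
def total_cost(purchase_price, rollout_costs):
    """Purchase price plus every rollout-related cost item (same currency)."""
    return purchase_price + sum(rollout_costs.values())


# Hypothetical figures: supplier L quotes 10% less than supplier H.
supplier_l = total_cost(
    purchase_price=90_000,
    rollout_costs={
        "onboarding_labor": 6_000,
        "retraining": 4_000,
        "one_week_downtime": 15_000,  # assumed cost of the disruption
    },
)
supplier_h = total_cost(
    purchase_price=100_000,
    rollout_costs={
        "onboarding_labor": 6_000,
        "retraining": 2_000,
    },
)

print(f"Supplier L total: {supplier_l:,}")
print(f"Supplier H total: {supplier_h:,}")
print("Lower quote is cheaper overall:", supplier_l < supplier_h)
```

In this invented scenario the 10,000 saving on the purchase price is outweighed by a single week of downtime, which is the kind of reversal finance teams should test for before approval.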

Case studies are useful starting points, but they are not substitutes for integration planning, quality verification, and cross-functional due diligence. The strongest B2B decisions come from asking how a solution performs beyond the pilot, across real workloads, mixed environments, and measurable acceptance criteria.

For teams evaluating manufacturing services, industrial 3D printing, CNC operations, energy analytics, or connected supply chain solutions, a more rigorous review process can reduce rollout surprises, protect budget, and improve long-term operational fit. If you need a clearer way to assess supplier claims, compare implementation risk, or build a shortlist grounded in practical evidence, contact TradeNexus Pro to get a tailored evaluation framework and explore more solution-focused market intelligence.
