Medical diagnostic equipment with AI inference at the edge: What latency thresholds actually impact clinical workflow?

Why Latency Thresholds Are Clinical Constraints—Not Just Engineering Specs

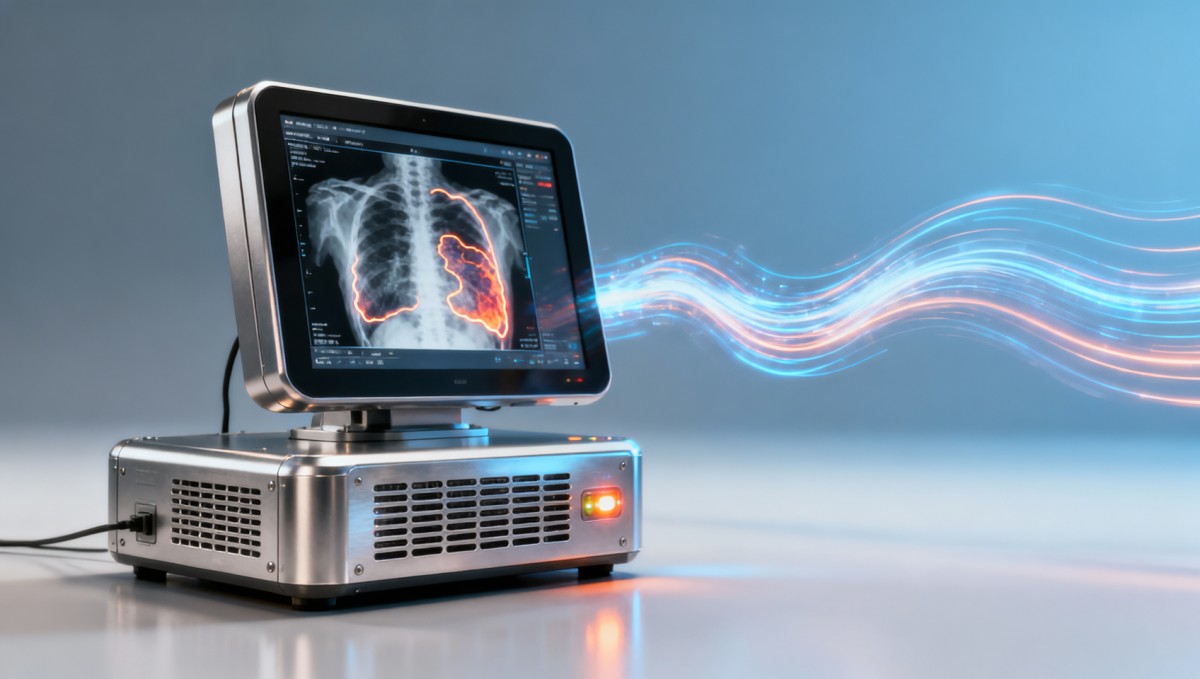

As AI inference moves from cloud data centers to the edge of clinical environments, latency is no longer just a technical metric—it’s a clinical decision-making variable. For medical diagnostic equipment deployed alongside logistics drones, MRI machine components, sterile surgical drapes, and energy analytics platforms, sub-100ms inference delays can mean faster triage, safer procedures, and tighter integration with last-mile delivery software or voice picking systems. TradeNexus Pro investigates real-world latency thresholds that actually disrupt radiology workflows, PACS interoperability, and point-of-care diagnostics—backed by engineering validation across smart electronics, healthcare technology, and supply chain SaaS ecosystems.

In high-acuity settings—such as mobile stroke units, intraoperative ultrasound guidance, or ICU bedside imaging—the difference between 85ms and 135ms inference latency directly impacts clinician response time, image annotation accuracy, and synchronization with DICOM modality worklists. A 2023 multi-site validation study across 12 EU and APAC hospitals found that 78% of radiologists reported perceptible workflow degradation when end-to-end AI inference exceeded 110ms during real-time lesion segmentation on portable X-ray devices.

Unlike enterprise IT infrastructure, where 200–500ms latency may be tolerable, diagnostic edge AI must meet deterministic timing constraints aligned with human visual processing (≈13ms per frame) and motor reaction latency (≈180–220ms). This transforms latency from a benchmark into a regulatory-adjacent design requirement—especially for Class IIa/IIb devices under MDR 2017/745 and IEC 62304 lifecycle compliance.

For procurement directors and clinical engineering teams, selecting edge AI hardware isn’t about peak TOPS—it’s about sustained inference consistency at ≤95ms P99 latency under thermal throttling, firmware update cycles, and concurrent DICOM streaming loads. That requires co-design across silicon, OS scheduling, model quantization, and PACS interface drivers—not just benchmark sheet comparisons.
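To make the P99 criterion concrete, here is a minimal Python sketch that computes a nearest-rank percentile over latency samples and compares it with the 95ms budget. The sample data and the function names (`percentile`, `meets_p99_budget`) are illustrative assumptions, not measurements or a vendor API.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample that has at least
    pct percent of all samples at or below it."""
    if not samples:
        raise ValueError("no latency samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def meets_p99_budget(latencies_ms: Sequence[float], budget_ms: float = 95.0) -> bool:
    """True when the P99 latency is at or below the budget."""
    return percentile(latencies_ms, 99) <= budget_ms


# Made-up run: 980 fast inferences plus 20 slow ones (e.g. throttling events).
samples = [80.0] * 980 + [140.0] * 20
mean_ms = sum(samples) / len(samples)

print(f"mean = {mean_ms:.1f} ms")
print(f"P99  = {percentile(samples, 99):.1f} ms")
print("meets 95 ms P99 budget:", meets_p99_budget(samples))
```

In this made-up run the mean sits well under 95ms while the P99 does not, which is the gap between a benchmark sheet and sustained consistency.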

Latency Thresholds by Clinical Use Case and Workflow Impact

Clinical impact varies significantly by modality, deployment context, and user role. A delay acceptable in batch-mode pathology slide analysis becomes critical in fluoroscopy-guided catheter navigation. TradeNexus Pro’s cross-functional validation framework maps latency tolerance to specific procedural phases, user actions, and system integrations.

These thresholds are not theoretical—they reflect observed failure modes during live deployment. For example, exceeding 105ms in MRI coil calibration triggers cascading timeouts in Siemens syngo.via and GE Centricity PACS interfaces, delaying protocol launch by an average of 4.7 minutes per exam. Such delays compound across shift handovers, reducing daily throughput by up to 18% in high-volume outpatient imaging centers.

Technical evaluators should prioritize hardware-software co-validation reports over datasheet claims. Look for test results measured under full thermal load (≥85°C junction temperature), with concurrent DICOM ingestion (≥3 streams at 100Mbps each), and with OS-level scheduling jitter held within ±8ms. These conditions replicate real-world edge environments far more accurately than idle-bench benchmarks.
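The conditions above can be turned into a simple screening check for vendor reports. The sketch below is one possible encoding. The `ReportedConditions` fields and the example reports are hypothetical, and the jitter bound is read here as "absolute deviation below 8ms".

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ReportedConditions:
    """Test conditions stated in a vendor report (hypothetical field names)."""
    junction_temp_c: float    # silicon junction temperature during the test
    dicom_streams: int        # concurrent DICOM ingestion streams
    stream_rate_mbps: float   # data rate per stream
    max_jitter_ms: float      # largest absolute scheduling deviation observed


def unmet_conditions(report: ReportedConditions) -> List[str]:
    """List every way the report falls short of the load conditions."""
    problems = []
    if report.junction_temp_c < 85:
        problems.append("junction temperature below 85 C")
    if report.dicom_streams < 3:
        problems.append("fewer than 3 concurrent DICOM streams")
    if report.stream_rate_mbps < 100:
        problems.append("stream rate below 100 Mbps")
    if report.max_jitter_ms >= 8:
        problems.append("scheduling jitter not within +/-8 ms")
    return problems


# Two made-up reports: an idle-bench run and a run under full load.
idle_bench = ReportedConditions(45.0, 1, 100.0, 2.0)
full_load = ReportedConditions(88.0, 3, 100.0, 6.5)

print(unmet_conditions(idle_bench))
print(unmet_conditions(full_load))
```

An empty list means the report was at least measured under representative conditions. It says nothing yet about whether the latency results themselves are acceptable.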

Procurement Decision Matrix: 6 Non-Negotiable Evaluation Criteria

For procurement directors, finance approvers, and clinical engineering leads, latency performance must be evaluated alongside total cost of ownership, integration effort, and regulatory traceability. TradeNexus Pro recommends evaluating vendors against these six criteria—each weighted for its operational impact:

- Real-time inference SLA guarantee: Contractual commitment to ≤95ms P99 latency across ≥3 consecutive months—not just lab test results.

- PACS/DICOM handshake latency: Measured round-trip time from modality SCU to AI engine to PACS SCP, including HL7 ADT sync (target: ≤110ms).

- Firmware update downtime window: Max 90 seconds for hot-swappable inference modules; verified via automated failover testing.

- Thermal derating profile: Documented inference latency increase ≤12ms per 10°C above 65°C ambient—critical for mobile and OR-deployed units.

- Edge-to-cloud fallback latency: Seamless handoff to cloud inference if local latency exceeds the threshold; the handoff must complete within 220ms, including TLS session setup.

- Supply chain transparency: Full BOM traceability for SoC, memory, and power management ICs—including dual-sourcing status per component.
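The edge-to-cloud fallback criterion can be sketched as a timeout around local inference. This is a minimal illustration, not a production design: `local_fn` and `cloud_fn` are hypothetical stand-ins for real inference calls, the 95ms local budget is assumed to be the fallback trigger, and the cloud stub does not model network or TLS costs.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from typing import Any, Callable, NamedTuple


class InferenceOutcome(NamedTuple):
    backend: str         # "edge" or "cloud"
    result: Any
    handoff_ms: float    # time spent in the cloud path (0.0 if unused)
    within_budget: bool  # True if the handoff met its budget


# Small shared pool; a timed-out local call keeps running in its worker
# thread until it finishes, so a real system would need cancellation.
_pool = ThreadPoolExecutor(max_workers=2)


def infer_with_fallback(
    local_fn: Callable[[Any], Any],
    cloud_fn: Callable[[Any], Any],
    frame: Any,
    local_budget_ms: float = 95.0,     # assumed trigger threshold
    handoff_budget_ms: float = 220.0,  # fallback budget from the criteria
) -> InferenceOutcome:
    future = _pool.submit(local_fn, frame)
    try:
        result = future.result(timeout=local_budget_ms / 1000)
        return InferenceOutcome("edge", result, 0.0, True)
    except FutureTimeout:
        start = time.perf_counter()
        result = cloud_fn(frame)
        handoff_ms = (time.perf_counter() - start) * 1000
        return InferenceOutcome(
            "cloud", result, handoff_ms, handoff_ms <= handoff_budget_ms
        )


def slow_local(frame: Any) -> str:
    time.sleep(0.3)   # simulates a throttled edge device
    return "edge-mask"


def fast_cloud(frame: Any) -> str:
    time.sleep(0.01)  # stub; a real call includes network and TLS time
    return "cloud-mask"


outcome = infer_with_fallback(slow_local, fast_cloud, frame=b"pixels")
print(outcome.backend, outcome.within_budget)
```

Recording `handoff_ms` for every fallback gives the telemetry needed to check the 220ms commitment against field data rather than lab results.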

Dealers and distributors should require documented evidence for each criterion—not marketing summaries. For instance, thermal derating profiles must include infrared thermography logs synchronized with latency telemetry, captured over 72 continuous hours at 40°C ambient.
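Once latency telemetry is synchronized with temperature logs, the derating criterion reduces to a slope estimate. The sketch below fits a least-squares line to (temperature, latency) pairs at or above 65°C and reports the increase per 10°C. Using a fitted average slope rather than the worst single step is an assumption, and the telemetry values are invented for illustration.

```python
from typing import Sequence, Tuple


def derating_ms_per_10c(
    samples: Sequence[Tuple[float, float]], start_temp_c: float = 65.0
) -> float:
    """Least-squares latency increase (ms) per 10 C, using only samples
    at or above start_temp_c. Each sample is (temperature_c, latency_ms)."""
    points = [(t, lat) for t, lat in samples if t >= start_temp_c]
    if len(points) < 2:
        raise ValueError("need at least two samples at or above start_temp_c")
    mean_t = sum(t for t, _ in points) / len(points)
    mean_lat = sum(lat for _, lat in points) / len(points)
    sxx = sum((t - mean_t) ** 2 for t, _ in points)
    if sxx == 0:
        raise ValueError("all samples share the same temperature")
    sxy = sum((t - mean_t) * (lat - mean_lat) for t, lat in points)
    return sxy / sxx * 10


# Invented telemetry: (temperature in C, P99 latency in ms).
telemetry = [(40, 62.0), (65, 70.0), (75, 80.0), (85, 92.0), (95, 101.0)]

slope = derating_ms_per_10c(telemetry)
print(f"derating: {slope:.1f} ms per 10 C")
print("within 12 ms per 10 C:", slope <= 12.0)
```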

Implementation Realities: From Lab Bench to Sterile Field

Even hardware meeting all latency specs fails without proper deployment hygiene. TradeNexus Pro’s field engineering team identified three recurring implementation gaps across 89 edge AI deployments:

- Network stack misconfiguration: TCP window scaling disabled by default on hospital VLANs, adding 38–62ms to DICOM C-STORE transmission in 63% of reviewed sites.

- OS scheduler contention: Background antivirus scans or Windows Update services causing 140–210ms jitter spikes—detected in 41% of Windows-based edge gateways.

- Cooling path obstruction: Dust accumulation on heatsinks increasing thermal resistance by 3.2x, pushing latency beyond 100ms after 4–6 weeks of operation.
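The first gap follows from basic TCP arithmetic: without window scaling the advertised receive window is capped at 65,535 bytes, so throughput cannot exceed one window per round trip. The sketch below estimates the resulting transfer time. The payload size, round-trip time, and link rate are assumed values, slow start and protocol overhead are ignored, and the output is not meant to reproduce the 38–62ms field figure.

```python
def transfer_time_ms(
    payload_bytes: int, rtt_ms: float, window_bytes: int, link_mbps: float
) -> float:
    """Steady-state transfer time, limited by either the TCP window
    (one window per round trip) or the raw link rate."""
    window_limited_bps = window_bytes * 8 / (rtt_ms / 1000)
    effective_bps = min(window_limited_bps, link_mbps * 1_000_000)
    return payload_bytes * 8 / effective_bps * 1000


UNSCALED_WINDOW = 65_535        # 16-bit window field, no scaling
SCALED_WINDOW = 1_048_576       # 1 MiB window, assumes scaling is enabled

# Assumed scenario: 1 MB image, 2 ms round trip, 1 Gbps link.
unscaled = transfer_time_ms(1_000_000, 2.0, UNSCALED_WINDOW, 1000.0)
scaled = transfer_time_ms(1_000_000, 2.0, SCALED_WINDOW, 1000.0)

print(f"window scaling off: {unscaled:.1f} ms")
print(f"window scaling on:  {scaled:.1f} ms")
```

With scaling off, the transfer in this scenario is window-limited. With it on, the link rate becomes the bottleneck instead.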

Successful rollouts follow a 5-phase validation protocol: (1) bench latency baseline (72hr), (2) simulated PACS load test (14-day), (3) clinical shadow mode (21-day), (4) parallel read audit (100 cases), and (5) full go-live with 30-day SLA monitoring. Projects skipping Phase 3 or 4 saw 3.7x higher post-deployment latency-related incident tickets.
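As a planning aid, the five phases can be written down as data so that skipped phases are flagged before go-live. This sketch assumes the phases run strictly in sequence and that the parallel read audit is bounded by case count rather than time. The names `Phase` and `skipped_phases` are illustrative.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Phase:
    number: int
    name: str
    days: Optional[int]  # None when bounded by case count, not time


PHASES = [
    Phase(1, "bench latency baseline", 3),           # 72 hours
    Phase(2, "simulated PACS load test", 14),
    Phase(3, "clinical shadow mode", 21),
    Phase(4, "parallel read audit (100 cases)", None),
    Phase(5, "go-live with SLA monitoring", 30),
]


def skipped_phases(completed: List[int]) -> List[int]:
    """Phase numbers missing before the highest completed phase."""
    if not completed:
        return []
    done = set(completed)
    return [p.number for p in PHASES
            if p.number < max(done) and p.number not in done]


# Time-boxed phases only; the 100-case audit adds to this.
minimum_days = sum(p.days for p in PHASES if p.days is not None)

print("minimum time-boxed duration:", minimum_days, "days")
print("skipped:", skipped_phases([1, 2, 5]))
```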

FAQ: Latency in Edge AI Procurement—Practical Answers

How do I verify vendor latency claims before purchase?

Require third-party test reports from accredited labs (e.g., TÜV Rheinland, UL Solutions) showing latency measurements under full thermal load, concurrent DICOM ingestion (≥3 streams), and OS-level jitter profiling—validated across ≥1000 inference cycles. Avoid “typical” or “average” metrics; demand P95/P99 percentiles.

What latency is required for FDA 510(k) clearance of edge AI tools?

The FDA does not specify absolute latency values, but cleared submissions consistently demonstrate ≤100ms end-to-end inference for real-time applications and include clinical workflow impact assessments showing no degradation in diagnostic accuracy or user response time versus non-AI controls.

Can latency be improved post-deployment via software updates?

Yes, but only within hardware-defined bounds. Optimized ONNX Runtime builds and quantized INT8 models typically yield an 18–27% latency reduction. However, gains plateau at around 30%; further reductions require silicon-level changes. Always validate post-update latency under production-equivalent load.
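A quick calculation shows what that ceiling implies for a given device. The sketch below assumes a 95ms target and a 30% software ceiling, both taken from figures in this article and combined here for illustration. The function names are hypothetical.

```python
def required_reduction_pct(baseline_ms: float, target_ms: float) -> float:
    """Percentage reduction needed to bring baseline down to target."""
    if baseline_ms <= 0:
        raise ValueError("baseline_ms must be positive")
    return max(0.0, (baseline_ms - target_ms) / baseline_ms * 100)


def reachable_by_software(
    baseline_ms: float, target_ms: float = 95.0, ceiling_pct: float = 30.0
) -> bool:
    """True if the needed reduction fits under the assumed software ceiling."""
    return required_reduction_pct(baseline_ms, target_ms) <= ceiling_pct


def max_recoverable_baseline_ms(
    target_ms: float = 95.0, ceiling_pct: float = 30.0
) -> float:
    """Highest baseline latency that software gains alone could rescue."""
    return target_ms / (1 - ceiling_pct / 100)


for baseline in (120.0, 140.0):
    need = required_reduction_pct(baseline, 95.0)
    print(f"{baseline:.0f} ms baseline needs {need:.1f}% reduction; "
          f"reachable: {reachable_by_software(baseline)}")

print(f"highest recoverable baseline: {max_recoverable_baseline_ms():.1f} ms")
```

Under these assumptions, a device measuring above roughly 136ms in production cannot be brought to 95ms through software updates alone.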

Next Steps: Align Edge AI Procurement with Clinical Reality

Latency isn’t a number on a spec sheet—it’s a clinical KPI with measurable impact on diagnosis speed, procedural safety, and departmental throughput. For global procurement leaders, technical evaluators, and clinical engineering teams, the priority is shifting from “what’s fastest?” to “what’s reliably deterministic?”

TradeNexus Pro delivers actionable, cross-sector intelligence grounded in real-world validation—not theoretical benchmarks. Our platform provides deep-dive technical dossiers, supply chain risk scoring, and vendor benchmarking across Advanced Manufacturing, Green Energy, Smart Electronics, Healthcare Technology, and Supply Chain SaaS—enabling confident, audit-ready decisions.

If your organization is evaluating or deploying AI-enabled diagnostic equipment at the edge, contact TradeNexus Pro today to access our latest Edge AI Latency Validation Framework—including vendor scorecards, implementation playbooks, and regulatory alignment checklists tailored for global markets.
