What Causes False Alarms in Predictive Maintenance Sensors?

False alarms in predictive maintenance sensors can frustrate operators, disrupt workflows, and weaken trust in monitoring systems. From poor calibration and signal noise to environmental interference and inconsistent data interpretation, several factors can trigger misleading alerts. Understanding these causes is the first step toward improving sensor accuracy, reducing unnecessary downtime, and helping teams make faster, more confident maintenance decisions.

Why operators should use a checklist first, not guesswork

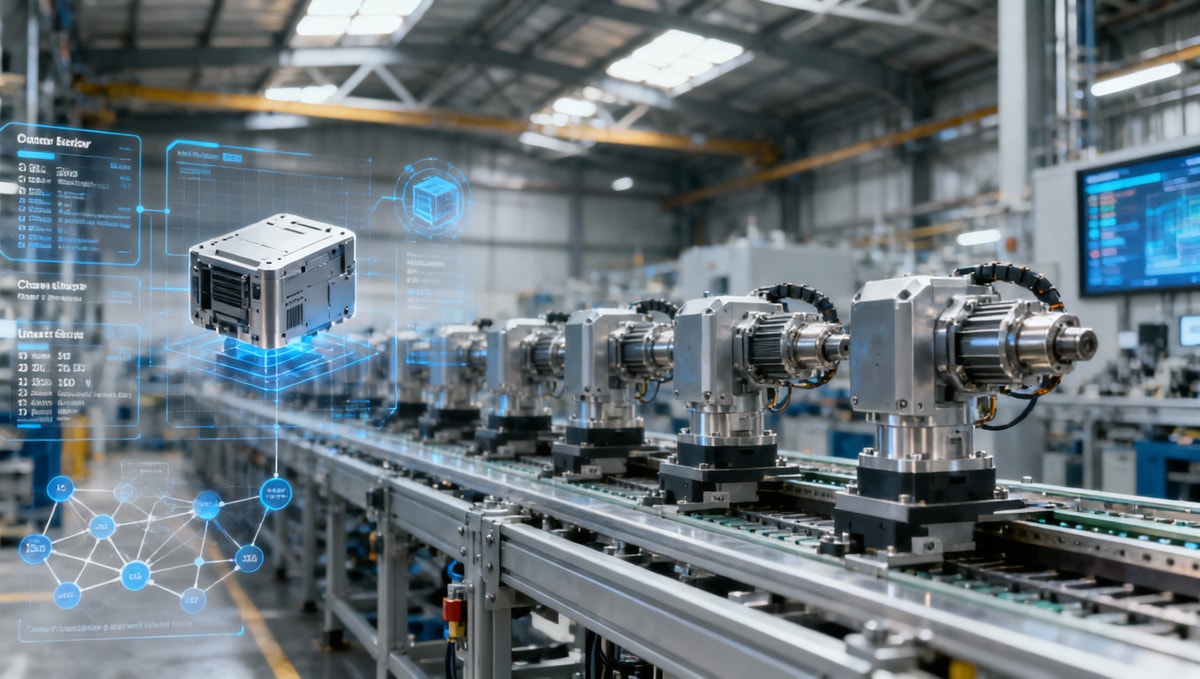

When predictive maintenance sensors start generating repeated warnings, the easiest mistake is to assume the machine is failing. In practice, many alerts are caused by setup gaps, unstable inputs, or threshold logic that does not fit the equipment’s real operating profile. For operators in manufacturing lines, energy systems, smart electronics production, healthcare equipment rooms, or warehouse automation, a checklist-based approach can shorten diagnosis from hours to minutes.

A useful rule is to separate the problem into 3 layers: sensor health, signal quality, and interpretation logic. If teams skip this order, they often replace components too early, stop a line unnecessarily, or escalate a minor drift into a maintenance event. In mixed industrial environments, even a 5% to 10% false alert rate can create serious hesitation, because operators begin to treat every alarm as “probably wrong.”
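The three-layer order can be sketched in a few lines of Python. This is only an illustration: the function name `first_failing_layer` and the placeholder checks are hypothetical, and a real site would replace each lambda with its own inspection or query.

```python
from typing import Callable, List, Optional, Tuple

# Each check returns True when its layer looks healthy.
Check = Callable[[], bool]


def first_failing_layer(layers: List[Tuple[str, Check]]) -> Optional[str]:
    """Run the checks in order and return the first layer that fails.

    Returns None when every layer passes, which suggests the alert
    deserves to be treated as a real machine condition.
    """
    for name, check in layers:
        if not check():
            return name
    return None


# Placeholder results for illustration only (hypothetical values).
layers = [
    ("sensor health", lambda: True),          # e.g. calibration in date, mounting tight
    ("signal quality", lambda: False),        # e.g. noise or missing samples found
    ("interpretation logic", lambda: False),  # not reached: review stops at first failure
]

print(first_failing_layer(layers))
```

Stopping at the first failing layer mirrors the point above: there is little value in tuning thresholds while the signal itself is still suspect.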

This is why predictive maintenance sensors need routine validation, not only installation. A sensor that performed well during commissioning may behave differently after 30, 90, or 180 days as temperature, vibration, dust load, or machine duty cycle changes. The goal is not to eliminate every alarm, but to make sure each alert has enough signal credibility to support action.

The first items to verify before treating an alert as real

- Confirm whether the sensor was calibrated within the planned service window, such as every 6 or 12 months depending on criticality.

- Check whether the machine was running in its normal load band when the alert occurred, not during startup, shutdown, cleaning, or changeover.

- Verify if recent environmental shifts occurred, including humidity spikes, electrical interference, washdown cycles, or ambient heat above the expected range.

- Review whether alert thresholds were inherited from another asset rather than tuned to this specific machine.

- Compare the suspicious signal against at least 1 secondary indicator such as motor current, temperature trend, pressure pattern, or vibration spectrum.

This sequence matters because operators often see the alarm first, while maintenance or engineering sees the context later. A structured first-pass review reduces unnecessary shutdowns and supports better communication across shifts. It also helps teams document recurring false positives in a way that can be shared with system integrators, sensor suppliers, or analytics providers.

Core checklist: the most common causes of false alarms in predictive maintenance sensors

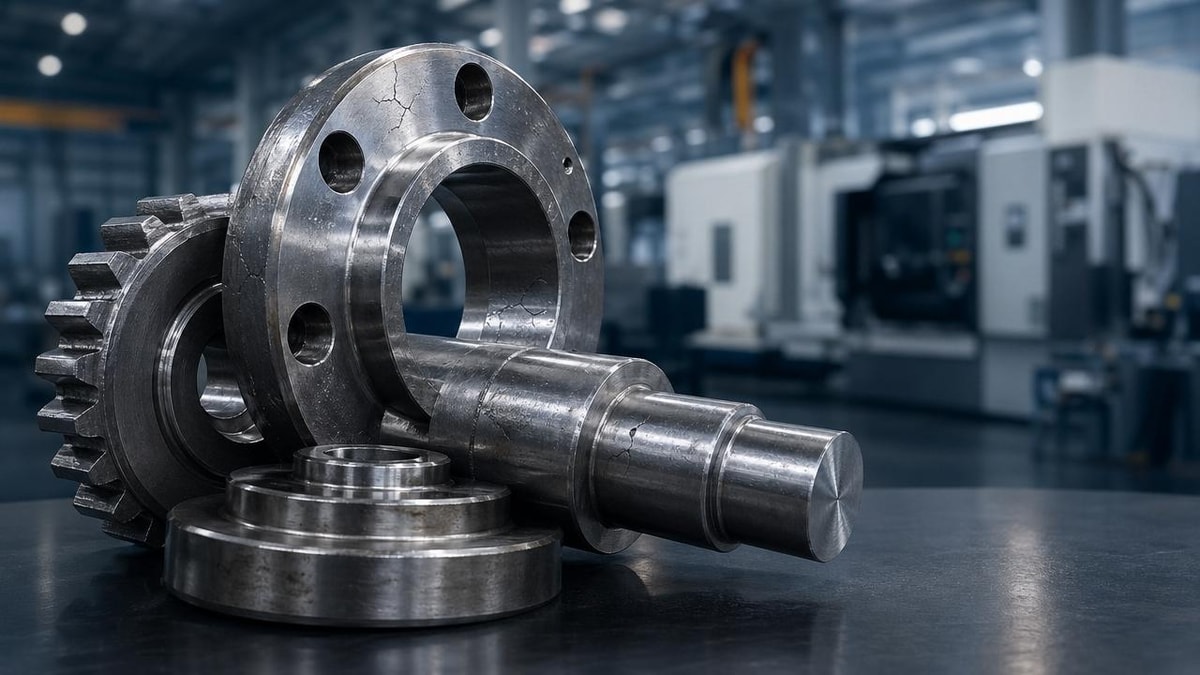

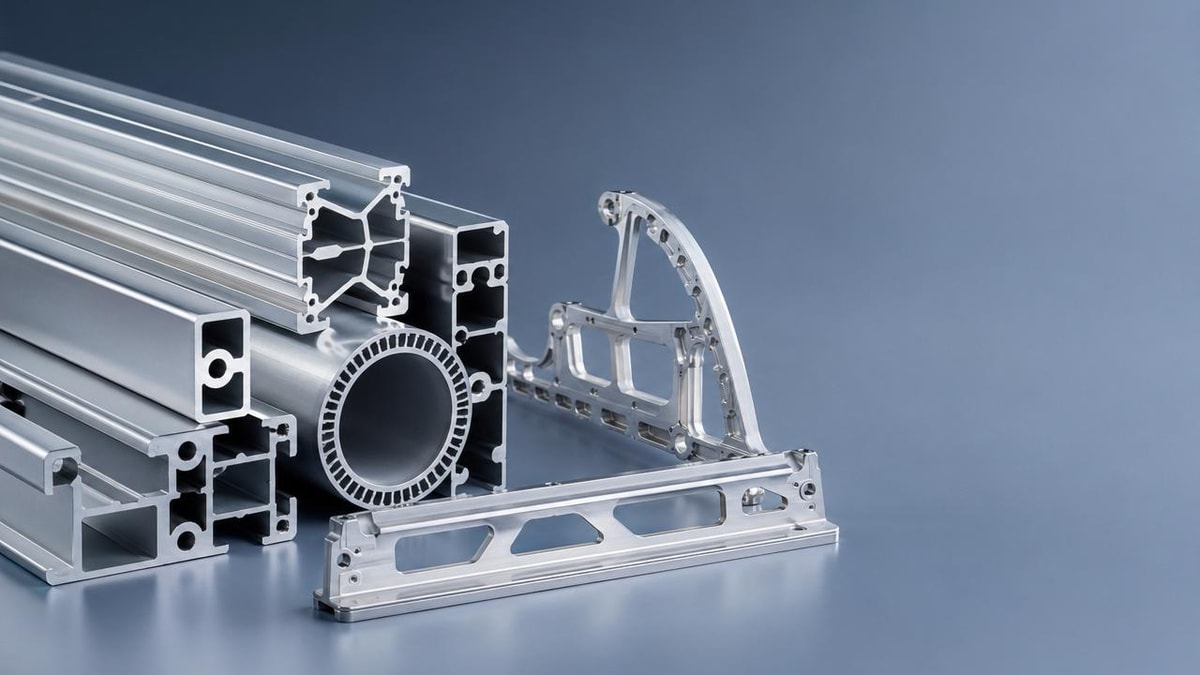

Most false alarms in predictive maintenance sensors come from a limited number of repeatable causes. Operators do not need to investigate every possibility at once. Start with the highest-probability checks: calibration quality, signal contamination, sensor placement, data timing, and threshold design. These five areas account for a large share of field-level alert confusion across rotating equipment, HVAC assets, conveyors, pumps, robotic cells, and digitalized utility systems.

The table below can be used as a practical inspection guide during an alarm review. It is especially useful for frontline teams who need to decide whether to continue running, inspect locally, or escalate for deeper analysis.

A key lesson from this checklist is that false alarms often reflect system mismatch rather than sensor failure. If one vibration node alerts during every batch transition, for example, the issue may be sampling logic or threshold sensitivity, not bearing damage. For many predictive maintenance sensors, alert quality improves significantly once teams review at least 2 to 4 weeks of baseline operating data instead of relying on day-one assumptions.

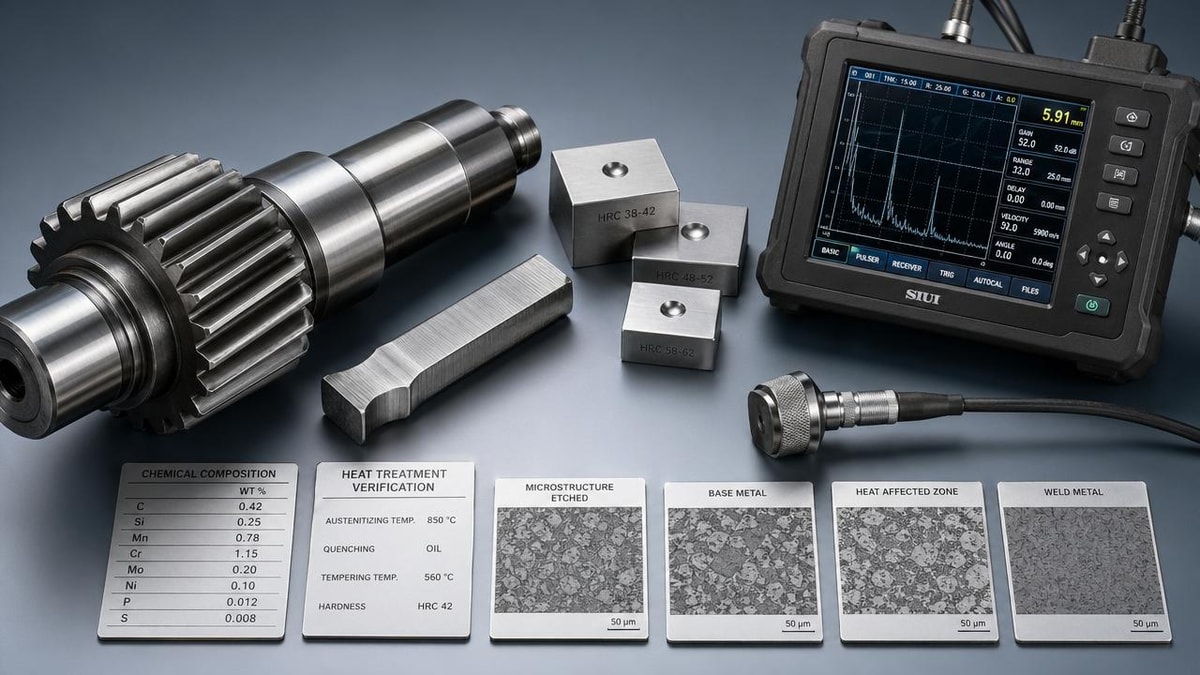

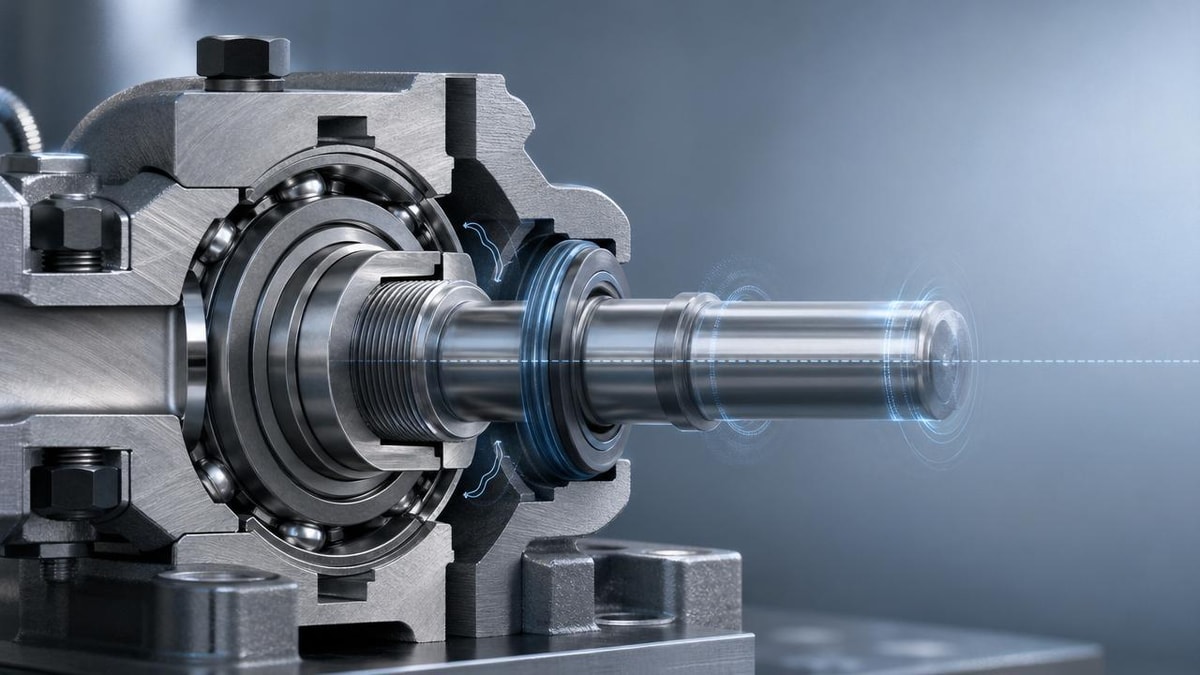

Calibration and drift checks

Calibration problems are among the easiest issues to overlook because the sensor continues to transmit data, making it appear healthy. Yet a small offset in a temperature, pressure, or vibration sensor can push readings across a narrow alert boundary. If a device has not been validated within the manufacturer’s recommended interval or after a major shock event, the alarm may reflect drift rather than actual machine stress.

Operators should also note whether calibration was performed under conditions similar to actual use. A sensor set in a clean room may behave differently once installed near oil mist, abrasive dust, or fluctuating voltage. In facilities with multiple lines, replacing one sensor with a nominally similar unit can still introduce deviation if sensitivity, mounting torque, or firmware revision differs.

As a practical standard, compare suspect readings with one independent reference before opening a maintenance work order. Even a quick side-by-side check over 15 to 30 minutes can reveal whether the signal is truly drifting or just crossing an aggressive warning threshold.
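A side-by-side check of this kind can be reduced to a simple offset calculation. The sketch below assumes paired readings taken at the same moments from the suspect sensor and an independent reference; the function names, the sample values, and the 0.5-unit tolerance are all illustrative assumptions, not recommended settings.

```python
from statistics import mean, pstdev
from typing import Sequence, Tuple


def offset_summary(sensor: Sequence[float], reference: Sequence[float]) -> Tuple[float, float]:
    """Return (mean offset, spread of offset) between paired readings."""
    if len(sensor) != len(reference) or not sensor:
        raise ValueError("need equal-length, non-empty paired readings")
    diffs = [s - r for s, r in zip(sensor, reference)]
    return mean(diffs), pstdev(diffs)


def looks_like_drift(sensor: Sequence[float], reference: Sequence[float], tolerance: float) -> bool:
    """True when the average offset exceeds the allowed tolerance."""
    bias, _spread = offset_summary(sensor, reference)
    return abs(bias) > tolerance


# Hypothetical temperature readings (same units) sampled over a short comparison window.
suspect = [71.8, 72.1, 72.0, 71.9]
reference = [70.0, 70.2, 70.1, 70.0]

print(looks_like_drift(suspect, reference, tolerance=0.5))
```

A steady offset with a small spread points toward drift or a scaling error, while a near-zero offset suggests the sensor is reading correctly and the warning threshold is the thing to review.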

Noise, interference, and data quality checks

Electrical noise is a major source of misleading alerts, especially where variable frequency drives, switching power supplies, conveyors, servo motors, or wireless gateways operate close together. Predictive maintenance sensors may detect spikes that are unrelated to machine condition but still large enough to trigger event logic. This is common when cable shielding is poor, grounding is inconsistent, or analog wiring runs too close to high-power lines.

Data quality issues are not limited to electrical noise. Missing samples, timestamp mismatches, low batteries in wireless nodes, and unstable network transmission can all distort trend interpretation. If the analytics layer receives data at irregular 20- to 40-second intervals instead of the expected interval, the system may create false patterns or misread temporary transients as failures.
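Irregular arrival times are easy to screen for before any trend analysis runs. The following sketch flags gaps that deviate from the expected sampling interval; the function name, the 10-second expected interval, and the 50% tolerance are assumptions chosen for the example.

```python
from typing import List, Sequence


def irregular_gaps(timestamps: Sequence[float], expected_s: float, tolerance: float = 0.5) -> List[int]:
    """Return indices whose gap from the previous sample is off-interval.

    A gap is flagged when it differs from expected_s by more than
    tolerance * expected_s. Timestamps are seconds, in ascending order.
    """
    flagged = []
    for i in range(1, len(timestamps)):
        gap = timestamps[i] - timestamps[i - 1]
        if abs(gap - expected_s) > tolerance * expected_s:
            flagged.append(i)
    return flagged


# Hypothetical arrival times: two samples arrive late (25 s and 40 s gaps).
arrivals = [0, 10, 20, 45, 55, 95]

print(irregular_gaps(arrivals, expected_s=10))  # prints [3, 5]
```

Samples flagged this way can be excluded or marked before thresholds are evaluated, so a communications problem is not mistaken for a machine problem.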

Operators should pay attention to signal shape, not just signal value. One isolated spike is often less meaningful than a stable trend over 8, 24, or 72 hours.
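One simple way to favor shape over single values is a persistence rule: raise the alert only when the reading stays above the limit for several consecutive samples. The sketch below is one such rule under assumed values; the threshold of 5.0 and the run length of 3 are placeholders, not recommendations.

```python
from typing import Iterable


def persistent_exceedance(values: Iterable[float], threshold: float, min_consecutive: int) -> bool:
    """True if values exceed threshold for at least min_consecutive samples in a row."""
    run = 0
    for v in values:
        run = run + 1 if v > threshold else 0  # any sample below the limit resets the run
        if run >= min_consecutive:
            return True
    return False


# Hypothetical vibration readings.
isolated_spike = [1.0, 1.1, 9.0, 1.0, 1.2]  # one outlier, then back to normal
rising_trend = [1.0, 5.5, 5.8, 6.1, 6.4]    # stays above the limit

print(persistent_exceedance(isolated_spike, threshold=5.0, min_consecutive=3))  # prints False
print(persistent_exceedance(rising_trend, threshold=5.0, min_consecutive=3))    # prints True
```

The trade-off is response time: a longer required run suppresses more transients but delays the alert when a real fault develops.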

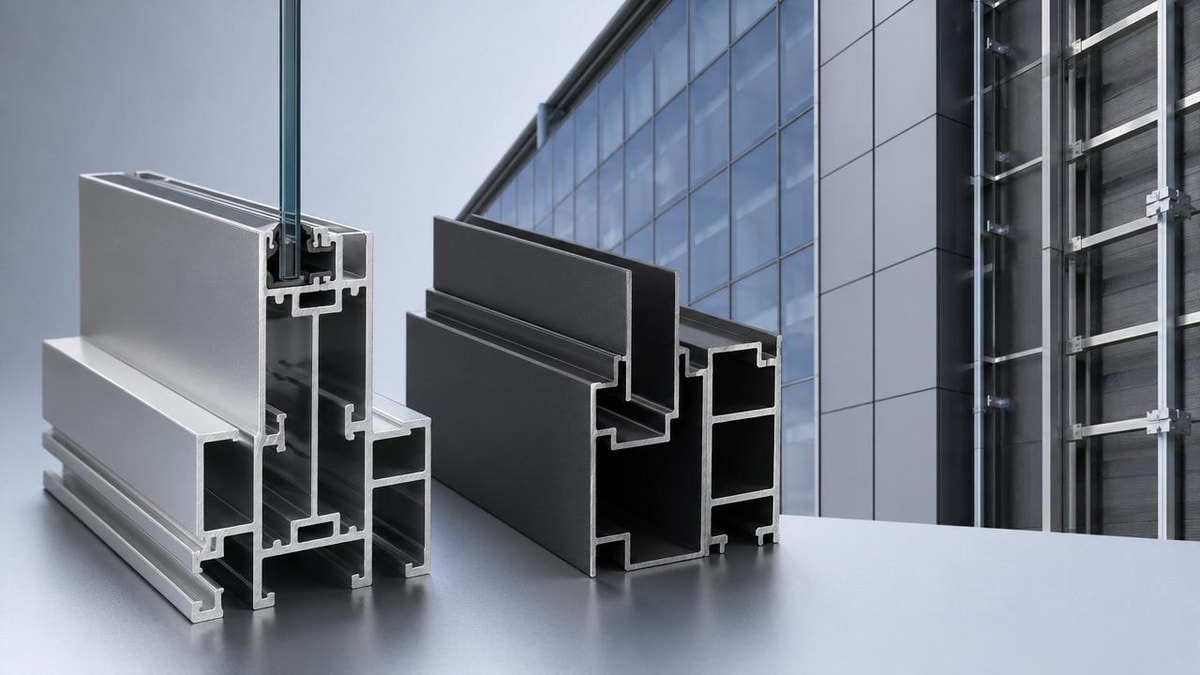

How operating conditions change alarm behavior across different environments

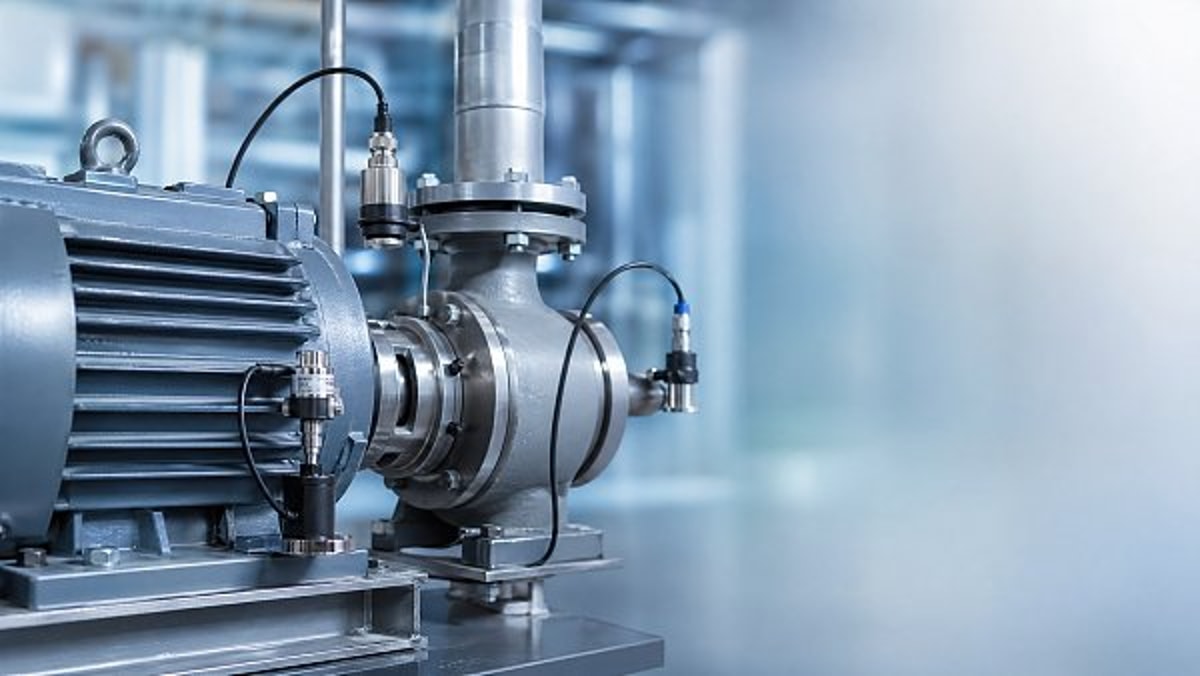

Not all false alarms come from poor hardware or weak analytics. Sometimes the sensor is reporting accurately, but the system interprets a normal process change as a fault. This is especially common when one monitoring template is copied across different sectors. A pump in a green energy utility loop does not behave like a robotic arm in smart electronics assembly, and neither follows the same thermal pattern as medical support equipment or warehouse sortation motors.

For operators, this means alarm credibility must be judged against actual duty cycle. A machine that starts 60 times per shift will produce very different signatures from one that runs continuously for 12 hours. Predictive maintenance sensors need context-aware thresholds, mode recognition, or segmented baselines to avoid flagging normal transitions as abnormal events.
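A segmented baseline can be as simple as keeping a separate alert limit per operating mode. The sketch below uses mean plus three standard deviations as the limit, which is a common starting heuristic rather than a rule taken from any particular product; the mode labels and readings are invented for illustration.

```python
from collections import defaultdict
from statistics import mean, pstdev
from typing import Dict, Iterable, Tuple


def build_limits(samples: Iterable[Tuple[str, float]], k: float = 3.0) -> Dict[str, float]:
    """Group historical (mode, value) samples by mode and derive an upper limit per mode."""
    by_mode = defaultdict(list)
    for mode, value in samples:
        by_mode[mode].append(value)
    # Upper limit = mean + k * population standard deviation of that mode's history.
    return {mode: mean(vals) + k * pstdev(vals) for mode, vals in by_mode.items()}


def is_abnormal(mode: str, value: float, limits: Dict[str, float]) -> bool:
    """Compare a reading against the limit for its own mode.

    Raises KeyError for a mode with no baseline, so unlabeled
    operating states surface instead of being silently judged.
    """
    return value > limits[mode]


# Hypothetical history: startup runs hotter than steady state.
history = [
    ("startup", 8.0), ("startup", 9.0), ("startup", 10.0),
    ("steady", 2.0), ("steady", 2.2), ("steady", 1.8),
]
limits = build_limits(history)

print(is_abnormal("startup", 9.5, limits))  # prints False: normal for startup
print(is_abnormal("steady", 9.5, limits))   # prints True: far outside the steady band
```

The same reading is judged differently depending on mode, which is exactly what a single copied threshold cannot do.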

The table below shows common operating scenarios where otherwise healthy systems can trigger false alerts. It helps teams decide whether the next action should be process review, sensor adjustment, or mechanical inspection.

This kind of scenario mapping is especially important in cross-functional operations. A supply chain facility may run assets at 40% capacity one week and 90% the next. If predictive maintenance sensors are configured around only one operating state, false alarms become almost inevitable. Operators should therefore log the machine state, product type, and shift condition whenever a recurring alert appears.

Environment-specific checks operators often miss

Advanced manufacturing and smart electronics

High-speed equipment often creates brief but legitimate dynamic peaks. If sensors sample too slowly or analytics aggregate data too aggressively, the system may misclassify these normal peaks. Check sampling rate, mounting rigidity, and whether machine mode changes are labeled in the data history.

Green energy and utilities

Outdoor assets face broader humidity, wind, and temperature ranges. A threshold that works at 20°C may be too narrow at 35°C or below freezing. Review enclosure integrity, sensor ingress protection, and whether weather-driven variation was included in the baseline window.

Healthcare technology and controlled facilities

Sensitive equipment rooms can generate false alarms after sanitation cycles, air balancing changes, or temporary relocation of nearby devices. If alerts appear after maintenance on HVAC, clean air systems, or backup power, treat environmental coupling as a primary check before assuming equipment failure.

Risk reminders: overlooked factors that weaken trust in predictive maintenance sensors

Trust is operational capital. Once teams believe alarms are unreliable, they delay response, override notifications, or create informal workarounds. That is why false alarms in predictive maintenance sensors should be treated as a process risk, not just a technical annoyance. In many facilities, the bigger loss comes from response fatigue rather than the individual alert itself.

Several overlooked factors tend to drive this loss of confidence. They usually sit between departments: maintenance assumes controls is watching data quality, controls assumes operations will report unusual process conditions, and operations assumes the vendor’s default settings are already optimized. Without clear ownership, low-grade false positives can continue for weeks.

Operators can reduce that risk by documenting each suspicious alert with 4 pieces of information: time of event, machine state, visible asset condition, and whether another signal confirmed it. This simple habit creates a reliable review trail over 2 to 6 weeks and helps distinguish random noise from pattern-based failure indicators.
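If the log is kept digitally, the four items map naturally onto a small record type. The class and field names below are hypothetical; the point is only that every entry carries the same four fields so entries can be compared later.

```python
from dataclasses import asdict, dataclass
from datetime import datetime


@dataclass(frozen=True)
class AlertNote:
    """One suspicious alert, captured with the four items listed above."""
    event_time: datetime
    machine_state: str               # e.g. "startup", "steady", "changeover"
    visible_condition: str           # what the operator could see or hear at the asset
    confirmed_by_other_signal: bool  # did a second indicator agree?


# Hypothetical entry for illustration.
note = AlertNote(
    event_time=datetime(2024, 3, 4, 14, 30),
    machine_state="changeover",
    visible_condition="no abnormal noise or heat",
    confirmed_by_other_signal=False,
)

# asdict() gives a plain dictionary that is easy to export to a spreadsheet or CSV.
print(asdict(note))
```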

Commonly ignored warning signs

- A sensor that alarms only after washdown, cleaning, or compressed-air blowoff may be reacting to moisture or transient vibration, not asset degradation.

- A temperature alert that appears after software updates may reflect new scaling logic or unit conversion issues rather than thermal stress.

- A wireless node with declining battery voltage may still communicate, but with enough instability to distort trend continuity.

- A newly replaced motor, bearing, fan, or coupling may have a normal signature different from the previous component, which can confuse historical baselines.

- Thresholds copied from similar equipment can still fail if speed, mounting surface, product load, or duty cycle differs by even 10% to 20%.
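The last warning sign can be screened with a quick comparison of operating parameters between the asset the thresholds came from and the asset they were copied to. The sketch below flags any parameter that differs by more than 10%, taking the lower end of the range mentioned above as an assumed cutoff; the parameter names and values are invented.

```python
from typing import Dict


def mismatched_fields(source: Dict[str, float], target: Dict[str, float],
                      max_rel_diff: float = 0.10) -> Dict[str, float]:
    """Return parameters whose relative difference exceeds max_rel_diff.

    Keys missing from target, or with a zero source value, are skipped
    because a relative difference cannot be computed for them.
    """
    flagged = {}
    for key, src in source.items():
        tgt = target.get(key)
        if tgt is None or src == 0:
            continue
        rel = abs(tgt - src) / abs(src)
        if rel > max_rel_diff:
            flagged[key] = rel
    return flagged


# Hypothetical profiles: thresholds were tuned on `donor` and copied to `recipient`.
donor = {"speed_rpm": 1500, "load_kg": 200}
recipient = {"speed_rpm": 1800, "load_kg": 205}

print(mismatched_fields(donor, recipient))  # speed differs by 20%, load by 2.5%
```

Any flagged parameter is a reason to re-baseline the recipient asset rather than trust the inherited thresholds.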

In practice, predictive maintenance sensors work best when the alarm model is reviewed after major process changes. That includes new product introduction, line balancing, firmware updates, maintenance overhaul, or layout changes affecting vibration and electromagnetic conditions. Any of these can alter signal interpretation even if the sensor itself is functioning correctly.

Action plan: what operators should do next when false alarms keep happening

If false alarms continue, the best response is not to disable predictive maintenance sensors, but to tighten the review process around them. A good action plan should define who checks what, in what order, and within what time frame. For many sites, a 24-hour triage path for repeated alerts and a 7-day corrective review are realistic starting points.

The most effective improvement plans combine frontline observations with engineering adjustments. Operators know when the process is behaving unusually, while technicians can verify wiring, calibration, and mounting. Data specialists or integrators then refine thresholds, filtering, and event logic based on actual operating evidence.

Use the checklist below to organize the response when an alert pattern seems unreliable.

- Record the alarm time, asset state, shift condition, and current production mode.

- Inspect the sensor physically for looseness, contamination, cable strain, or connector damage.

- Compare the reading with at least one secondary measurement or process variable.

- Review whether the alert occurred during startup, shutdown, cleaning, maintenance, or changeover.

- Check the last calibration, battery status, firmware revision, and communications quality.

- Escalate for threshold or algorithm review if the same false pattern repeats 3 or more times in a similar operating state.
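The escalation rule in the final step is straightforward to automate once false alerts are logged with their operating state. The sketch below counts repeats per alert and state pair; the alert names and the log contents are hypothetical.

```python
from collections import Counter
from typing import Iterable, List, Tuple

AlertKey = Tuple[str, str]  # (alert name, operating state)


def alerts_to_escalate(false_alerts: Iterable[AlertKey], min_repeats: int = 3) -> List[AlertKey]:
    """Return alert/state pairs judged false at least min_repeats times."""
    counts = Counter(false_alerts)
    return sorted(key for key, n in counts.items() if n >= min_repeats)


# Hypothetical log of alerts that operators judged to be false.
log = [
    ("vib-high", "changeover"),
    ("vib-high", "changeover"),
    ("temp-high", "steady"),
    ("vib-high", "changeover"),
]

print(alerts_to_escalate(log))  # prints [('vib-high', 'changeover')]
```

Counting per operating state, rather than per alert alone, keeps one noisy transition from hiding a genuine problem that appears in steady running.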

What to prepare before discussing optimization or replacement

If your team needs outside support, prepare practical information rather than general complaints. Share the sensor type, mounting location, measured variable, normal operating range, alert threshold, and known environmental conditions. Include at least 2 weeks of trend history if available, plus notes on whether the issue happens continuously or only in specific cycles.

For procurement and operations teams working across multiple sites, it also helps to identify whether the issue is local or systemic. If one sensor model behaves differently across 5 similar assets, the problem may be installation quality. If all sites show the same false alerts after a software update, the issue may sit in the analytics configuration.

At this stage, the right next step could be recalibration, filtering changes, threshold segmentation, enclosure upgrades, or a different sensing method altogether. The best decision depends on operating mode, criticality, data interval, and acceptable alarm frequency.

Contact us for sensor review, parameter checks, and fit-for-use guidance

If your team is dealing with unreliable predictive maintenance sensors, TradeNexus Pro can help you move from repeated alarm frustration to a clearer evaluation path. We support global industrial buyers, operators, and decision-makers with focused insight across advanced manufacturing, green energy, smart electronics, healthcare technology, and supply chain SaaS environments.

You can contact us to discuss practical topics such as parameter confirmation, sensor selection, threshold review, deployment fit, delivery lead time, integration planning, sample support, and quotation communication. If you are comparing vendors or trying to understand whether the issue is in the sensor, the environment, or the monitoring logic, we can help structure that review.

For faster support, prepare your asset type, sensing method, installation conditions, alert frequency, and any recent process changes. With that information, the conversation becomes more productive and the path to reducing false alarms in predictive maintenance sensors becomes much shorter.
