Digital Twin Manufacturing Without Reliable Data Is a Risk

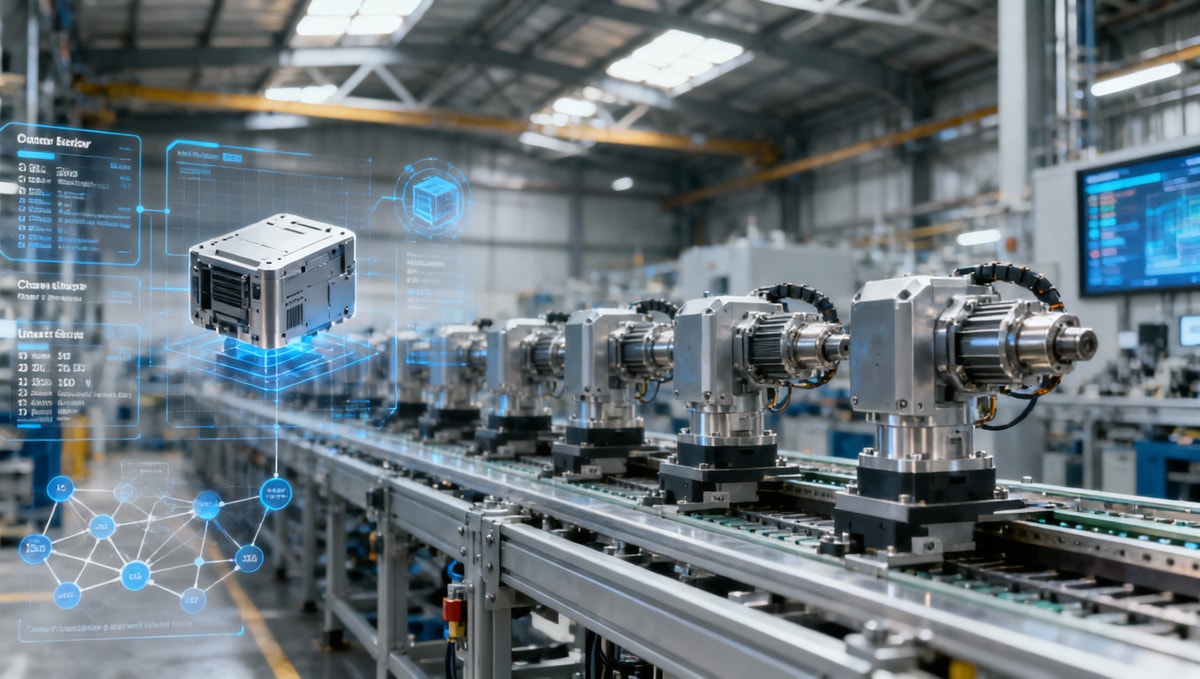

Digital twin manufacturing promises sharper visibility, faster decisions, and stronger operational control, but without reliable data it can amplify errors instead of reducing risk. For enterprise decision-makers, the real challenge is not adoption alone but building trustworthy data foundations that turn digital models into strategic assets rather than costly blind spots.

Why the market is shifting from digital twin ambition to data trust

Across advanced manufacturing, smart electronics, healthcare technology, green energy equipment, and logistics-linked production networks, the conversation around digital twin manufacturing has changed over the last 24 to 36 months. Leaders are no longer asking whether digital twins matter. They are asking whether the underlying data is reliable enough to support production planning, predictive maintenance, quality control, and supplier coordination without creating a second layer of operational risk.

This shift is not theoretical. Many enterprises completed pilot programs in a single line, single plant, or single asset environment and saw promising early gains. Yet once they tried to scale from 1 production cell to 5 plants, or from one machine class to 20 equipment types, data inconsistency became more visible. Tag naming drift, sensor calibration gaps, delayed ERP updates, and incomplete maintenance records often made the digital model look precise while masking weak assumptions.

For decision-makers, that is the strategic warning sign. A digital representation can only be as useful as the quality, timeliness, and governance of the data feeding it. In practical terms, if telemetry refreshes every 5 seconds but work-order data lags by 24 hours, the twin may support monitoring but fail at root-cause analysis or production scheduling. That disconnect is now one of the defining business issues in digital twin manufacturing.
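One simple way to make this mismatch visible is a freshness check for each use case. The Python sketch below is illustrative only. The use-case names, source names, and age limits in `REQUIREMENTS` are hypothetical assumptions, not recommended values. For each use case, it reports which source feeds are older than that use case can tolerate.

```python
from datetime import datetime, timedelta

# Hypothetical maximum acceptable age of each source feed, per use case.
# The values are illustrative assumptions, not recommendations.
REQUIREMENTS: dict[str, dict[str, timedelta]] = {
    "condition_monitoring": {"telemetry": timedelta(minutes=1)},
    "production_scheduling": {
        "telemetry": timedelta(minutes=1),
        "work_orders": timedelta(hours=1),
    },
}


def stale_sources(last_update: dict[str, datetime], now: datetime) -> dict[str, list[str]]:
    """For each use case, list the sources that are missing or too old.

    An empty list means the use case is backed by sufficiently fresh data.
    """
    report: dict[str, list[str]] = {}
    for use_case, limits in REQUIREMENTS.items():
        report[use_case] = [
            source
            for source, max_age in limits.items()
            if source not in last_update or now - last_update[source] > max_age
        ]
    return report


now = datetime(2024, 1, 1, 12, 0)
feeds = {
    "telemetry": now - timedelta(seconds=5),   # refreshed every few seconds
    "work_orders": now - timedelta(hours=24),  # lagging by a day
}
print(stale_sources(feeds, now))
# {'condition_monitoring': [], 'production_scheduling': ['work_orders']}
```

In this example, telemetry is 5 seconds old and work orders are 24 hours old. Condition monitoring passes, but scheduling is flagged. This mirrors the monitoring-versus-scheduling gap described in the paragraph above.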

The strongest signals enterprise leaders should notice

The first signal is that investment priorities are moving upstream. Instead of focusing only on visualization layers, more companies are allocating budget to data integration, industrial connectivity, master data cleanup, and governance workflows. In many programs, 40% to 60% of implementation effort now sits in data preparation rather than interface design. That is a sign of maturity, not slowdown.

The second signal is broader executive involvement. Digital twin manufacturing is no longer owned solely by plant engineering or IT architecture. Procurement leaders, supply chain managers, quality heads, and operations directors increasingly need to validate whether the model reflects actual purchasing lead times, material substitutions, supplier variability, and compliance-related process constraints.

The third signal is a rising emphasis on operational confidence. A twin that helps reduce unplanned downtime by even 5% to 10% can justify investment, but a twin that misrepresents cycle time variability or energy consumption can distort production decisions. As a result, organizations are shifting from “Can we build it?” to “Can we trust it at scale?”

Typical change pattern in enterprise adoption

The trend below captures how digital twin manufacturing programs often evolve as organizations move from early enthusiasm to disciplined scaling.

What matters in this progression is that risk grows with scale. A local data issue may only affect one dashboard in the pilot stage, but in a scaled environment it can affect inventory positioning, throughput assumptions, maintenance timing, and customer delivery commitments. That is why digital twin manufacturing is increasingly being evaluated as a data discipline as much as a software initiative.

What is driving the risk gap in digital twin manufacturing

Several forces are widening the gap between digital twin expectations and operational reality. The first is system fragmentation. Many enterprises still run production, quality, maintenance, procurement, and logistics data across separate platforms with different update frequencies. A machine may report condition data continuously, while supplier lead time assumptions in the planning system are updated weekly or even monthly. The twin appears unified, but the source logic is not.

The second driver is the rise of multi-variable manufacturing environments. In sectors such as battery systems, electronics assemblies, medtech components, and precision industrial products, product variants have increased significantly. A plant that used to manage 50 to 80 active SKUs may now handle 200 or more with shorter production windows. That makes digital twin manufacturing more valuable, but also more dependent on accurate routing data, bill-of-material versions, and change management controls.

The third driver is the pressure for faster response. Procurement disruptions, energy volatility, labor shortages, and compliance demands force teams to make decisions in days rather than weeks. A digital twin can support rapid scenario testing, but if the source data is 10% incomplete or key values are manually overridden without traceability, scenario outputs may look analytical while remaining operationally fragile.

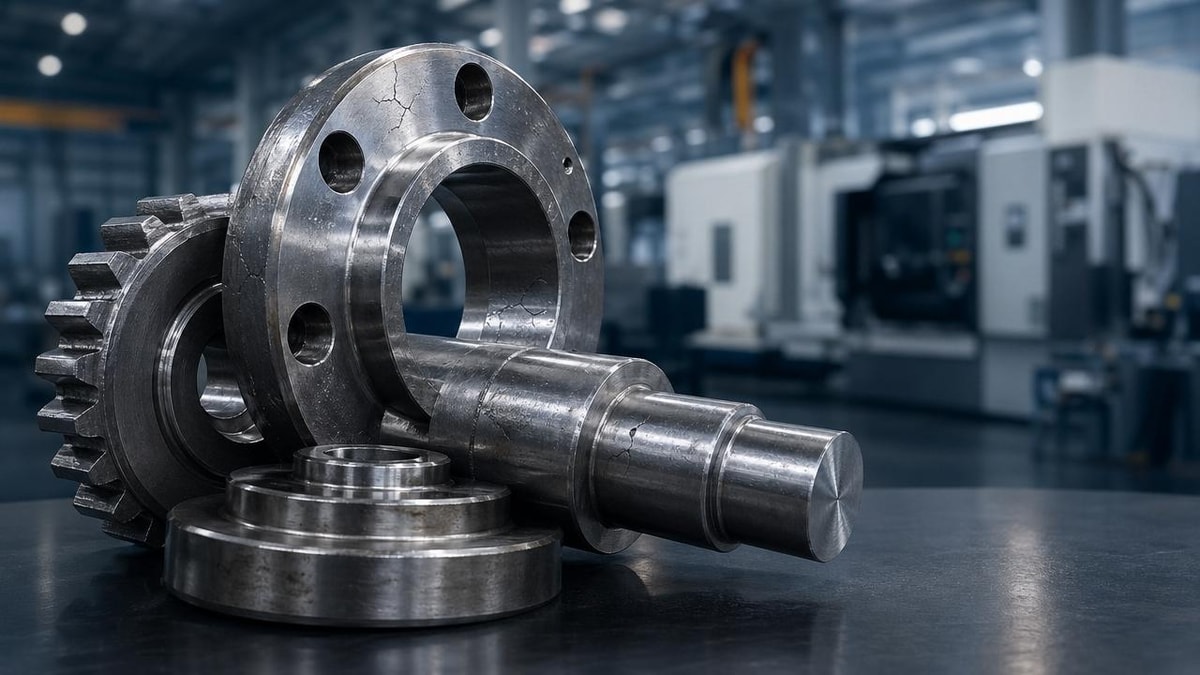

Core causes behind unreliable models

In practice, unreliable digital twin manufacturing environments usually do not fail because of one catastrophic flaw. They weaken through small, cumulative mismatches. Sensor drift, inconsistent asset IDs, outdated machine baselines, undocumented process changes, and missing downtime codes each seem manageable on their own. Together, they can undermine model accuracy across a 12-month planning cycle.

Another common cause is unclear data ownership. Operations teams may assume IT owns integration quality. IT may assume engineering validates machine semantics. Procurement may assume supplier lead time logic sits with planning. When ownership is blurred across 4 to 6 functional teams, the twin has no reliable mechanism for correction, validation, or escalation.

A third cause is uneven governance between physical and digital change. Plants often have disciplined procedures for changing tooling, layouts, or quality checkpoints, but weaker controls for updating digital attributes in the corresponding model. The physical process changes on Monday; the twin reflects it two weeks later. During that gap, analysis quality drops even if dashboards still look stable.

Key drivers and their enterprise impact

The following table summarizes the most common forces increasing risk in digital twin manufacturing and what leaders should expect them to affect first.

For enterprise leaders, the lesson is direct: the future of digital twin manufacturing will be shaped less by visual sophistication and more by the strength of data architecture, governance cadence, and cross-functional operating discipline. Organizations that recognize this early are more likely to convert pilots into enterprise value.

Who feels the impact first when data reliability is weak

The effects of poor data quality do not land evenly across the business. Operations teams often feel the earliest friction because they rely on digital twin manufacturing outputs to evaluate downtime patterns, throughput constraints, and maintenance windows. If the model does not match line reality within a reasonable tolerance, frontline trust can drop in less than one quarter.

Procurement and supply chain leaders feel the second-order impact. A digital twin connected to production planning can support smarter sourcing decisions, especially when lead times fluctuate between 2 and 8 weeks. But if supplier inputs, material substitutions, or inbound timing assumptions are incomplete, the twin may overstate resilience. That can lead to optimistic production commitments, avoidable expediting costs, or excess safety stock.

Quality and compliance functions also face elevated exposure, particularly in sectors where traceability and process consistency matter. If process deviations, environmental conditions, or inspection outcomes are not synchronized correctly, digital twin manufacturing may fail to support investigations or preventive actions. In regulated or high-spec environments, that weakens both decision speed and audit readiness.

Impact by role and business function

Decision-makers should treat impact mapping as an early-stage governance step, not a post-implementation review. The same data issue can create different types of damage depending on who depends on it and how often they use it.

- Plant operations may see distorted bottleneck analysis, especially when cycle time assumptions are not refreshed every shift or every day.

- Maintenance leaders may mis-prioritize interventions if failure signatures are based on incomplete runtime or condition history.

- Procurement teams may source against outdated production mix assumptions, increasing shortages or overbuying risk.

- Executive teams may overestimate scalability if pilot results were achieved under unusually clean or manually corrected data conditions.

This is why digital twin manufacturing should be assessed not only by technical success metrics, but also by decision dependency. A dashboard used once a month carries different risk than a model informing daily line balancing or weekly supplier allocations. The more frequently a twin influences action, the higher the cost of hidden data weakness.

A practical way to classify exposure

A simple exposure model can help leaders prioritize where to validate first before expanding the digital twin manufacturing footprint.

- Identify which decisions are made hourly, daily, weekly, and monthly using twin outputs.

- Rank source systems by freshness, completeness, and ownership clarity on a 1 to 5 scale.

- Flag high-frequency decisions supported by low-confidence data as immediate governance priorities.

- Review whether manual workarounds are masking underlying integration or model design issues.
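The first three steps above can be expressed as a small scoring routine. The fourth step, reviewing manual workarounds, remains a human judgment. This Python sketch rests on several assumptions:

- The system names (MES, ERP, CMMS) and the 1 to 5 scores are hypothetical.
- A source's confidence is conservatively taken to equal its weakest dimension.
- The frequency cut-off and confidence threshold are placeholders that each organization would set for itself.

```python
from dataclasses import dataclass

# Higher rank = decision made more often.
FREQUENCY_RANK = {"monthly": 1, "weekly": 2, "daily": 3, "hourly": 4}


@dataclass
class SourceScore:
    name: str
    freshness: int     # 1 (weak) to 5 (strong)
    completeness: int  # 1 to 5
    ownership: int     # 1 to 5

    def confidence(self) -> int:
        # Assumption: a source is only as trustworthy as its weakest dimension.
        return min(self.freshness, self.completeness, self.ownership)


@dataclass
class Decision:
    name: str
    frequency: str     # key of FREQUENCY_RANK
    sources: list[str]


def governance_priorities(decisions, scores, min_frequency="daily", min_confidence=3):
    """Flag decisions made at least as often as `min_frequency` that rely on
    any source whose confidence is below `min_confidence`, weakest first."""
    by_name = {s.name: s for s in scores}
    flagged = []
    for d in decisions:
        if FREQUENCY_RANK[d.frequency] < FREQUENCY_RANK[min_frequency]:
            continue  # infrequent decision: lower immediate exposure
        weakest = min(by_name[s].confidence() for s in d.sources)
        if weakest < min_confidence:
            flagged.append((d.name, weakest))
    return sorted(flagged, key=lambda item: item[1])


scores = [
    SourceScore("MES", freshness=4, completeness=4, ownership=5),
    SourceScore("ERP", freshness=3, completeness=2, ownership=4),
    SourceScore("CMMS", freshness=2, completeness=3, ownership=2),
]
decisions = [
    Decision("line balancing", "daily", ["MES", "ERP"]),
    Decision("maintenance prioritization", "weekly", ["CMMS"]),
    Decision("bottleneck review", "hourly", ["MES"]),
    Decision("supplier allocation", "monthly", ["ERP"]),
]
print(governance_priorities(decisions, scores))
# [('line balancing', 2)]
```

The weekly maintenance decision relies on a weak source, yet it is not flagged at the daily cut-off. Lowering `min_frequency` to "weekly" would add it. This shows how the frequency threshold, not just data quality, shapes the priority list.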

Enterprises that perform this review early usually gain a better view of where digital twin manufacturing adds real control and where it currently introduces ambiguity. That distinction matters for investment timing, rollout sequencing, and executive confidence.

What stronger digital twin manufacturing programs are doing differently

The strongest programs are not waiting for perfect data before moving. Instead, they define what data must be trusted for each use case and build governance around that threshold. For example, a predictive maintenance twin may require high-frequency sensor quality and equipment history integrity, while a capacity-planning twin may depend more on routing accuracy, actual cycle time ranges, and shift-level production reporting.

This use-case discipline matters because digital twin manufacturing often fails when organizations try to solve every problem with one unified model before data readiness exists. A better path is staged reliability. In the first 90 days, companies may validate data lineage and naming standards for a limited asset scope. In the next 6 to 12 months, they can extend governance to multi-line or multi-site decision flows with measurable controls.

Another difference is the presence of operating rules around digital updates. High-performing teams establish triggers for when the model must be revised, who approves critical changes, how exceptions are logged, and what level of variance is acceptable before model outputs are paused or marked low confidence. These controls are not glamorous, but they are often the difference between a tool that informs and a tool that misleads.
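A variance rule of this kind can be made explicit in a few lines. The sketch below assumes that the team periodically pairs a twin prediction, such as a cycle time, with the measured value. It then uses the mean absolute percentage error over those pairs. The 5% and 15% thresholds and the status labels are hypothetical placeholders, not industry standards.

```python
from statistics import mean


def output_status(pairs: list[tuple[float, float]], warn_pct: float = 5.0, pause_pct: float = 15.0) -> str:
    """Classify twin outputs from recent (predicted, observed) validation pairs.

    Uses mean absolute percentage error (MAPE). Thresholds and labels are
    illustrative assumptions.
    """
    errors = [abs(p - o) / abs(o) * 100 for p, o in pairs if o != 0]
    if not errors:
        return "paused"  # no usable evidence, so do not present outputs as trusted
    mape = mean(errors)
    if mape > pause_pct:
        return "paused"
    if mape > warn_pct:
        return "low_confidence"
    return "ok"


# Predicted vs measured cycle times in seconds (hypothetical values).
print(output_status([(41, 40), (44, 40), (38, 40)]))  # low_confidence
```

Averaging over several checks keeps a single outlier from pausing the model. If the team is more concerned about occasional large misses, it could use the maximum error instead.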

Priority controls that improve trust

For enterprise decision-makers evaluating readiness, the following controls are usually more valuable than adding more visual features or broader simulation scope too early.

- A shared asset and process naming standard used consistently across MES, ERP, maintenance, and analytics systems.

- Defined freshness thresholds, such as sub-minute telemetry for condition monitoring and same-shift updates for production status.

- A data owner assigned for each critical source, with exception handling rules and escalation timing.

- Validation checkpoints comparing digital twin outputs with physical process outcomes at weekly or monthly intervals.
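As one illustration of the first control, a naming audit can catch separator and case drift before it breaks joins between systems. The sketch assumes a hypothetical `<site>-<line>-<asset>` convention such as `PL1-L03-CNC07`. The regular expression, system names, and sample tags are invented for the example. The audit reports two things:

- Tags that cannot be normalized to the standard.
- Canonical assets that are missing from one or more systems.

```python
import re

# Hypothetical naming standard: <site>-<line>-<asset>, e.g. "PL1-L03-CNC07".
CANONICAL = re.compile(r"^[A-Z]{2}\d-L\d{2}-[A-Z]+\d{2}$")


def normalize(tag: str) -> str:
    """Uppercase a tag and unify separator variants (spaces, _, ., /) to '-'."""
    return re.sub(r"[\s_./]+", "-", tag.strip().upper())


def audit(systems: dict[str, list[str]]):
    """Return (tags that violate the standard, assets missing from some systems)."""
    invalid: dict[str, list[str]] = {}
    present: dict[str, set[str]] = {}
    for system, tags in systems.items():
        for tag in tags:
            norm = normalize(tag)
            if CANONICAL.match(norm):
                present.setdefault(norm, set()).add(system)
            else:
                invalid.setdefault(system, []).append(tag)
    all_systems = set(systems)
    missing = {
        asset: sorted(all_systems - found)
        for asset, found in present.items()
        if found != all_systems
    }
    return invalid, missing


systems = {
    "MES": ["PL1-L03-CNC07", "PL1-L03-CNC08"],
    "ERP": ["pl1_l03_cnc07", "PL1 L03 CNC08"],
    "CMMS": ["PL1-L03-CNC07", "PL1-L3-CNC8"],
}
invalid, missing = audit(systems)
print(invalid)  # {'CMMS': ['PL1-L3-CNC8']}
print(missing)  # {'PL1-L03-CNC08': ['CMMS']}
```

Normalization of this kind only fixes cosmetic drift. A renumbered or reused asset ID still needs a human owner to resolve it, which is why the data-owner control matters alongside it.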

These controls create a realistic framework for scaling digital twin manufacturing. They also support better communication across procurement, operations, engineering, and executive management. Instead of debating whether the twin is “good” or “bad,” teams can discuss which use cases are decision-ready and which need stronger data discipline first.

A staged decision framework for leaders

Before expanding budget or scope, leaders can evaluate digital twin manufacturing maturity through a phased review model.

This framework helps leaders decide whether to optimize, pause, or expand. In digital twin manufacturing, restraint can be a sign of strategic maturity. Scaling too early often costs more than spending an extra quarter on data trust and governance design.

How enterprise decision-makers should respond over the next 12 months

The next phase of digital twin manufacturing will reward organizations that treat data trust as a board-level operational capability rather than a technical afterthought. For most enterprises, that means reviewing where digital models are already influencing spend, output, service levels, and risk decisions. If a twin affects production, inventory, maintenance, or customer fulfillment, it should be subject to the same discipline as other critical operating systems.

A practical response starts with prioritization. Leaders do not need to fix every dataset at once. They need to identify the top 3 to 5 decisions where digital twin manufacturing can create high value, then verify whether source data is trustworthy enough for those outcomes. In many businesses, the strongest first areas are bottleneck analysis, maintenance prioritization, energy-intensive process optimization, and supplier-linked production planning.

It is also important to align internal stakeholders early. Procurement, supply chain, engineering, quality, and IT teams should agree on what “decision-ready” means for each use case. This can include refresh frequency, acceptable variance ranges, escalation timing, and documentation rules. When those standards are explicit, digital twin manufacturing becomes easier to evaluate and harder to oversell internally.

Questions leaders should ask before the next investment decision

Before approving the next rollout phase, enterprise decision-makers should challenge assumptions with concrete operational questions rather than software-centered ones.

- Which decisions will this digital twin manufacturing deployment support, and how often are those decisions made?

- What percentage of required source fields is complete, validated, and refreshed within the needed time window?

- Where are manual corrections still required, and can they be reduced within the next 2 quarters?

- What is the fallback plan if model confidence drops during a production change, supply disruption, or system outage?
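The second question can be answered with a field-level readiness count rather than an impression. This Python sketch assumes that each record carries an `updated_at` timestamp. The field names, validation rule, and 24-hour window are hypothetical. Each required field value is classified as missing, invalid, stale, or ready, and the ready share is the percentage the question asks for.

```python
from collections import Counter
from datetime import datetime, timedelta


def field_readiness(records, required_fields, validators, max_age, now):
    """Classify every required field value as missing, invalid, stale, or ready.

    Returns (percentage ready, Counter of outcomes).
    """
    counts = Counter()
    for rec in records:
        is_stale = now - rec["updated_at"] > max_age
        for field in required_fields:
            value = rec.get(field)
            check = validators.get(field, lambda v: True)  # no rule = accept
            if value is None:
                counts["missing"] += 1
            elif not check(value):
                counts["invalid"] += 1
            elif is_stale:
                counts["stale"] += 1
            else:
                counts["ready"] += 1
    total = sum(counts.values())
    pct = 100.0 * counts["ready"] / total if total else 0.0
    return pct, counts


now = datetime(2024, 1, 1, 12, 0)
records = [
    {"updated_at": now - timedelta(hours=2), "cycle_time_s": 42.0, "routing_id": "R-100"},
    {"updated_at": now - timedelta(hours=30), "cycle_time_s": 40.0, "routing_id": "R-101"},
    {"updated_at": now - timedelta(hours=1), "cycle_time_s": -5.0, "routing_id": None},
]
pct, counts = field_readiness(
    records,
    required_fields=["cycle_time_s", "routing_id"],
    validators={"cycle_time_s": lambda v: v > 0},  # hypothetical rule
    max_age=timedelta(hours=24),
    now=now,
)
print(round(pct, 1), dict(counts))
# 33.3 {'ready': 2, 'stale': 2, 'invalid': 1, 'missing': 1}
```

The breakdown shows whether the gap comes from missing data, bad values, or slow refresh. Each cause points to a different owner and fix.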

These questions shift the conversation from digital twin ambition to decision quality. That is where the market is heading. In the coming year, organizations that can prove data reliability, governance discipline, and use-case clarity will gain more value from digital twin manufacturing than those pursuing scale without operational trust.

Why informed market visibility matters

Because digital twin manufacturing sits at the intersection of operations, supply chains, and industrial technology, decision-makers need more than product marketing claims. They need credible insight into how data practices, system integration choices, and market shifts affect implementation risk. That is especially true in cross-border supply environments where lead times, component substitutions, and plant-level conditions change quickly.

TradeNexus Pro supports this need by helping enterprise leaders assess trend direction, supplier-side implications, and decision-relevant signals across advanced manufacturing, green energy, smart electronics, healthcare technology, and supply chain SaaS. If your team is evaluating digital twin manufacturing strategy, we can help you frame the right questions before the next investment cycle.

Why choose us for strategic guidance on digital twin manufacturing

TradeNexus Pro is built for enterprise buyers, supply chain managers, and senior decision-makers who need focused intelligence rather than broad, low-detail aggregation. Our coverage connects digital twin manufacturing to the wider forces shaping industrial competitiveness: supplier risk, manufacturing transformation, system interoperability, procurement timing, and operational resilience.

If your organization is deciding how to strengthen digital twin manufacturing without increasing data-related exposure, we can support deeper evaluation across practical areas such as parameter confirmation, rollout sequencing, integration dependencies, data-governance readiness, delivery timing, and use-case prioritization. We can also help your team compare different implementation paths against real operational constraints rather than generic digital transformation narratives.

Contact us to discuss your current digital twin manufacturing priorities, including platform selection criteria, supplier and system fit, data quality checkpoints, deployment scope, customized evaluation frameworks, and commercial planning. If you need support on roadmap definition, quote-stage comparison, or readiness assessment for multi-site adoption, our team can help you identify the most decision-relevant next steps.
