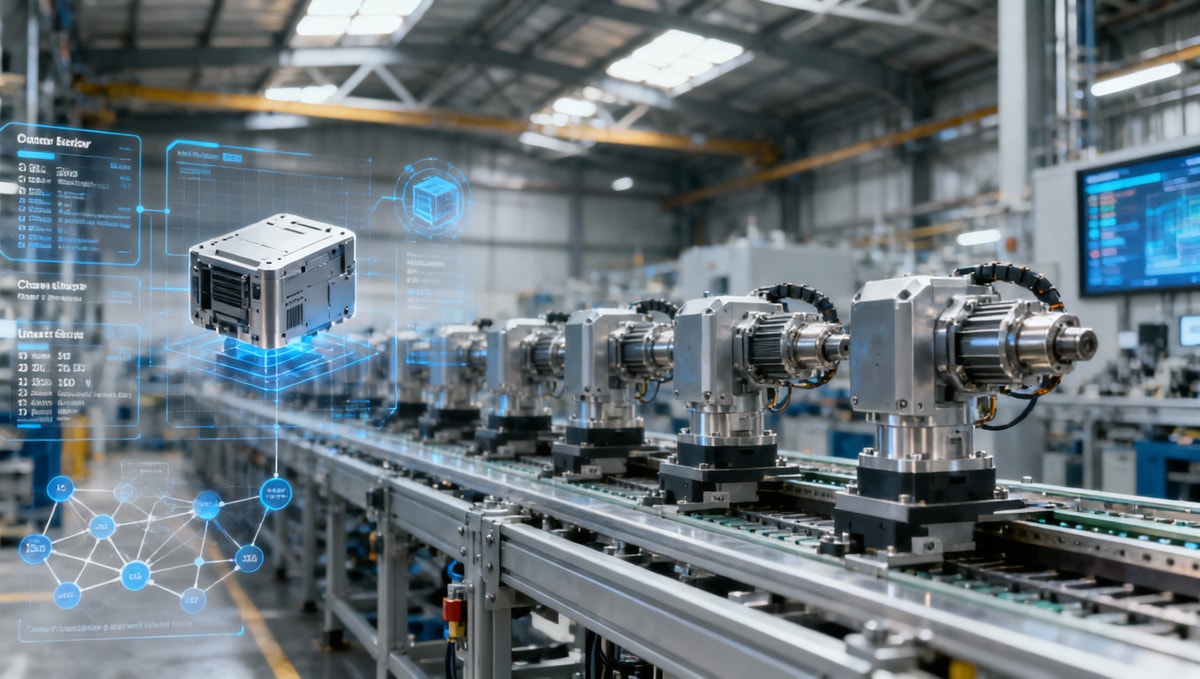

What Delays Smart Factory Solutions After Pilot Success?

Many manufacturers celebrate pilot wins, yet full-scale rollout often stalls when smart factory solutions meet legacy systems, budget cycles, cross-functional resistance, and unclear ROI metrics. For project managers and engineering leads, the real challenge is not proving the concept works, but aligning stakeholders, infrastructure, and execution plans to move from isolated success to enterprise-wide transformation.

Why do smart factory solutions slow down after a successful pilot?

A pilot usually runs inside a controlled zone: one line, one plant, one team, and a limited integration scope. That is exactly why smart factory solutions often look strong in the first 8–12 weeks. The environment is narrow enough to manage manually when exceptions appear, and stakeholders tolerate temporary workarounds because the goal is proof of value, not enterprise stability.

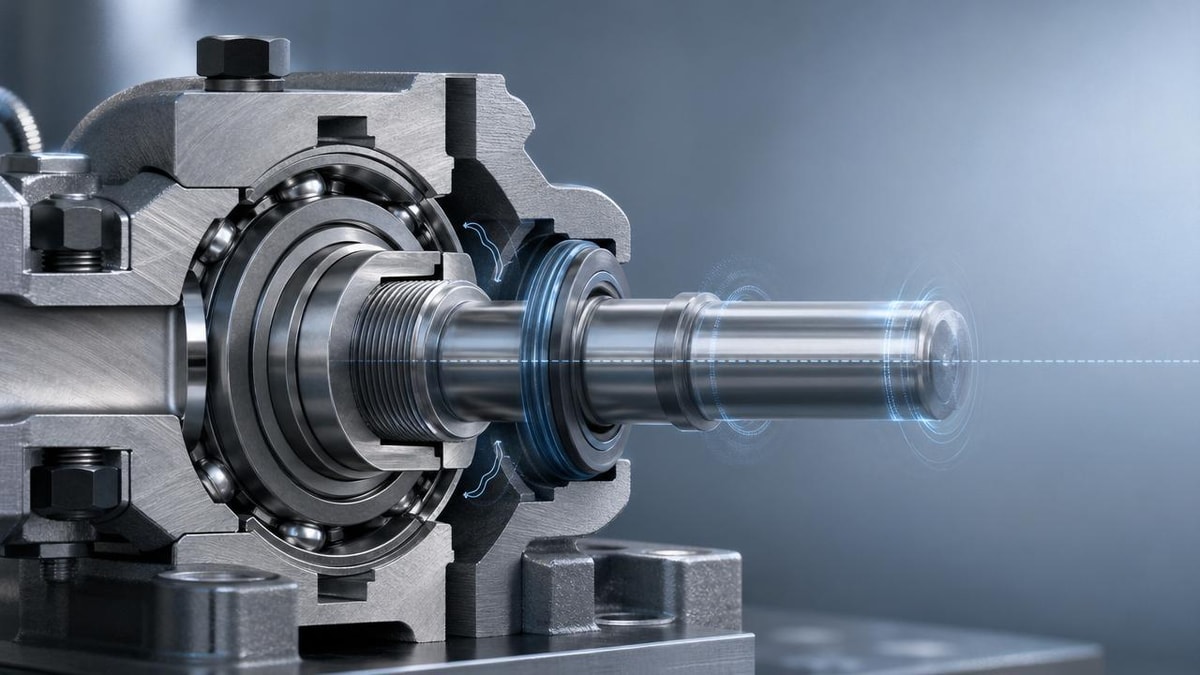

The delay begins when the project moves from demonstration to scale. A rollout may need to connect PLCs, MES, ERP, quality systems, warehouse workflows, and supplier data across 3–5 operational layers. At that point, the pilot’s technical success no longer answers the harder questions: who owns the data model, who funds the next phase, what happens during downtime, and which team accepts process redesign.

For project managers and engineering leads, the problem is rarely a single technology gap. It is a coordination gap between OT, IT, finance, procurement, maintenance, production, and leadership. When one team measures cycle time, another measures compliance risk, and a third focuses on capital expenditure, smart factory solutions can lose momentum even when the pilot KPIs were positive.

In cross-sector manufacturing environments, including electronics, healthcare technology, green energy equipment, and advanced assembly, the same pattern repeats. Pilots are approved as innovation projects, but scale-up requires operating-model change. That transition is where delays accumulate over 2–4 quarters, especially when no phased governance plan exists.

The four hidden shifts from pilot to rollout

- Scope shift: a proof-of-concept may cover one use case, but enterprise rollout often touches 6–10 workflows, from production monitoring to preventive maintenance and traceability.

- Risk shift: temporary manual oversight during a pilot is acceptable, while scaled operations need stable uptime, version control, and support ownership.

- Budget shift: pilot funding may come from innovation or plant-level budgets, while rollout usually competes with annual capex cycles and payback thresholds.

- Decision shift: a plant manager can approve a pilot quickly, but a multi-site deployment often needs procurement, cybersecurity, compliance, and executive sign-off.

This is why pilot success should never be treated as rollout readiness. The right question is not whether smart factory solutions work in principle. The right question is whether the organization is ready to absorb the operational, financial, and governance load that full deployment creates.

Where do rollout projects usually break: systems, people, budget, or metrics?

In most delayed programs, the root cause sits in one of four domains: architecture, change management, commercial planning, or value measurement. These are not isolated issues. They reinforce each other. If data models are unclear, reporting becomes inconsistent. If reporting is inconsistent, leadership questions ROI. Once ROI is questioned, budget approval slows and business teams disengage.

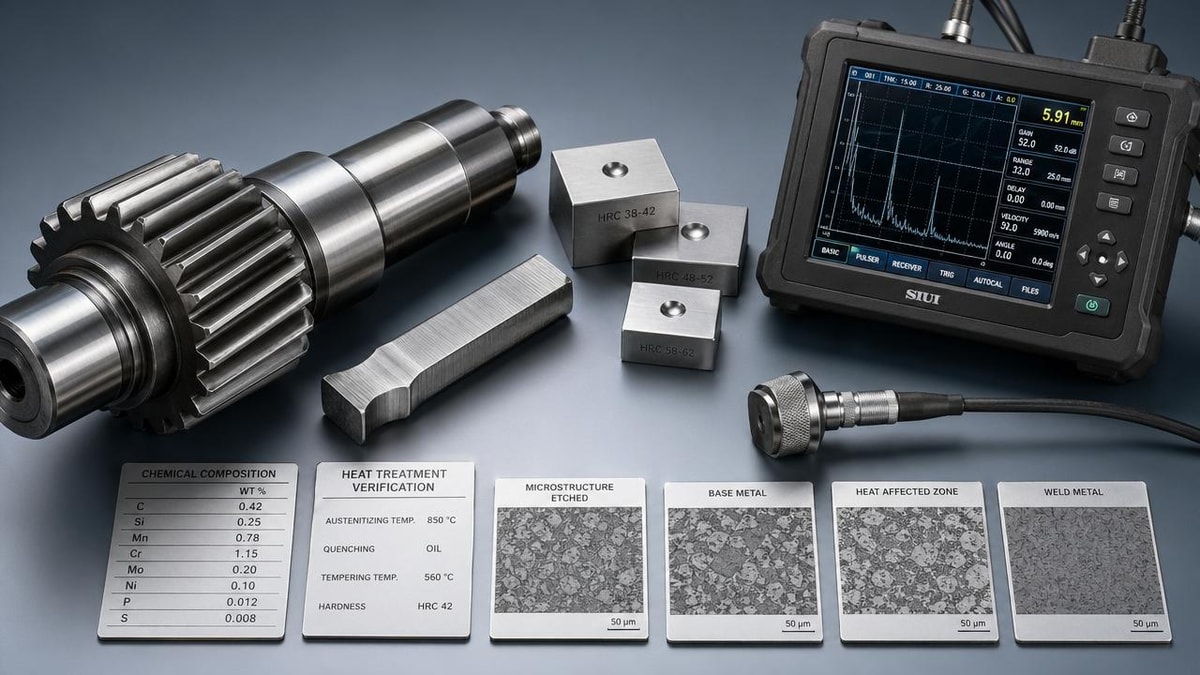

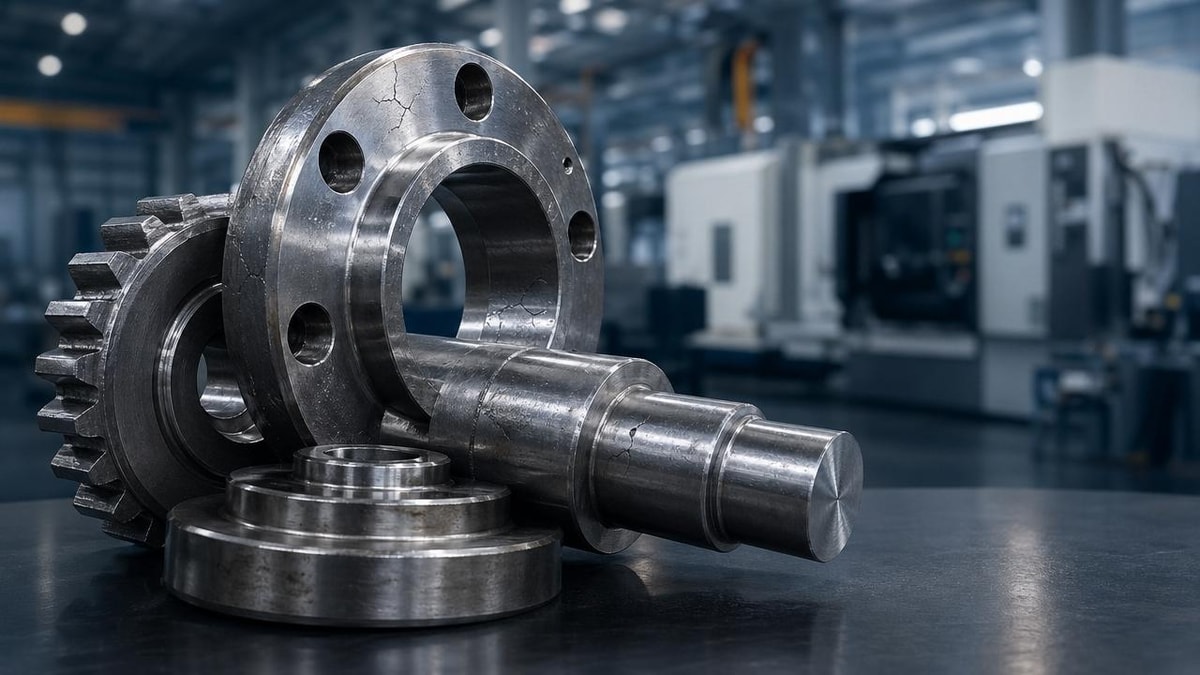

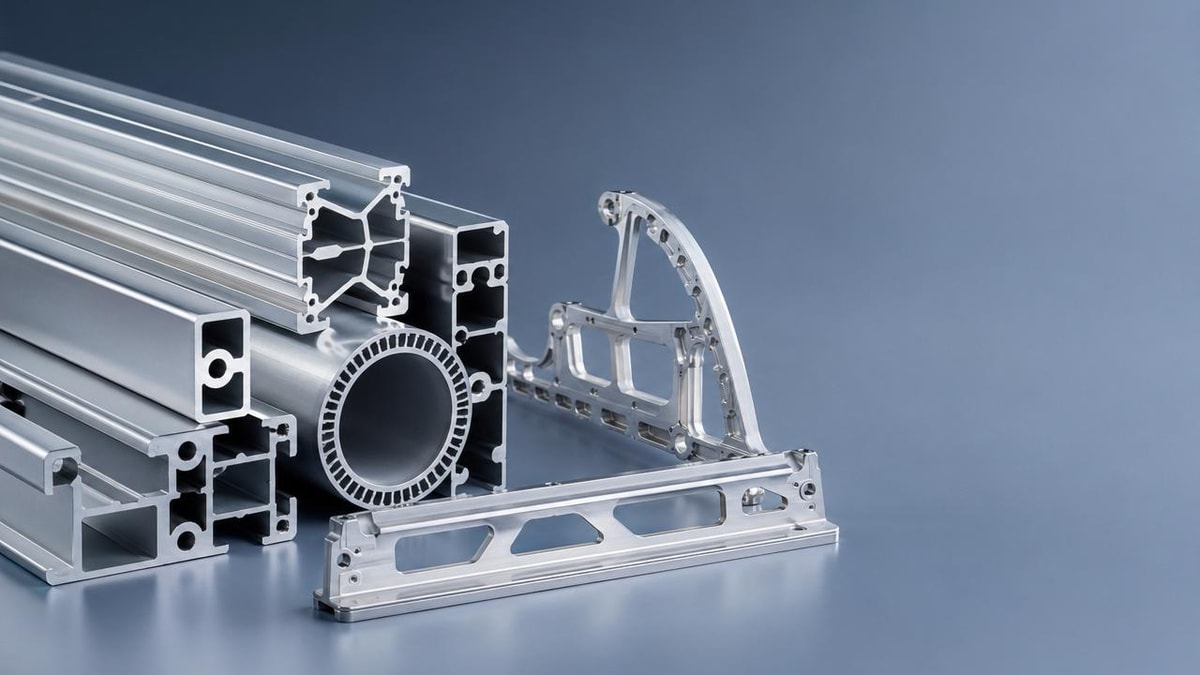

Legacy equipment is the most visible obstacle, but not always the largest one. A machine installed 10–15 years ago can often be connected through gateways or edge devices. The harder issue is usually semantic alignment. Different plants define downtime, scrap, OEE, and work order status differently. Smart factory solutions become difficult to scale when the enterprise has not standardized what success actually means.
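One way to make semantic alignment concrete is a shared mapping from site-local stop codes to enterprise categories. The sketch below is illustrative only: the plant names, stop codes, and category labels are hypothetical and would come from the organization's own KPI dictionary.

```python
# Hypothetical mapping of (site, local stop code) -> shared enterprise category.
# Real codes and categories would come from the agreed KPI dictionary.
STOP_CODE_MAP = {
    ("plant_a", "BRK"): "unplanned_downtime",
    ("plant_a", "CHG"): "changeover",
    ("plant_b", "FAULT"): "unplanned_downtime",
    ("plant_b", "SETUP"): "changeover",
    ("plant_b", "LUNCH"): "planned_stop",
}


def downtime_by_category(events):
    """Sum stop minutes per shared category.

    `events` is an iterable of (site, local_code, minutes) tuples.
    Codes missing from the mapping are reported as "unmapped" so that
    definition gaps stay visible instead of being silently dropped.
    """
    totals = {}
    for site, code, minutes in events:
        category = STOP_CODE_MAP.get((site, code), "unmapped")
        totals[category] = totals.get(category, 0) + minutes
    return totals


events = [
    ("plant_a", "BRK", 12),
    ("plant_b", "FAULT", 8),
    ("plant_b", "SETUP", 20),
    ("plant_b", "XYZ", 5),  # a local code nobody has mapped yet
]
print(downtime_by_category(events))
```

Keeping an explicit "unmapped" bucket is a design choice: it turns undefined local codes into a reviewable number rather than a hidden reporting discrepancy.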

Another common barrier is role ambiguity. OT may own the machine layer, IT may own networks and cybersecurity, and operations may own process outcomes. If ownership is not clarified during the first 30–45 days of scale planning, integration tasks drift. Every unresolved task then becomes a delay multiplier across vendors, internal teams, and site schedules.

The table below helps project leaders identify where smart factory solutions most often stall and what practical response is needed before launch gates are missed.

A useful pattern here is to treat rollout as an operating program, not an IT add-on. Once the project is structured around governance, ownership, and measurable outcomes, delays become visible earlier and are easier to resolve before they affect multiple sites.

What project leaders often underestimate

Data readiness is not the same as data availability

Many plants can access machine data, but that does not mean the data is normalized, time-aligned, or reliable enough for enterprise dashboards. Smart factory solutions depend on naming consistency, timestamp quality, and event logic. If these are weak, scaling creates more noise than visibility.
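A minimal sketch of what a readiness check might look like, assuming readings arrive as (tag, timestamp) pairs. The `site.line.signal` naming convention and the five-minute gap limit are assumptions chosen for illustration, not a standard.

```python
import re
from datetime import datetime, timedelta

# Hypothetical naming convention: site.line.signal, lowercase (e.g. "p1.l1.temp").
TAG_PATTERN = re.compile(r"^[a-z0-9]+\.[a-z0-9]+\.[a-z0-9_]+$")


def readiness_issues(readings, max_gap=timedelta(minutes=5)):
    """Return a list of (issue_kind, tag, timestamp) for suspect readings.

    Checks three things per tag: the name follows the convention,
    timestamps strictly increase, and no gap exceeds `max_gap`.
    """
    issues = []
    last_seen = {}
    for tag, ts in readings:
        if not TAG_PATTERN.match(tag):
            issues.append(("bad_name", tag, ts))
            continue
        prev = last_seen.get(tag)
        if prev is not None:
            if ts <= prev:
                issues.append(("out_of_order", tag, ts))
            elif ts - prev > max_gap:
                issues.append(("gap", tag, ts))
        # Track the latest timestamp seen so far for this tag.
        last_seen[tag] = ts if prev is None else max(prev, ts)
    return issues


t0 = datetime(2024, 1, 1, 8, 0)
sample = [
    ("p1.l1.temp", t0),
    ("p1.l1.temp", t0 + timedelta(minutes=1)),
    ("p1.l1.temp", t0 + timedelta(minutes=10)),  # 9-minute gap
    ("p1.l1.temp", t0 + timedelta(minutes=9)),   # arrives out of order
    ("Line 2 Temp", t0),                         # breaks the naming convention
]
for issue in readiness_issues(sample):
    print(issue)
```

Running a check like this per site before enterprise dashboards are built gives the team a count of naming and timestamp problems to fix, rather than a general impression that the data is "available".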

Stakeholder alignment must happen before procurement finalization

When procurement issues an RFP before OT, IT, quality, and operations agree on architecture and success criteria, vendor comparisons become superficial. The result is a technically acceptable selection that still delays implementation because internal expectations were never reconciled.

How should project managers evaluate smart factory solutions before scaling?

A pilot can justify interest, but scale-up requires a stricter evaluation model. For project managers, the decision framework should cover at least 5 dimensions: interoperability, deployment effort, support structure, security posture, and measurable business impact. If one of these is weak, the rollout timeline can expand from a planned 3 months to 9 months or more.
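The five dimensions can be turned into a simple weighted score with a floor that flags any weak dimension. This is a sketch under stated assumptions: the 1–5 scoring scale, the weights, and the floor of 3 are hypothetical and should be set by the cross-functional team.

```python
DIMENSIONS = (
    "interoperability",
    "deployment_effort",
    "support_structure",
    "security_posture",
    "business_impact",
)


def evaluate(scores, weights, floor=3):
    """Return (weighted_average, weak_dimensions) for one candidate solution.

    `scores` and `weights` are dicts keyed by the names in DIMENSIONS.
    Scores are assumed to be on a 1-5 scale; any dimension scoring
    below `floor` is listed as weak regardless of the overall average.
    """
    missing = [d for d in DIMENSIONS if d not in scores or d not in weights]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    total_weight = sum(weights[d] for d in DIMENSIONS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted = sum(scores[d] * weights[d] for d in DIMENSIONS) / total_weight
    weak = [d for d in DIMENSIONS if scores[d] < floor]
    return round(weighted, 2), weak


# Illustrative values only.
scores = {
    "interoperability": 4,
    "deployment_effort": 3,
    "support_structure": 2,
    "security_posture": 5,
    "business_impact": 4,
}
weights = {d: 1 for d in DIMENSIONS}
print(evaluate(scores, weights))
```

Reporting weak dimensions separately from the average matters because a high overall score can hide the single gap that stretches the rollout timeline.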

This matters across the broader industrial landscape because smart factory solutions are no longer used only for line monitoring. Buyers may expect traceability in healthcare technology, energy consumption control in green energy manufacturing, quality analytics in smart electronics, and multi-site visibility in operations linked to supply chain SaaS platforms. A platform that looks strong in one use case may be weak in another.

Before scaling, teams should convert technical discussions into procurement-grade checkpoints. That means documenting protocol support, integration dependencies, validation steps, training needs, and service response assumptions. It also means clarifying whether the solution is intended for one facility, a regional cluster, or a global template.

The matrix below can be used during vendor shortlisting, internal governance reviews, or cross-functional design workshops for smart factory solutions.

A strong evaluation framework reduces two common errors: buying a platform that scales technically but not operationally, or rejecting a viable platform because internal requirements were never translated into objective checkpoints.

A practical selection checklist for engineering leads

- Confirm which assets must connect in phase 1, phase 2, and phase 3. Do not evaluate vendors against full enterprise scope if the first deployment only needs 20–30 critical assets.

- Map mandatory systems integration: ERP, MES, CMMS, QMS, WMS, or historian. Each additional dependency affects timeline and validation effort.

- Set a minimum reporting baseline of 3–5 KPIs tied to financial or operational action, not just dashboard visibility.

- Estimate internal support hours after go-live. Many teams plan for implementation effort but not for the first 4–8 weeks of stabilization.

This is where TradeNexus Pro adds value. TNP helps decision-makers compare technology maturity, integration fit, supply chain implications, and market readiness across sectors, which is especially useful when a smart factory initiative spans multiple geographies, suppliers, and internal stakeholders.

What rollout model reduces delay, budget overruns, and stakeholder resistance?

The most reliable path is phased industrialization, not instant scale. Smart factory solutions move faster when organizations use a 3-stage model: standardize, replicate, then optimize. This creates governance gates and lowers risk without freezing progress. It also gives project managers a clearer structure for procurement, change management, and site-level accountability.

In stage 1, standardization, the team defines the reference architecture, KPI dictionary, cybersecurity review path, and implementation playbook. This often takes 4–8 weeks after the pilot, depending on the number of systems and plants involved. The output should be more than a slide deck. It should include role ownership, integration assumptions, and escalation routes.

In stage 2, replication, the solution is rolled out to 2–3 additional lines or one more facility. The purpose is not just expansion. It is controlled variation. If the solution works in a different product family, shift pattern, or maintenance environment, the organization learns what must remain standard and what can be localized.

In stage 3, optimization, the enterprise begins using smart factory solutions for broader outcomes such as energy efficiency, predictive maintenance, traceability, labor balancing, or supplier collaboration. By this point, the project has moved beyond software deployment and into operating performance management.

Implementation steps that prevent rollout drift

- Create a rollout charter with named owners from OT, IT, operations, quality, and finance before contract finalization.

- Use one decision log for scope changes, interface exceptions, and site-specific constraints. This avoids repeated debates across plants.

- Set acceptance criteria for each deployment wave, such as stable data capture over 2 consecutive weeks, user adoption thresholds, and documented support handoff.

- Plan training in layers: operators, supervisors, engineers, and support teams need different depth and timing.
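The acceptance criteria in the list above can be expressed as an explicit gate check for each deployment wave. The thresholds below (98% data capture, 80% user adoption) are hypothetical placeholders; only the two-consecutive-week window comes from the criteria described here.

```python
def wave_accepted(
    weekly_capture_rates,
    adoption_rate,
    handoff_documented,
    min_capture=0.98,   # hypothetical threshold
    min_adoption=0.80,  # hypothetical threshold
    stable_weeks=2,
):
    """Return True only if all three acceptance criteria are met.

    `weekly_capture_rates` is a chronological list of weekly data-capture
    ratios (0.0-1.0). The most recent `stable_weeks` entries must all
    meet `min_capture`, so one good week is not enough.
    """
    if stable_weeks < 1:
        raise ValueError("stable_weeks must be at least 1")
    recent = weekly_capture_rates[-stable_weeks:]
    stable = len(recent) == stable_weeks and all(r >= min_capture for r in recent)
    return bool(stable and adoption_rate >= min_adoption and handoff_documented)


# Illustrative values only.
print(wave_accepted([0.91, 0.99, 0.985], adoption_rate=0.85, handoff_documented=True))
print(wave_accepted([0.99, 0.97], adoption_rate=0.90, handoff_documented=True))
```

Writing the gate as a function forces the team to agree on numeric thresholds before the wave starts, which is usually where acceptance debates would otherwise resurface.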

Why budget models need redesign

Many delayed programs are approved with incomplete cost assumptions. Software is visible; engineering time is not. Connectivity hardware, network segmentation, validation, downtime coordination, and support coverage can materially change the cost profile. A 12-month total-cost view that includes these items is usually more realistic than a single capex request tied only to license cost.
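A short worked example shows how a 12-month view changes the picture. Every figure and category name below is an illustrative placeholder, not a benchmark; the point is only the structure of the calculation.

```python
def twelve_month_cost(one_time, monthly, months=12):
    """Total cost over `months`: one-time items plus recurring items."""
    return sum(one_time.values()) + months * sum(monthly.values())


def license_share(one_time, monthly, license_key="software_license", months=12):
    """Fraction of the total cost that the license line represents."""
    total = twelve_month_cost(one_time, monthly, months)
    license_cost = one_time.get(license_key, 0) + months * monthly.get(license_key, 0)
    return license_cost / total if total else 0.0


# Placeholder figures for illustration only.
one_time = {
    "connectivity_hardware": 40_000,
    "network_segmentation": 15_000,
    "validation": 10_000,
}
monthly = {
    "software_license": 5_000,
    "engineering_time": 3_000,
    "support_coverage": 2_000,
}

total = twelve_month_cost(one_time, monthly)
share = license_share(one_time, monthly)
print(f"12-month total: {total}, license share: {share:.0%}")
```

With these placeholder inputs the license is roughly a third of the 12-month total, which illustrates why a request sized on license cost alone tends to be revisited mid-rollout.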

Why governance must be monthly, not ad hoc

Projects that review progress only at major milestones often discover issues too late. A monthly cadence with 6 core review items—scope, integration, user adoption, cybersecurity, budget, and KPI trend—helps teams correct execution while the rollout is still recoverable.

Common misconceptions, compliance considerations, and what to ask before you commit

One misconception is that if the dashboard is live, the project is successful. In reality, smart factory solutions create value only when data changes decisions or actions. If supervisors still rely on manual logs, if maintenance schedules remain reactive, or if quality interventions do not improve, the rollout is not yet mature.

A second misconception is that cloud versus on-premise is the main decision. It matters, but often less than access policy, data synchronization, latency tolerance, and support responsibility. In regulated or sensitive environments, teams may also need documented change control, auditability, or validation practices depending on product type and geography.

For cross-industry projects, compliance expectations can vary. Healthcare technology production may require stronger traceability discipline. Electronics manufacturing may emphasize process repeatability and test data linkage. Green energy equipment manufacturing may prioritize supplier visibility and lifecycle performance. Smart factory solutions should therefore be evaluated against the plant’s real compliance and operational context, not generic marketing claims.

TradeNexus Pro supports this decision process by connecting procurement leaders, engineering teams, and industrial strategists with deeper market intelligence. Instead of treating rollout obstacles as isolated technical incidents, TNP helps organizations interpret them through the wider lens of supplier capability, sector trends, deployment readiness, and long-term sourcing confidence.

FAQ for project managers evaluating smart factory solutions

How long does a post-pilot rollout usually take?

For a controlled expansion to one additional line or area, 6–12 weeks is common if integration scope is moderate and internal ownership is clear. A multi-site program can take 2–4 quarters, especially when ERP, MES, quality systems, and cybersecurity reviews must be aligned across regions.

Which KPIs matter most when scaling smart factory solutions?

Start with 3–5 KPIs that link to action: unplanned downtime, first-pass yield, scrap rate, changeover duration, and maintenance response time are common examples. The key is consistency. If sites define these differently, leadership reporting cannot be compared across plants and confidence in the rollout declines.

What should procurement verify before signing?

Verify integration scope, support boundaries, training coverage, upgrade responsibilities, and the commercial treatment of new sites, assets, or users. Also confirm what is excluded. Many rollout delays come from assumptions around device commissioning, middleware work, or validation tasks that were never priced or owned.

Can legacy facilities still use smart factory solutions effectively?

Yes, often they can, but success depends on selective scope and realistic architecture. Older facilities may require edge devices, phased connectivity, or manual-to-digital transition steps. The goal is not full digital perfection on day one. It is reliable visibility and actionable control where the business case is strongest.

Why work with TradeNexus Pro when planning or scaling smart factory solutions?

When projects move beyond pilot success, decision quality matters as much as technology quality. TradeNexus Pro helps project managers, engineering leads, procurement teams, and enterprise stakeholders assess smart factory solutions with deeper sector context across advanced manufacturing, green energy, smart electronics, healthcare technology, and supply chain SaaS.

That means you can use TNP to compare integration readiness, understand supplier positioning, evaluate operational fit, and sharpen rollout assumptions before internal approvals stall. For organizations managing international sourcing, multi-plant deployments, or technically complex vendor shortlists, this intelligence reduces uncertainty at the stage where most delays usually begin.

If your team is evaluating smart factory solutions after a successful pilot, you can engage TradeNexus Pro around specific next-step needs: architecture and use-case benchmarking, vendor screening, deployment sequencing, expected delivery windows, compliance considerations, service model questions, and cross-functional decision support.

Contact TradeNexus Pro to discuss rollout planning, solution selection, integration risk review, implementation phasing, supplier comparison, or budget-scoping assumptions. If you need support clarifying parameters, validating fit across sites, preparing for internal procurement review, or structuring a more credible enterprise business case, TNP provides a stronger starting point than generic market overviews.
