Automated Optical Inspection or Manual Inspection for Small Batches

For manufacturers handling limited production runs, choosing between automated optical inspection and manual inspection can directly affect cost, speed, and quality consistency. Small batches often demand flexibility, but they also leave little room for error. This article explores how enterprise decision-makers can evaluate the right inspection strategy based on product complexity, labor efficiency, defect detection accuracy, and long-term operational value.

In sectors such as advanced manufacturing, smart electronics, healthcare technology, and precision assemblies, inspection is no longer a back-end checkpoint. It is a strategic control point that shapes scrap rates, customer complaints, delivery performance, and compliance outcomes. For decision-makers managing low-volume, high-mix production, the question is not simply whether automated optical inspection is better than manual inspection, but when each approach creates measurable business value.

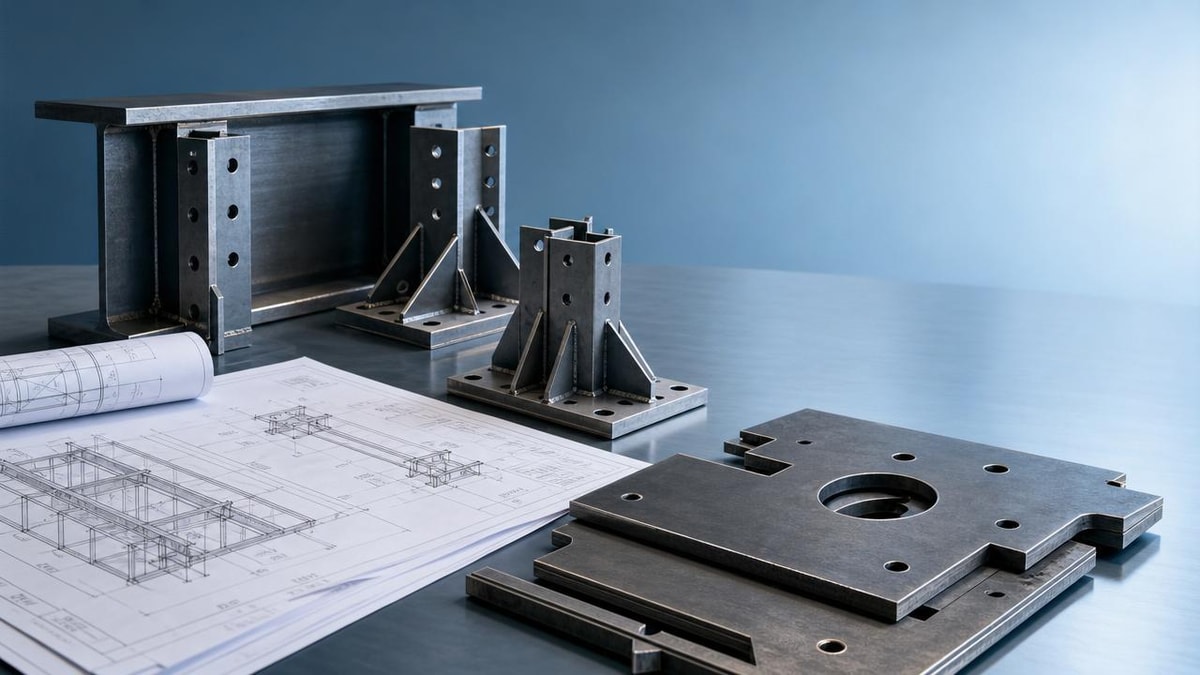

The right choice depends on at least 4 variables: defect type, setup frequency, labor skill availability, and the cost of a missed nonconformity. A small batch of 50 medical device housings has a different inspection profile than 500 PCB panels or 200 customized energy control boards. Understanding those differences helps procurement leaders and operations managers avoid overinvestment on one side and quality risk on the other.

Why Small-Batch Inspection Requires a Different Decision Model

Small-batch production typically involves shorter runs, more product changeovers, and tighter engineering feedback loops. In many factories, a “small batch” may range from 20 to 500 units, depending on the product category. That scale changes the economics of inspection. A system that performs well in runs of 10,000 units may deliver weak returns when setup time consumes 20–30% of available production hours.

Manual inspection remains common because it adapts quickly. Trained inspectors can review cosmetic defects, labeling errors, connector orientation, or assembly completeness without waiting for program creation. For pilot runs, engineering validation lots, or custom orders, this flexibility matters. However, manual inspection also introduces operator fatigue, variable judgment, and inconsistent defect capture after 2–3 hours of repetitive work.

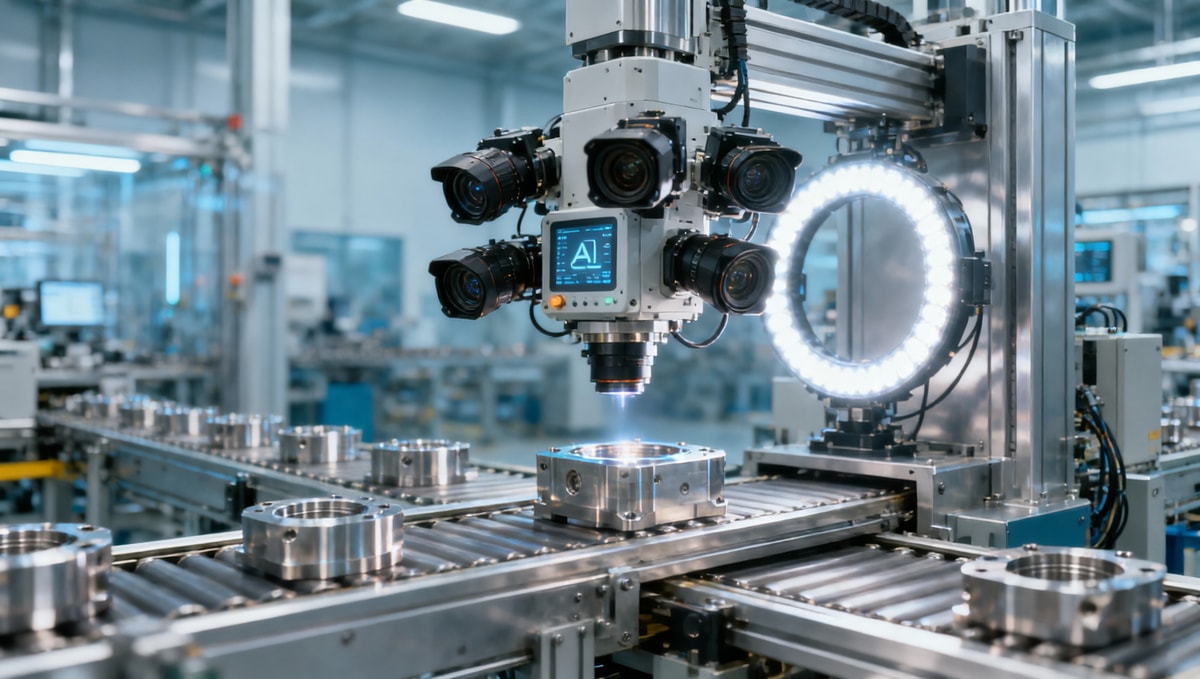

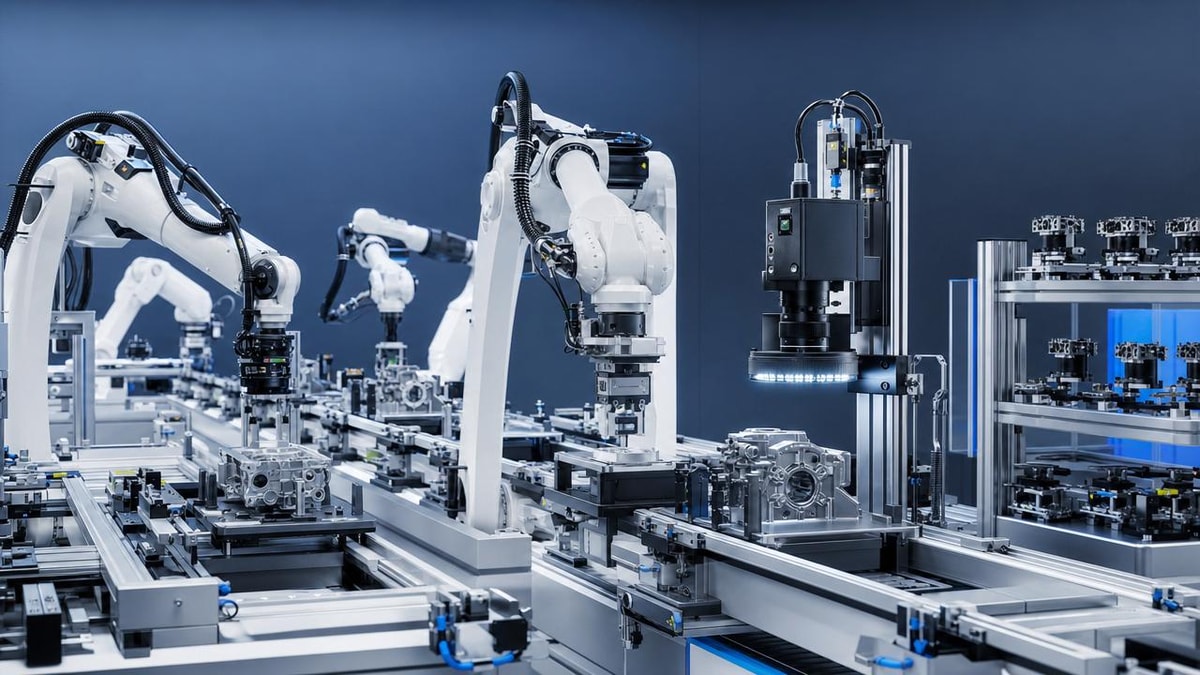

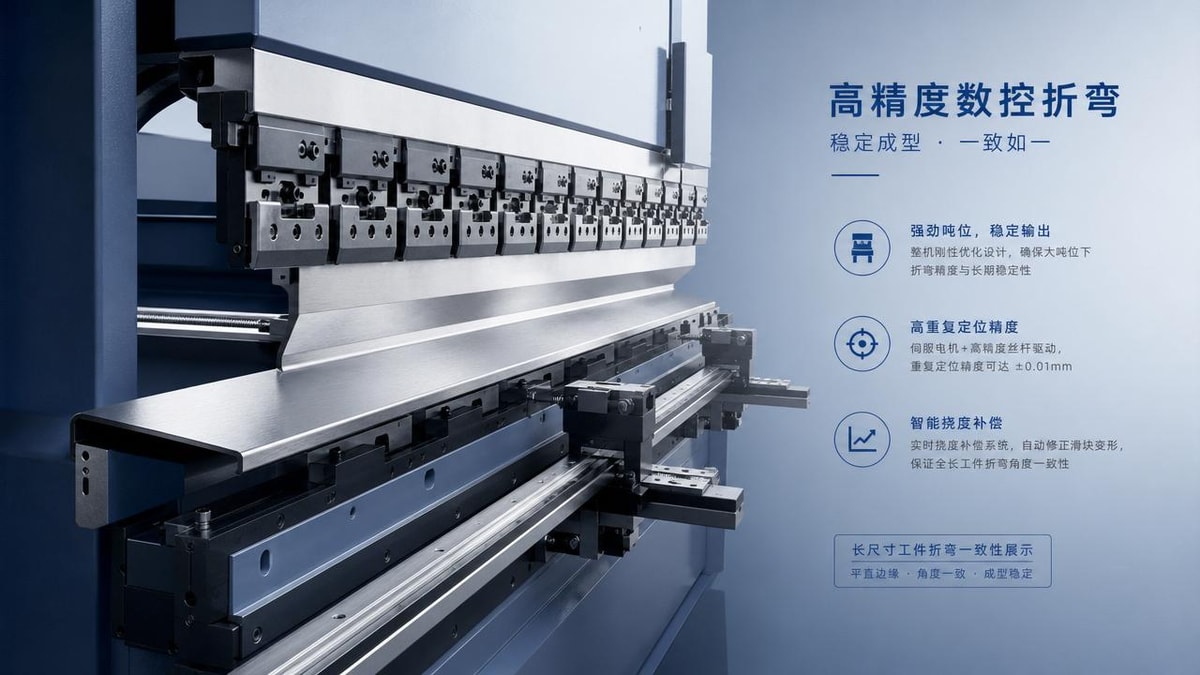

Automated optical inspection, by contrast, offers repeatability and traceability. Once parameters are set, the system can inspect solder joints, component placement, polarity, missing parts, dimensional features, and surface anomalies with a stable rule set. In high-mix operations, though, programming time, fixture requirements, and image library maintenance can erode the expected efficiency gains unless there is enough part-family similarity across batches.

For enterprise buyers, the practical decision starts with total process impact rather than equipment price alone. A lower-cost manual process can become expensive when rework exceeds 3–5%, customer returns rise, or premium labor must be allocated to routine visual checks. Likewise, an automated optical inspection platform can be difficult to justify if each new SKU requires hours of recipe tuning for lots under 100 units.

Key operational pressures in low-volume manufacturing

- Frequent product changeovers, often 3–10 times per week in contract manufacturing environments.

- Limited tolerance for defects because replacement production may take 7–15 days.

- Higher value per unit, especially in medical, industrial control, or customized electronics assemblies.

- Engineering changes that can make inspection criteria obsolete within 1 or 2 production cycles.

Automated Optical Inspection vs Manual Inspection: Core Trade-Offs

Before selecting a method, decision-makers should compare the two approaches across cycle time, repeatability, detection capability, and operating cost. Automated optical inspection often performs best when defect patterns are visible, rule-based, and recurring. Manual inspection performs best when product variation is high, acceptance criteria are still evolving, or the lot size does not justify digital setup and validation effort.

In practical terms, AOI is strong at checking known defect classes at a stable pace. Manual inspection is strong at contextual judgment, especially when defects are subtle, unusual, or mechanically complex. The difference is especially important in mixed-sector operations where one line may process PCB assemblies, machined subassemblies, and labeled packaging components within the same 5-day production window.

The table below highlights where each method typically creates the most value in small-batch environments.

The key conclusion is that automated optical inspection is not automatically the better option for every small batch. It becomes attractive when the same visual rules apply across multiple lots, when defect costs are high, or when customer audits demand image-based traceability. Manual inspection remains competitive where lot sizes are small, product revisions are frequent, and defect classification still requires human interpretation.

When automated optical inspection usually gains an edge

AOI tends to deliver stronger returns in 3 situations. First, when a plant runs several related SKUs with common geometries or component libraries. Second, when inspection records must be stored for 12–36 months for customer or regulatory review. Third, when defects are repetitive enough that false-call rates can be reduced through ongoing tuning rather than constant human judgment.

Where manual inspection still outperforms automation

Manual inspection remains effective for startup production, customized industrial assemblies, and very low annual volumes. If a line processes fewer than 1,000 units per SKU per year and engineering changes happen every quarter, maintaining AOI programs may create more indirect work than expected. In these cases, standard work instructions, error catalogues, and layered quality review can often improve manual results without large capital spend.

Cost, Labor, and Throughput: What Decision-Makers Should Measure

A common mistake is to compare inspection methods using direct labor alone. The stronger model includes at least 5 cost layers: setup time, inspection cycle time, false rejects, escaped defects, and engineering maintenance. In low-volume operations, these hidden variables often determine whether automated optical inspection improves margins or simply shifts work from inspectors to programmers and quality engineers.

For example, manual inspection may require 1 inspector for every 150–300 units per shift, depending on complexity. AOI can inspect many more units per hour once it is ready, but recipe creation and first-article validation may consume 1–4 hours for a new build. If the lot is only 80 units, the time savings may never materialize. If the same part returns 10 times per quarter, the investment profile changes significantly.
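The break-even logic in the example above can be sketched in a few lines. This is a minimal illustration, not a costing tool. The per-unit times (120 seconds manual, 20 seconds AOI) and the 2.5-hour setup are assumed values chosen to sit within the ranges mentioned in the text, and the function names are hypothetical.

```python
def break_even_units(setup_hours, manual_sec_per_unit, aoi_sec_per_unit):
    """Units needed before per-unit time savings repay the one-time setup."""
    saving_sec = manual_sec_per_unit - aoi_sec_per_unit
    if saving_sec <= 0:
        return float("inf")  # AOI never pays back on inspection time alone
    return setup_hours * 3600 / saving_sec


def net_hours_saved(units, setup_hours, manual_sec_per_unit, aoi_sec_per_unit):
    """Inspection hours saved for a unit count (negative means hours lost)."""
    saving_sec = manual_sec_per_unit - aoi_sec_per_unit
    return units * saving_sec / 3600 - setup_hours


# Illustrative assumptions, not measured data.
SETUP_HOURS = 2.5   # recipe creation plus first-article validation
MANUAL_SEC = 120    # roughly 240 units per 8-hour shift for one inspector
AOI_SEC = 20        # assumed AOI cycle time per unit

single_lot = net_hours_saved(80, SETUP_HOURS, MANUAL_SEC, AOI_SEC)
# Ten builds of 80 units, assuming the recipe is reused with no further setup.
repeat_builds = net_hours_saved(10 * 80, SETUP_HOURS, MANUAL_SEC, AOI_SEC)

print("Break-even units:", break_even_units(SETUP_HOURS, MANUAL_SEC, AOI_SEC))
print("Net hours, one 80-unit lot:", round(single_lot, 2))
print("Net hours, ten 80-unit builds:", round(repeat_builds, 2))
```

Under these assumed numbers the break-even point is 90 units, so a single 80-unit lot loses time while ten repeat builds recover the setup many times over. Real figures should come from a measured baseline, and any re-validation needed between builds would raise the break-even point.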

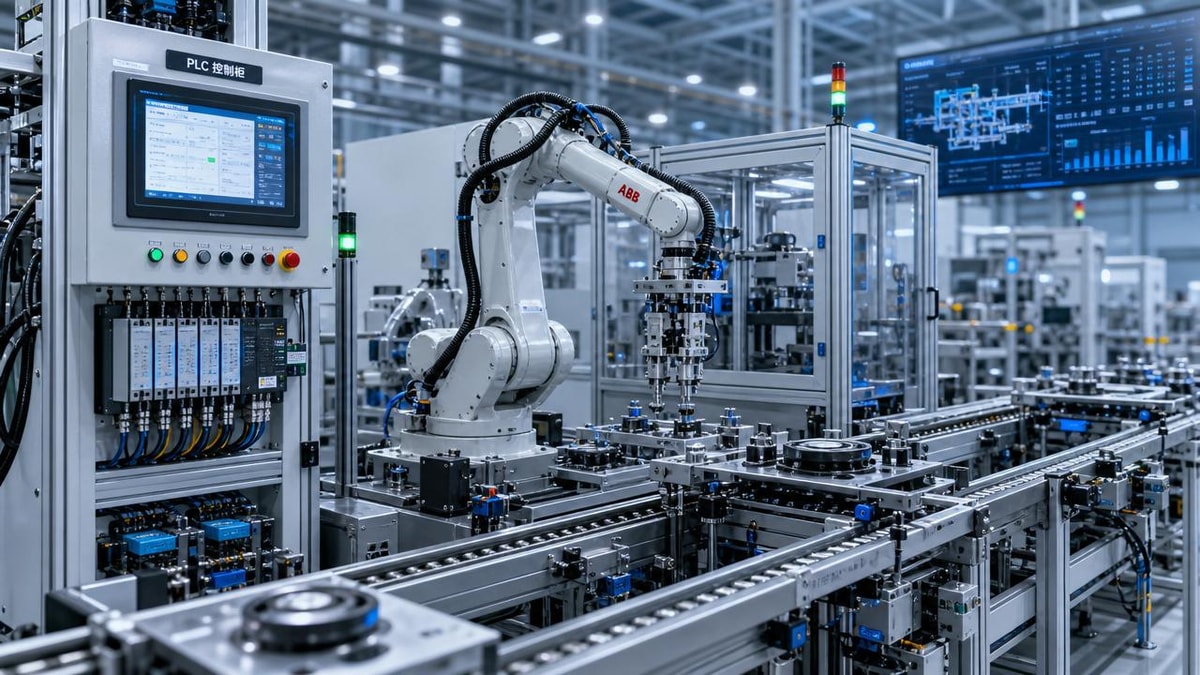

Labor availability is another leadership-level concern. Many manufacturers face a shortage of experienced inspectors, and retraining cycles can take 4–8 weeks before visual standards are applied consistently. Automated optical inspection can reduce dependency on a small pool of senior personnel, but only if there is enough internal capability to manage lighting conditions, image thresholds, false-call tuning, and equipment upkeep.

A practical decision matrix for cost and efficiency

The following table can help procurement and operations teams structure the business case before approving capital equipment or redesigning labor allocation.

This matrix does not eliminate the need for a pilot, but it narrows the decision. In many small-batch factories, the answer is not a pure either-or model. A hybrid process often delivers the best economics: automated optical inspection for known, repetitive checks and manual inspection for first articles, final workmanship review, or exception handling.

Metrics worth reviewing over a 90-day trial

- False reject rate by SKU and by operator shift.

- Average setup time for new programs or revised work instructions.

- Defect escape rate per 1,000 units shipped.

- Rework hours caused by inspection misses or overcalls.

- Customer complaint frequency over 8–12 weeks after shipment.
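Two of the metrics above, false reject rate and defect escapes per 1,000 units shipped, can be tracked with a simple per-SKU roll-up. The sketch below assumes lot-level records are already being collected. The `LotRecord` fields and the sample numbers are hypothetical and stand in for whatever a plant's quality system actually exports.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class LotRecord:
    sku: str
    inspected: int       # units inspected in the lot
    false_rejects: int   # good units wrongly rejected (overcalls)
    shipped: int         # units shipped from the lot
    escapes: int         # defects found after shipment


def summarize_by_sku(records):
    """Aggregate lot records into per-SKU trial metrics."""
    totals = defaultdict(
        lambda: {"inspected": 0, "false_rejects": 0, "shipped": 0, "escapes": 0}
    )
    for r in records:
        t = totals[r.sku]
        t["inspected"] += r.inspected
        t["false_rejects"] += r.false_rejects
        t["shipped"] += r.shipped
        t["escapes"] += r.escapes

    summary = {}
    for sku, t in totals.items():
        summary[sku] = {
            # None signals "no data yet" rather than a misleading zero.
            "false_reject_rate": (
                t["false_rejects"] / t["inspected"] if t["inspected"] else None
            ),
            "escapes_per_1000": (
                1000 * t["escapes"] / t["shipped"] if t["shipped"] else None
            ),
        }
    return summary


# Hypothetical trial data for illustration only.
trial = [
    LotRecord("CTRL-BOARD-A", inspected=100, false_rejects=4, shipped=95, escapes=1),
    LotRecord("CTRL-BOARD-A", inspected=100, false_rejects=2, shipped=105, escapes=1),
    LotRecord("HOUSING-B", inspected=50, false_rejects=0, shipped=50, escapes=0),
]

for sku, metrics in summarize_by_sku(trial).items():
    print(sku, metrics)
```

Splitting the same roll-up by operator shift or by inspection method (manual, AOI, hybrid) would only require adding that field to the record and to the grouping key.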

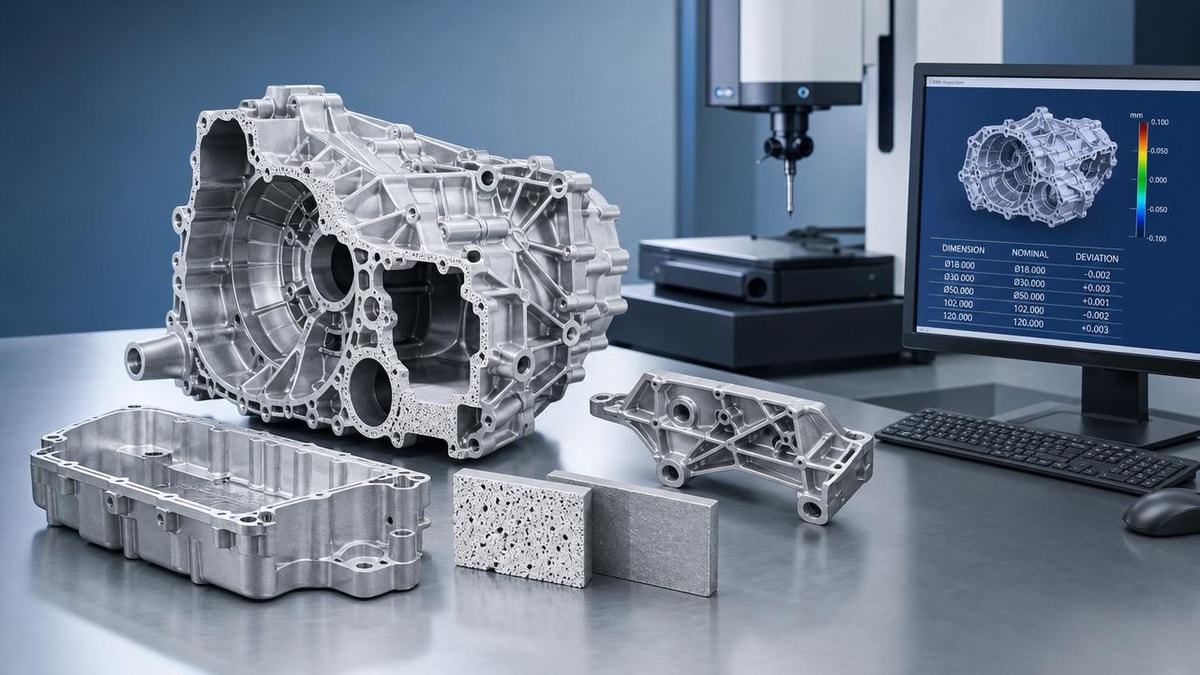

How to Match Inspection Strategy to Product Complexity and Risk

Product complexity should drive inspection architecture. A simple branded enclosure with low cosmetic sensitivity does not need the same method as a dense PCB assembly, a battery management board, or a healthcare sensing module. The more the product depends on fine-pitch placement, orientation integrity, solder quality visibility, or label traceability, the more attractive automated optical inspection becomes, even in modest quantities.

Risk level is equally important. In healthcare technology, industrial safety controls, and energy management devices, a single escaped defect can trigger delayed installation, expensive field service, or contractual penalties. In such cases, inspection decisions should be tied to the consequence of failure, not just unit count. A lot size of 60 can still justify automation if each unit supports a critical application and post-delivery correction is difficult.

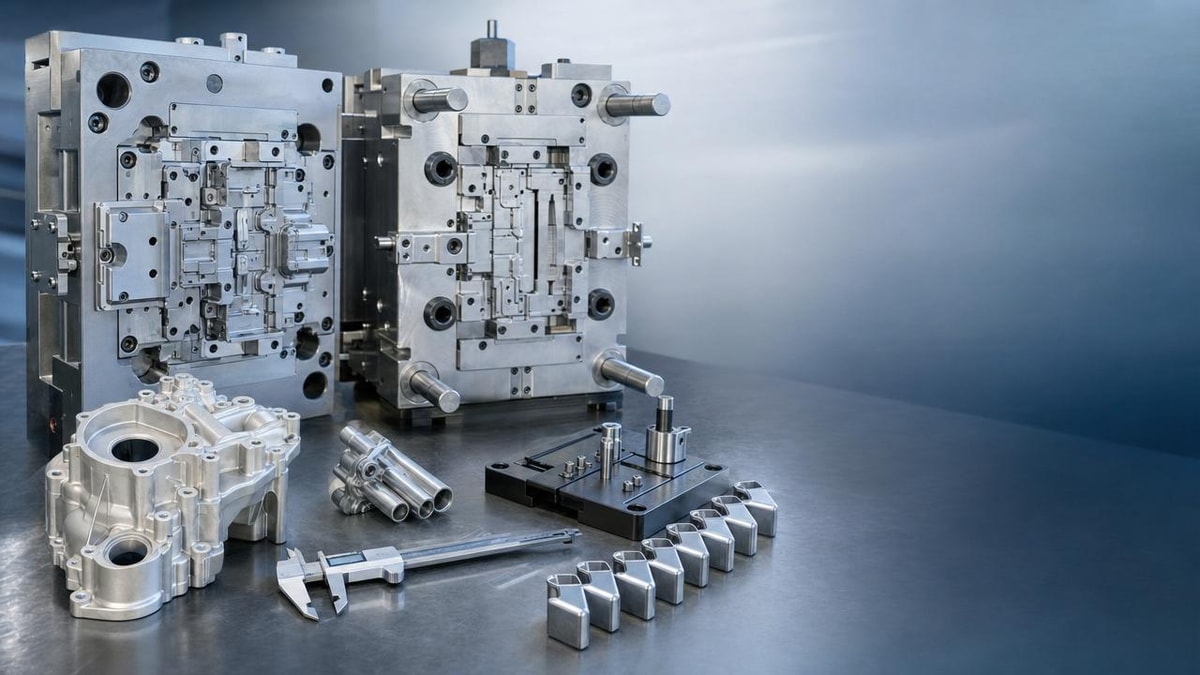

At the same time, not all defects are machine-friendly. Reflection, variable surface finishes, transparent materials, hand-applied adhesives, or non-standard cable routing can reduce AOI reliability unless the station is carefully engineered. Decision-makers should therefore separate “visible and rule-based” defects from “contextual and judgment-based” defects before selecting equipment or staffing plans.

Three tiers of inspection suitability

A useful framework is to classify products into 3 tiers. Tier 1 includes stable, repeatable visual checks such as polarity, presence, and alignment. Tier 2 includes mixed cases where AOI can screen most features, but human review is still needed for 10–20% of exceptions. Tier 3 includes highly customized or visually inconsistent products where manual inspection remains the lead method.

Questions to ask before choosing AOI for small batches

- Can at least 70–80% of expected defects be described in objective visual rules?

- Will the same inspection recipe be reused across future batches within 3–6 months?

- Are lighting conditions, part orientation, and fixturing stable enough for repeatable imaging?

- Does the organization have engineering capacity for recipe maintenance and validation?

If the answer is “no” to most of these questions, manual inspection or a staged hybrid model is usually safer. If the answer is “yes” to at least 3 out of 4, automated optical inspection deserves serious consideration, especially where quality records, labor efficiency, and customer consistency are central to commercial performance.
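The four questions and the 3-out-of-4 rule can be written down as a small screening function. This is a sketch under stated assumptions: the 70% threshold uses the lower end of the 70–80% range above, the class and function names are hypothetical, and the "borderline" outcome for exactly two "yes" answers is an added assumption because the text does not cover that case.

```python
from dataclasses import dataclass


@dataclass
class AoiReadiness:
    rule_based_defect_share: float      # fraction of expected defects describable by objective visual rules
    recipe_reused_within_6_months: bool
    imaging_conditions_stable: bool     # lighting, part orientation, fixturing
    engineering_capacity_available: bool


def recommend(readiness, rule_share_threshold=0.70):
    """Turn the four screening questions into a coarse recommendation."""
    answers = [
        readiness.rule_based_defect_share >= rule_share_threshold,
        readiness.recipe_reused_within_6_months,
        readiness.imaging_conditions_stable,
        readiness.engineering_capacity_available,
    ]
    yes_count = sum(answers)
    if yes_count >= 3:
        return "consider AOI"
    if yes_count <= 1:
        return "manual or staged hybrid"
    # Exactly two "yes" answers: not addressed in the text, treated here as a pilot case.
    return "borderline: pilot before deciding"


# Hypothetical product family for illustration.
example = AoiReadiness(
    rule_based_defect_share=0.75,
    recipe_reused_within_6_months=True,
    imaging_conditions_stable=True,
    engineering_capacity_available=False,
)
print(recommend(example))
```

A screen like this is only a first filter. Consequence of failure, discussed earlier in this section, can justify overriding the result in either direction.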

Implementation Models, Common Mistakes, and a Smarter Hybrid Approach

The most successful manufacturers do not treat inspection as a binary choice. They build layered control strategies. A typical model uses manual review during new product introduction, first-article approval, and process change events, then shifts repetitive checks into automated optical inspection as the product stabilizes. This phased approach reduces investment risk while preserving flexibility during the first 30–90 days of a program.

One common mistake is buying AOI to solve a process discipline problem. If upstream assembly variation is high, board support is inconsistent, or work instructions change without version control, the system may generate excessive false calls. Another mistake is relying entirely on manual inspection for mature products with repeatable defect patterns, where labor hours continue rising while consistency declines over time.

A better implementation plan starts with defect mapping. Identify the top 5–10 recurring defects, estimate their escape cost, and determine which can be reliably seen by camera-based inspection. Then run a pilot on a representative product family rather than the most difficult or the simplest SKU. This produces decision-quality data within 6–8 weeks without forcing a full-line conversion.
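The defect-mapping step can be sketched as a ranking exercise. The example below assumes each defect has an occurrence count for the review period, an estimated cost per escape, and a yes/no judgment on camera visibility. Ranking by occurrences multiplied by cost per escape is an assumed weighting chosen for simplicity, and all names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Defect:
    name: str
    occurrences: int         # count over the review period
    cost_per_escape: float   # estimated cost if the defect reaches the customer
    camera_visible: bool     # can camera-based inspection reliably see it?


def map_defects(defects, top_n=10):
    """Rank defects by potential exposure and split the top ones by method."""
    ranked = sorted(
        defects,
        key=lambda d: d.occurrences * d.cost_per_escape,
        reverse=True,
    )[:top_n]
    aoi_candidates = [d.name for d in ranked if d.camera_visible]
    manual_checks = [d.name for d in ranked if not d.camera_visible]
    return aoi_candidates, manual_checks


# Hypothetical defect log for illustration only.
defect_log = [
    Defect("missing component", occurrences=12, cost_per_escape=40.0, camera_visible=True),
    Defect("reversed polarity", occurrences=5, cost_per_escape=150.0, camera_visible=True),
    Defect("adhesive coverage", occurrences=8, cost_per_escape=50.0, camera_visible=False),
    Defect("cable routing", occurrences=3, cost_per_escape=200.0, camera_visible=False),
]

aoi_candidates, manual_checks = map_defects(defect_log)
print("AOI candidates:", aoi_candidates)
print("Keep manual:", manual_checks)
```

The two output lists give a starting scope for the pilot: the first defines what the AOI recipe should cover, and the second defines what stays with inspectors in a hybrid route.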

Recommended rollout sequence

- Define critical defect categories and acceptance criteria with quality, engineering, and operations teams.

- Select 1 product family with recurring builds and moderate visual complexity.

- Measure manual baseline performance for 2–4 weeks, including labor hours and defect escapes.

- Pilot automated optical inspection or hybrid review for 30–60 days.

- Standardize the final inspection route based on cost per accepted unit and risk reduction.
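Cost per accepted unit, the yardstick in the final step, can be estimated by combining the five cost layers named earlier: setup time, inspection cycle time, false rejects, escaped defects, and engineering maintenance. The sketch below is one possible formulation. The field names, the single blended hourly rate, and the sample figures are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class RouteCosts:
    units_inspected: int
    units_accepted: int
    setup_hours: float
    sec_per_unit: float
    maintenance_hours: float          # recipe upkeep or work-instruction revisions
    hourly_rate: float                # assumed blended labor rate
    false_rejects: int
    rework_cost_per_false_reject: float
    escapes: int
    cost_per_escape: float


def cost_per_accepted_unit(c):
    """Total inspection-related cost divided by accepted units."""
    if c.units_accepted <= 0:
        raise ValueError("units_accepted must be positive")
    labor_hours = (
        c.setup_hours
        + c.maintenance_hours
        + c.units_inspected * c.sec_per_unit / 3600
    )
    total = (
        labor_hours * c.hourly_rate
        + c.false_rejects * c.rework_cost_per_false_reject
        + c.escapes * c.cost_per_escape
    )
    return total / c.units_accepted


# Hypothetical manual-route figures for one trial period.
manual_route = RouteCosts(
    units_inspected=180, units_accepted=160,
    setup_hours=1.0, sec_per_unit=120, maintenance_hours=1.0, hourly_rate=30.0,
    false_rejects=4, rework_cost_per_false_reject=5.0,
    escapes=1, cost_per_escape=140.0,
)
print(round(cost_per_accepted_unit(manual_route), 2))
```

Running the same calculation for the AOI or hybrid pilot with its own measured inputs gives a like-for-like comparison. Equipment depreciation is left out here and would need to be added for a full capital case.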

FAQ: What leaders often ask before approving an inspection strategy

How small is too small for automated optical inspection? There is no universal cutoff, but batches below 50 units are often harder to justify unless defect risk is very high or the same recipe will be reused repeatedly. For regulated or high-value products, even smaller lots may justify AOI if traceability requirements are strict.

Can manual inspection reach the same quality level? It can under certain conditions: stable lighting, clear work instructions, calibrated samples, and well-trained inspectors. However, consistency usually declines when inspection sessions are long, shifts are extended, or defect criteria rely on subtle, repetitive pattern recognition.

Is a hybrid model harder to manage? It requires more planning, but often produces the best balance. Many manufacturers use manual inspection for first articles and subjective workmanship checks, while automated optical inspection screens repeatable visual defects. This reduces both labor strain and overreliance on machine rules.

What should procurement teams ask suppliers? Focus on recipe creation time, support response windows, false-call tuning process, training scope, maintenance intervals, and how the system handles product revision changes. These factors often matter more than the quoted machine speed in low-volume settings.

For enterprise decision-makers, the best inspection strategy for small batches is rarely based on ideology. It is based on process fit, defect economics, and operational repeatability. Automated optical inspection creates strong value when defect rules are stable, traceability matters, and similar products recur often enough to amortize setup effort. Manual inspection remains valuable where complexity is fluid, judgment is essential, and flexibility outweighs throughput.

If your organization is evaluating inspection options across advanced manufacturing, smart electronics, healthcare technology, or other precision-driven B2B operations, a structured comparison can prevent unnecessary capital expense and reduce downstream quality risk. TradeNexus Pro helps decision-makers assess solution fit, supplier capability, and implementation priorities with sector-specific market intelligence. Contact us to get a tailored evaluation framework, discuss inspection trade-offs, or explore more practical quality-control solutions for small-batch production.
