Machine Vision Systems in Harsh Environments: What Fails First

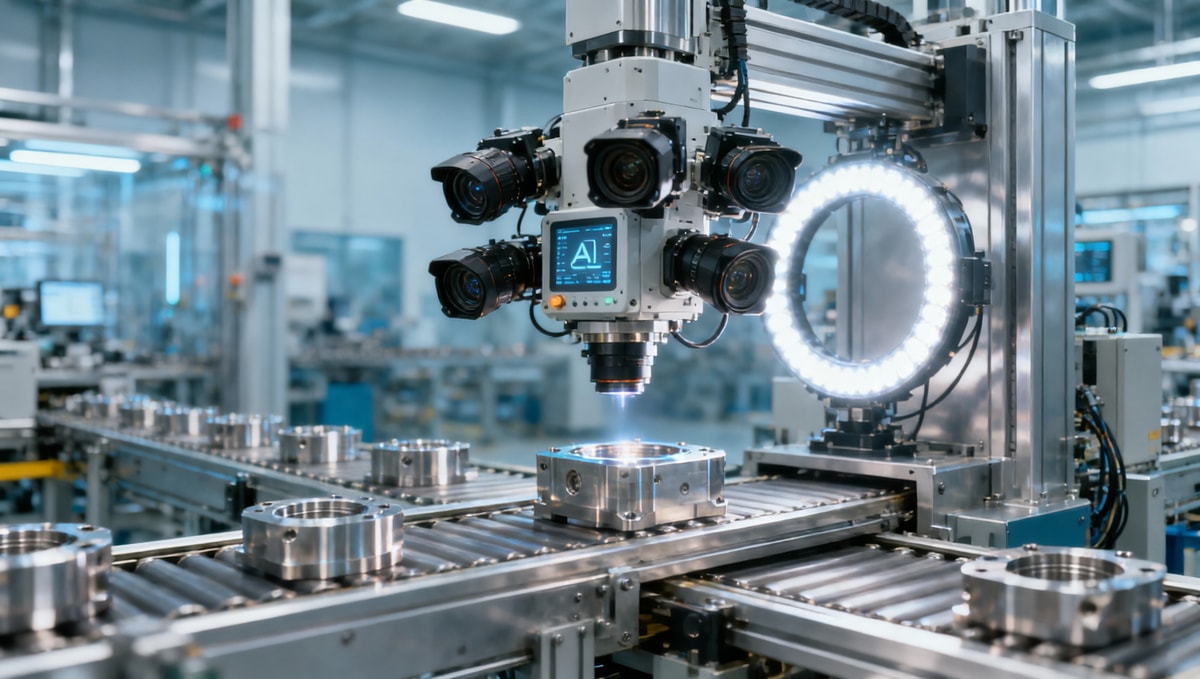

In harsh industrial settings, machine vision systems rarely fail all at once. More often, performance degrades first through optics contamination, unstable lighting, cable fatigue, or thermal stress, and each of these directly affects inspection accuracy and workplace safety. For quality control and safety managers, knowing what breaks first is the key to preventing false rejects, missed defects, and costly downtime.

Why do machine vision systems fail gradually instead of all at once?

In most factories, machine vision systems do not stop working in a single dramatic event. Performance usually degrades in layers. A camera may still trigger correctly while image contrast drops by 15% to 30%. Illumination may still turn on while flicker increases enough to distort edge detection. Cables may still pass signal but begin dropping data intermittently during vibration or temperature swings. For quality control teams, this means the first warning sign is often not machine stoppage but inspection inconsistency.
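To put a 15% to 30% contrast drop in measurable terms, a team can compute one contrast metric on the same region of interest in a baseline image and in a current image. The sketch below uses Michelson contrast on a small grayscale patch; the function names and pixel values are hypothetical, and a real deployment would pull the patch from its own image pipeline.

```python
def michelson_contrast(pixels):
    """Michelson contrast (Imax - Imin) / (Imax + Imin) for a grayscale patch."""
    flat = [p for row in pixels for p in row]
    i_max, i_min = max(flat), min(flat)
    if i_max + i_min == 0:
        return 0.0
    return (i_max - i_min) / (i_max + i_min)


def contrast_drop_percent(baseline_patch, current_patch):
    """Percentage of contrast lost relative to the baseline patch."""
    base = michelson_contrast(baseline_patch)
    if base == 0:
        raise ValueError("baseline patch has no contrast")
    current = michelson_contrast(current_patch)
    return 100.0 * (base - current) / base


# Hypothetical 8-bit patches across an edge: contamination lifts the dark side
# and dims the bright side, which flattens contrast.
baseline = [[40, 40, 200, 200], [40, 40, 200, 200]]
current = [[60, 60, 180, 180], [60, 60, 180, 180]]
print(f"contrast drop: {contrast_drop_percent(baseline, current):.1f}%")
```

On these sample values the drop is 25%, inside the band described above, even though nothing in the system has "failed".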

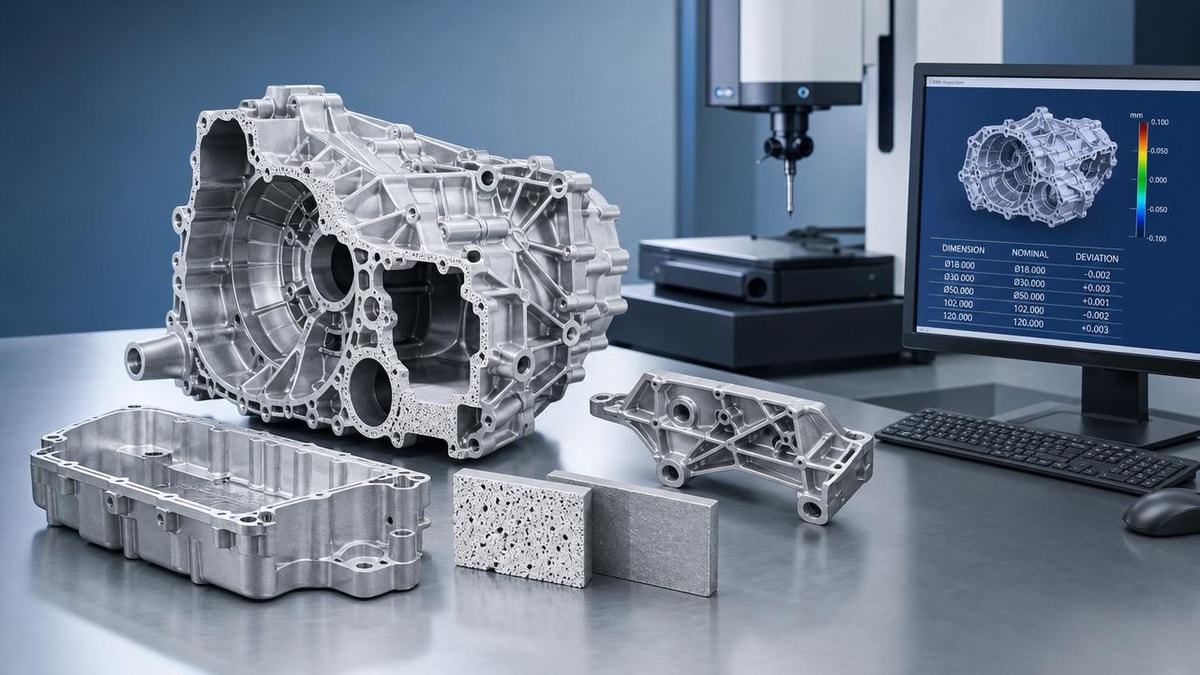

This gradual failure pattern matters because visual inspection systems sit at the intersection of production yield and risk control. A small change in optical clarity can increase false rejects on cosmetic defects. A slight timing drift can allow defective parts to pass downstream. In food processing, electronics assembly, heavy manufacturing, and warehouse automation, even a few hours of unstable inspection can affect hundreds or thousands of units depending on line speed.

The reason harsh environments accelerate this pattern is simple: contaminants, heat, moisture, washdown, dust, oil mist, shock, and electromagnetic noise do not attack every component equally. They target the most exposed and weakest links first. In many deployments, the first 3 to 6 months reveal recurring stress points long before complete failure appears on maintenance logs.

Which parts are usually stressed first?

The first stressed components are usually external or semi-exposed elements: lenses, protective windows, lighting assemblies, connectors, and moving cable sections. These parts face contamination, mechanical strain, cleaning chemicals, and thermal cycling every shift. By contrast, processors or embedded controllers often fail later unless they are housed poorly or exposed to sustained overheating above typical industrial operating ranges.

- Optics lose performance through dust, oil film, scratches, and condensation.

- Lighting degrades through heat buildup, voltage instability, and lens cover contamination.

- Cables and connectors weaken through repeated flexing, vibration, and ingress.

- Enclosures and seals fail when gaskets age under washdown, UV exposure, or chemical contact.

- Thermal management declines when vents clog or ambient temperatures stay elevated for 8 to 16 hours per day.

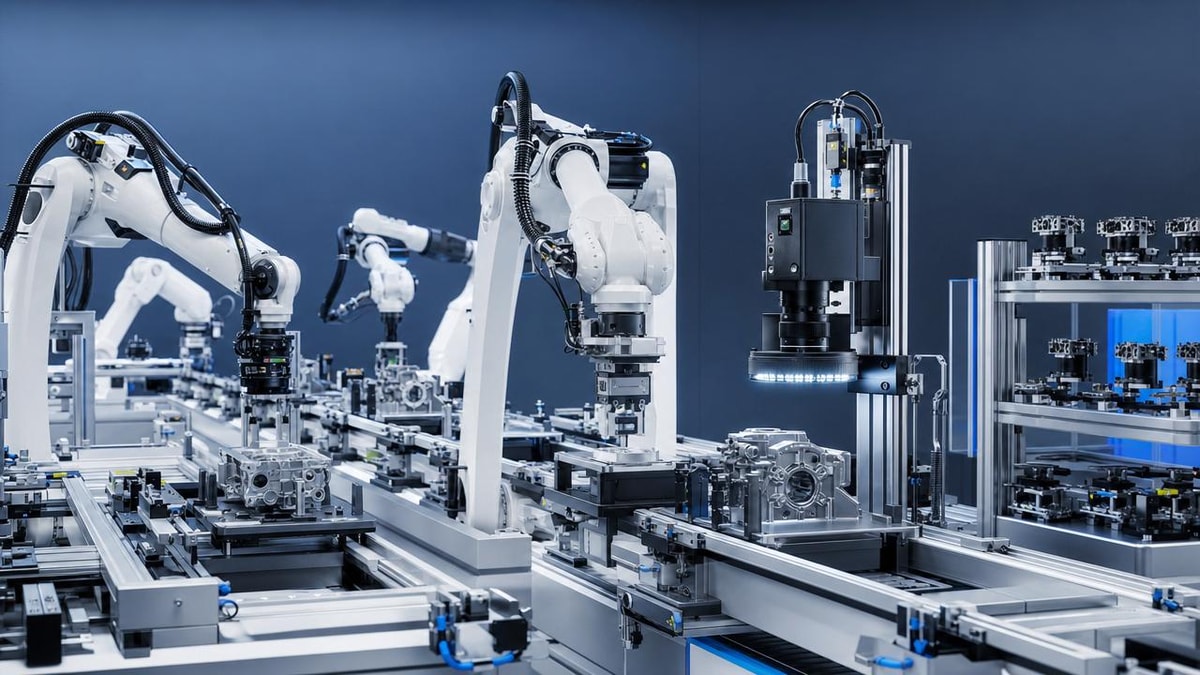

For safety managers, this pattern is important because machine vision systems are often tied to robotic guidance, presence verification, hazardous area monitoring, and safe material flow. A system that appears operational at a glance may already be operating outside acceptable detection margins. That gap between “running” and “reliable” is where many avoidable incidents begin.

What does “soft failure” look like on the line?

Soft failure usually appears as rising inspection variability rather than hard alarms. You may see retest rates increase from 1% to 4%, operator overrides become more frequent, or defect classifications drift between shifts. These are operational clues that machine vision systems are no longer seeing the same scene with the same stability, even if uptime dashboards still show green.
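One way to surface soft failure before dashboards turn red is to compare each shift's retest rate with a stable baseline. The sketch below assumes retest counts are already exported per shift; the `ShiftRecord` structure, the 2x multiplier, and the sample counts are illustrative assumptions, not recommended limits.

```python
from dataclasses import dataclass


@dataclass
class ShiftRecord:
    shift: str
    inspected: int
    retested: int

    @property
    def retest_rate(self) -> float:
        return self.retested / self.inspected if self.inspected else 0.0


def flag_soft_failure(records, baseline_rate, multiplier=2.0):
    """Return the shifts whose retest rate exceeds baseline_rate * multiplier."""
    limit = baseline_rate * multiplier
    return [r.shift for r in records if r.retest_rate > limit]


# Hypothetical day: baseline retest rate of 1%, one shift drifting toward 4%.
records = [
    ShiftRecord("Mon-A", 5000, 52),
    ShiftRecord("Mon-B", 5000, 61),
    ShiftRecord("Mon-C", 5000, 198),
]
print(flag_soft_failure(records, baseline_rate=0.01))
```

A flag tied to one shift, rather than to all three, is itself a clue: it points toward shift-specific conditions such as cleaning cycles or temperature peaks.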

What fails first in harsh environments: optics, lighting, cables, or electronics?

In practical maintenance terms, optics and lighting are usually the first visible points of failure, while cables and connectors are the most common hidden causes of intermittent faults. Electronics can also fail, but they are not always first unless ambient temperatures, enclosure design, or moisture exposure are severe. In dusty plants, foundries, cold storage, washdown lines, and outdoor yards, the order of failure often follows exposure intensity rather than component price.

For example, a lens cover exposed to airborne oil can lose enough clarity within 2 to 4 weeks to reduce contrast on reflective parts. An LED light bar in a hot enclosure may maintain output for months but shift in uniformity or color temperature before complete burnout. A cable routed near moving tooling may pass commissioning but develop micro-fractures after 500,000 to 2 million flex cycles. Each issue affects image reliability differently, so knowing the failure sequence helps prioritize inspection and spare parts planning.
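The 500,000 to 2 million flex-cycle range becomes calendar time once you know how often a cable actually flexes. The arithmetic below assumes one flex per machine cycle and a hypothetical cell running 30 cycles per minute for 16 hours a day; real cable life also depends on bend radius, routing, and the manufacturer's test method.

```python
def days_to_flex_limit(flex_cycles, cycles_per_minute, hours_per_day, flexes_per_cycle=1):
    """Estimate operating days until a cable reaches a given flex-cycle count."""
    flexes_per_day = cycles_per_minute * 60 * hours_per_day * flexes_per_cycle
    return flex_cycles / flexes_per_day


# Hypothetical cell: 30 machine cycles per minute, 16 operating hours per day.
for rating in (500_000, 2_000_000):
    days = days_to_flex_limit(rating, cycles_per_minute=30, hours_per_day=16)
    print(f"{rating:>9,} flex cycles -> about {days:.0f} operating days")
```

Under these assumptions, a cable at the low end of that range could reach its limit in under three weeks, which is why cables near moving tooling belong near the top of the inspection list.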

The table below gives a practical comparison of what quality and safety teams should monitor first when machine vision systems operate in harsh conditions.

The practical takeaway is that the cheapest visible part is often the first source of expensive downstream problems. Many machine vision systems are replaced or reprogrammed too early when the root issue is actually contamination, cable fatigue, or unstable lighting. That is why frontline diagnosis should begin with exposure-driven components before assuming software or algorithm failure.

Are optics really the most common first failure point?

In many inspection cells, yes. Optics are passive, but they are highly vulnerable. A transparent cover can appear clean to the naked eye while reducing image quality enough to affect low-contrast defect detection. In stamping, machining, packaging, and battery assembly, tiny contamination layers often cause the first measurable drop in vision performance. This is especially true where tolerances are below 0.5 mm or where reflective surfaces require stable contrast.

For harsh environments, protective design matters as much as the camera itself. Air knives, lens shrouds, isolated lighting, sealed housings, and anti-condensation measures often extend stable inspection windows more effectively than changing to a higher-resolution sensor alone.

How can quality control and safety managers tell when machine vision systems are degrading?

The clearest signal is not always alarm frequency. It is trend deviation. When machine vision systems begin to degrade, three patterns usually appear: rising false reject rates, widening measurement variation, and growing dependence on manual confirmation. If a line that normally runs with under 1.5% visual review suddenly needs 3% to 5% manual intervention, the issue may be environmental drift rather than product change.
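Trend deviation can be made more objective with a standard statistical process control tool. The sketch below computes the upper control limit of a p-chart, p̄ + 3·sqrt(p̄(1 − p̄)/n), for the manual review proportion. The 1.5% baseline echoes the example above; the sample size of 2,000 inspections per day and the daily counts are hypothetical.

```python
import math


def p_chart_ucl(p_bar, sample_size, sigmas=3.0):
    """Upper control limit for a p-chart: p_bar + k * sqrt(p_bar * (1 - p_bar) / n)."""
    return p_bar + sigmas * math.sqrt(p_bar * (1 - p_bar) / sample_size)


def out_of_control(counts, sample_size, p_bar):
    """Indices of samples whose proportion exceeds the upper control limit."""
    ucl = p_chart_ucl(p_bar, sample_size)
    return [i for i, c in enumerate(counts) if c / sample_size > ucl]


# Hypothetical: 1.5% baseline manual-review rate, 2,000 inspections per day.
p_bar, n = 0.015, 2000
ucl = p_chart_ucl(p_bar, n)
daily_reviews = [28, 33, 31, 45, 70]  # parts sent to manual review each day
print(f"UCL = {ucl:.2%}", out_of_control(daily_reviews, n, p_bar))
```

Here the limit works out to roughly 2.3%, so only the last day (3.5%, inside the 3% to 5% band mentioned above) is flagged. A run of points creeping toward the limit deserves attention too, even before one crosses it.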

Safety managers should also watch for delay-related symptoms. If the system begins to lag during peak temperature periods, or if presence checks become inconsistent during vibration-heavy shifts, those are early indicators of unstable performance. In robotic cells, even millisecond-level trigger inconsistency can create compounding positioning or verification errors, especially at throughputs above 40 to 60 parts per minute.
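Millisecond-level trigger inconsistency matters because of simple kinematics: position error equals part speed multiplied by timing error. The sketch below derives conveyor speed from throughput and an assumed 0.5 m spacing between parts; the spacing and jitter values are hypothetical and chosen only to show scale.

```python
def conveyor_speed_m_s(parts_per_minute, pitch_m):
    """Conveyor speed implied by throughput and part spacing (pitch), in m/s."""
    return parts_per_minute * pitch_m / 60.0


def position_error_mm(speed_m_s, timing_error_ms):
    """Distance the part travels during a trigger timing error, in millimetres."""
    # (m/s) x (ms) = 1e-3 m = 1 mm, so no extra unit conversion is needed.
    return speed_m_s * timing_error_ms


# Hypothetical 0.5 m pitch, at both ends of the 40 to 60 parts-per-minute range.
for ppm in (40, 60):
    speed = conveyor_speed_m_s(ppm, pitch_m=0.5)
    for jitter_ms in (1, 5):
        error = position_error_mm(speed, jitter_ms)
        print(f"{ppm} ppm ({speed:.2f} m/s), {jitter_ms} ms jitter -> {error:.2f} mm")
```

At 60 parts per minute with this spacing, 1 ms of jitter already shifts the part by 0.5 mm, which is significant wherever tolerances sit below 0.5 mm.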

A disciplined baseline program helps. Capture reference images, monitor illumination output, record enclosure temperature ranges, and log communication errors weekly or monthly depending on line criticality. Harsh-environment machine vision systems benefit from visual baselining because many faults become obvious only when current images are compared against startup conditions.
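Visual baselining can start simply: keep summary statistics from a reference image captured at startup, then compare each new capture against them. The sketch below tracks mean brightness (a rough proxy for illumination output) and a neighbouring-pixel gradient score (a rough proxy for optical clarity). The metric choice, the tolerances, and the sample crops are assumptions for illustration; production systems usually compute such metrics inside their own vision software.

```python
def mean_brightness(img):
    """Average grayscale level of an image given as a list of rows."""
    flat = [p for row in img for p in row]
    return sum(flat) / len(flat)


def sharpness(img):
    """Mean squared difference between neighbouring pixels; falls as the image blurs."""
    rows, cols = len(img), len(img[0])
    total, count = 0, 0
    for y in range(rows):
        for x in range(cols):
            if x + 1 < cols:
                total += (img[y][x + 1] - img[y][x]) ** 2
                count += 1
            if y + 1 < rows:
                total += (img[y + 1][x] - img[y][x]) ** 2
                count += 1
    return total / count if count else 0.0


def compare_to_baseline(baseline, current, brightness_tol=0.10, sharpness_tol=0.25):
    """Return issue messages when the current image drifts beyond relative tolerances."""
    issues = []
    b0, b1 = mean_brightness(baseline), mean_brightness(current)
    s0, s1 = sharpness(baseline), sharpness(current)
    if b0 and abs(b1 - b0) / b0 > brightness_tol:
        issues.append(f"brightness changed {100 * (b1 - b0) / b0:+.0f}%")
    if s0 and (s0 - s1) / s0 > sharpness_tol:
        issues.append(f"sharpness fell {100 * (s0 - s1) / s0:.0f}%")
    return issues


# Hypothetical 3x4 crops: a crisp edge at startup, a dimmer and softer edge today.
baseline = [[20, 20, 220, 220] for _ in range(3)]
current = [[30, 50, 150, 170] for _ in range(3)]
print(compare_to_baseline(baseline, current))
```

Logging both numbers at every review, not only when a warning fires, is what makes the weekly or monthly trend visible.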

Which indicators should be on a routine checklist?

Below is a practical checklist structure that works across multiple sectors, from advanced manufacturing to healthcare packaging and logistics automation.

- Image clarity trend: compare current images against baseline every 7 to 30 days.

- Light stability: check for brightness loss, hot spots, or flicker during each preventive maintenance cycle.

- Thermal condition: log enclosure and ambient temperature, especially if peaks exceed typical equipment ranges.

- Connector condition: inspect seals, locking rings, and signs of corrosion or vibration loosening.

- Error pattern review: distinguish random faults from recurring faults tied to time, shift, or cleaning cycles.

- Manual override frequency: track how often operators bypass machine vision decisions.
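To keep the checklist auditable, each indicator can be stored with a check interval and a last-checked date so that overdue items surface automatically. The structure below is a hypothetical sketch: the indicator names mirror the list above, while the intervals and dates are illustrative assumptions.

```python
from datetime import date, timedelta

# Hypothetical check intervals, in days, for the indicators listed above.
CHECK_INTERVALS = {
    "image clarity trend": 7,
    "light stability": 30,
    "thermal condition": 7,
    "connector condition": 30,
    "error pattern review": 7,
    "manual override frequency": 7,
}


def overdue_items(last_checked, today):
    """Return indicators never checked, or last checked longer ago than their interval."""
    overdue = []
    for name, interval in CHECK_INTERVALS.items():
        last = last_checked.get(name)
        if last is None or today - last > timedelta(days=interval):
            overdue.append(name)
    return overdue


today = date(2024, 6, 30)
last_checked = {
    "image clarity trend": date(2024, 6, 27),
    "light stability": date(2024, 5, 20),
    "thermal condition": date(2024, 6, 28),
    "connector condition": date(2024, 6, 10),
    "error pattern review": date(2024, 6, 25),
}
print(overdue_items(last_checked, today))
```

Treating a missing record as overdue is a deliberate choice: an indicator nobody has logged is the one most likely to be hiding a soft failure.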

These indicators are valuable because they turn a vague complaint like “the camera is acting up” into a measurable maintenance path. That improves communication between quality, safety, engineering, and procurement teams.

What is a useful review interval?

For critical lines, a light-touch review every 7 days and a deeper condition check every 30 days is often realistic. In very harsh areas such as washdown zones, grinding cells, or outdoor loading points, daily optics checks and monthly cable inspection are usually justified. The review interval should match contamination rate, line speed, and the cost of a bad pass or false reject.
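The interval guidance above can be written down as a small rule table so that every line is scheduled the same way. The critical and very-harsh entries follow the examples in the paragraph; the zone names, the monthly review for standard stations, and the idea of encoding the plan this way are assumptions that each site would adjust to its own contamination rate and cost of escapes.

```python
from enum import Enum


class Zone(Enum):
    STANDARD = "standard"
    CRITICAL = "critical"        # e.g. high-speed or safety-relevant lines
    VERY_HARSH = "very_harsh"    # e.g. washdown zones, grinding cells, outdoor loading


# (check name, interval in days) per zone; STANDARD is an assumed monthly default.
REVIEW_PLAN = {
    Zone.STANDARD: [("condition review", 30)],
    Zone.CRITICAL: [("light-touch review", 7), ("deeper condition check", 30)],
    Zone.VERY_HARSH: [("optics check", 1), ("cable inspection", 30)],
}


def review_plan(zone: Zone):
    """Return the list of (check, interval_days) pairs for a zone."""
    return REVIEW_PLAN[zone]


for zone in Zone:
    print(zone.value, review_plan(zone))
```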

What are the most common mistakes when selecting machine vision systems for harsh environments?

One common mistake is focusing on resolution first and environmental resilience second. A high-megapixel camera does not solve contamination, moisture ingress, or unstable lighting. A closely related mistake is assuming that the protection level of the camera body alone defines the reliability of the full machine vision system. In reality, the lens mount, connector sealing, illumination housing, cable routing, and enclosure ventilation often determine field performance more than the core imaging sensor does.

A second mistake is underestimating maintenance access. Teams sometimes specify protective housings that are difficult to clean or inspect, turning a 3-minute wipe-down into a 25-minute stoppage. That increases the chance that operators skip routine care. In harsh environments, cleanability and inspectability are not convenience features; they are uptime features.
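The gap between a 3-minute and a 25-minute cleaning is easy to underestimate. The arithmetic below assumes, hypothetically, three cleanings per day (one per shift) and 250 operating days per year; substituting a site's own cleaning frequency shows the annual difference.

```python
def annual_cleaning_hours(minutes_per_clean, cleans_per_day, operating_days=250):
    """Total hours per year spent on routine optics cleaning."""
    return minutes_per_clean * cleans_per_day * operating_days / 60


easy = annual_cleaning_hours(3, cleans_per_day=3)
hard = annual_cleaning_hours(25, cleans_per_day=3)
print(f"easy access: {easy:.1f} h/yr, hard access: {hard:.1f} h/yr, "
      f"difference: {hard - easy:.1f} h/yr")
```

Under these assumptions, hard-to-reach housings cost about 275 extra hours of stoppage a year, before counting the cleanings operators skip.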

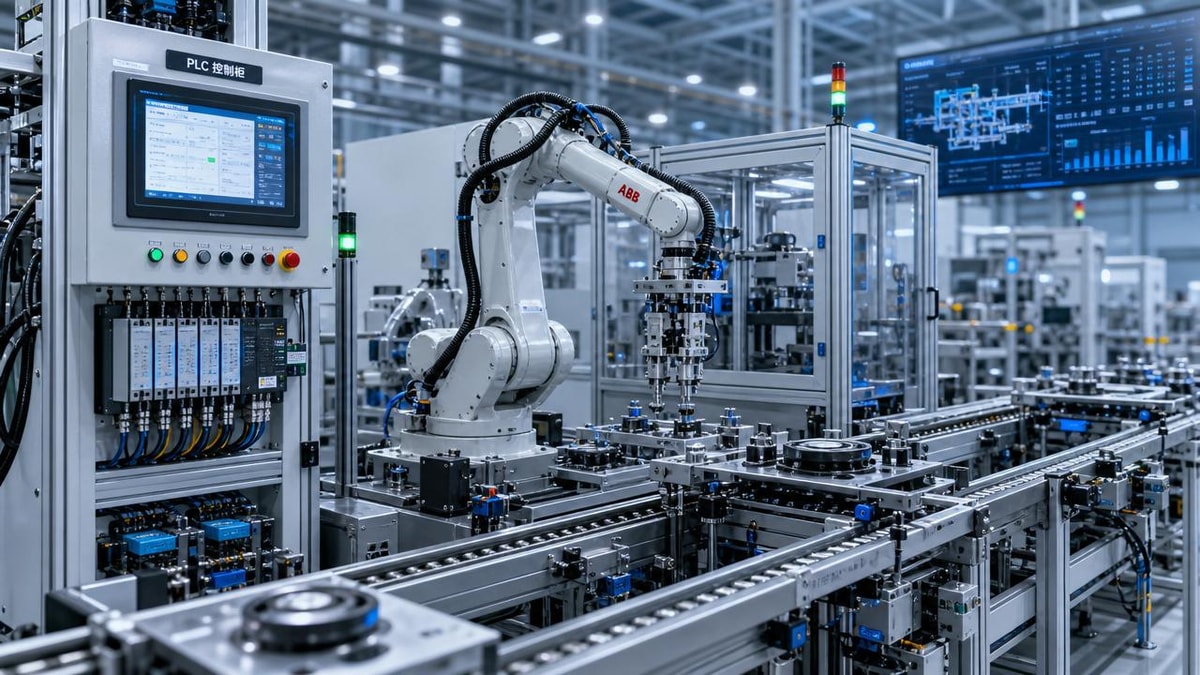

A third mistake is failing to align machine vision systems with actual plant stressors. A line exposed to coolant mist needs different protection priorities than a cold-room conveyor or a high-vibration press line. Environmental mapping should include temperature range, ingress risk, cleaning method, shock exposure, cable movement, and nearby electromagnetic noise sources before final selection.
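Environmental mapping is easier to review across teams when every station's stressors are captured in the same structure. The sketch below records the factors named above and derives a rough list of protection priorities; the field names, the 30 °C swing threshold, and the priority rules are hypothetical rules of thumb, not a selection standard.

```python
from dataclasses import dataclass


@dataclass
class StationEnvironment:
    name: str
    temp_min_c: float
    temp_max_c: float
    contaminant: str        # e.g. "dry dust", "oil mist", "condensation", "washdown"
    cleaning_method: str    # e.g. "wipe", "pressure washdown"
    high_shock: bool
    moving_cables: bool
    near_emi_source: bool   # e.g. drives or welders nearby


def protection_priorities(env: StationEnvironment):
    """Rule-of-thumb protection priorities derived from the mapped stressors."""
    priorities = []
    if env.contaminant in ("oil mist", "dry dust"):
        priorities.append("lens shroud or air purge")
    if env.contaminant in ("condensation", "washdown") or env.cleaning_method == "pressure washdown":
        priorities.append("sealed housing and connectors")
    if env.temp_max_c - env.temp_min_c > 30:  # hypothetical swing threshold
        priorities.append("anti-condensation and thermal management")
    if env.high_shock:
        priorities.append("shock-rated mounts")
    if env.moving_cables:
        priorities.append("high-flex cables with strain relief")
    if env.near_emi_source:
        priorities.append("shielded cabling, separated power and signal routing")
    return priorities


press = StationEnvironment("press line", 10, 45, "oil mist", "wipe", True, True, True)
print(protection_priorities(press))
```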

What should buyers compare before purchase?

When comparing machine vision systems, procurement and engineering teams should look beyond nominal camera specifications. The next table is a practical evaluation guide for cross-functional review.

This type of comparison helps prevent specification bias. A machine vision system that looks excellent in a lab may underperform on a line exposed to washdown every shift or vibration for 20 hours per day. The best decision usually comes from matching exposure conditions to serviceability and failure tolerance, not from image resolution alone.

Should teams ask about standards and compliance?

Yes, especially where safety, hygiene, or electrical reliability matter. It is reasonable to ask how the system aligns with common industrial expectations for ingress protection, electrical safety, EMC considerations, and sanitary or washdown suitability where relevant. The goal is not to chase labels blindly, but to confirm that the proposed machine vision systems match the actual duty cycle and site conditions.
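For ingress protection specifically, IEC 60529 IP codes such as IP65 or IP67 carry two digits: the first for solids, the second for liquids. A quick screening check can compare each component in the chain (camera, light, connectors) against the level a zone requires. The sketch below is a simplification under stated assumptions: it treats the solids digit as cumulative, treats liquid digits 0 to 6 as cumulative, and conservatively requires an exact marking for immersion ratings 7 and 8, because an immersion-only rating does not by itself imply resistance to water jets. Letter suffixes such as 69K are out of scope, and the component list is hypothetical.

```python
import re

# Two-digit IP codes only (e.g. "IP65"); suffixed codes such as "IP69K" are rejected.
IP_PATTERN = re.compile(r"IP([0-6])([0-8])")


def parse_ip(code):
    """Split an IP code such as 'IP67' into (solids_digit, liquids_digit)."""
    match = IP_PATTERN.fullmatch(code.strip().upper())
    if match is None:
        raise ValueError(f"unsupported IP code: {code!r}")
    return int(match.group(1)), int(match.group(2))


def liquids_ok(marked, required):
    """Digits 0-6 treated as cumulative; immersion ratings 7 and 8 must match exactly."""
    if required <= 6:
        return required <= marked <= 6  # conservative: immersion-only ratings do not count
    return marked == required


def meets_requirement(marked_codes, required_code):
    """True if any of a component's marked codes (dual coding allowed) meets the requirement."""
    req_solids, req_liquids = parse_ip(required_code)
    for code in marked_codes:
        solids, liquids = parse_ip(code)
        if solids >= req_solids and liquids_ok(liquids, req_liquids):
            return True
    return False


# Hypothetical zone requiring IP66, checked component by component.
components = {"camera": ["IP67"], "light bar": ["IP66", "IP67"], "connector": ["IP65"]}
for name, codes in components.items():
    print(name, meets_requirement(codes, "IP66"))
```

In this example the IP67 camera does not pass an IP66 (jet) requirement on its own marking, which illustrates why the protection level of the camera body alone says little about the whole system.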

How can teams extend the life of machine vision systems without overspending?

The most cost-effective approach is early prevention on the first-failing components. In many facilities, adding a better protective window, improving cable strain relief, isolating lights from direct spray, or reducing enclosure heat yields more value than replacing the entire machine vision system. Small protective upgrades often cut recurring instability more effectively than reactive component swaps.

Another practical strategy is tiered maintenance. Not every line needs the same inspection intensity. A high-speed defect detection cell handling thousands of units per shift may justify weekly image baselining and stocked spare lights. A lower-volume station may only require monthly review. Matching maintenance intensity to risk level keeps cost under control while protecting quality outcomes.

Cross-functional ownership also matters. Machine vision systems perform best when quality, maintenance, controls, and safety teams share the same failure indicators. If only one department sees the logs, soft failures often persist too long. A short 10-minute weekly review of image samples, light condition, and fault events can prevent a much larger stoppage later.

What practical actions offer the fastest return?

- Establish baseline images during stable production and compare them routinely.

- Protect optics from direct contamination using shields, air purge, or better placement.

- Separate power and signal routing where electrical noise is likely.

- Use service-friendly mounts so cleaning and replacement take minutes, not hours.

- Monitor heat buildup inside enclosures, especially in summer or near furnaces and drives.

- Stock the components most likely to fail first: windows, lights, cables, and connectors.

These steps are useful because they target the highest-probability failure points first. For most harsh-environment machine vision systems, uptime improvements come from protecting stability at the edges of the system rather than treating the camera alone as the whole solution.

What should you clarify before upgrading, sourcing, or discussing a new project?

Before investing in new machine vision systems, start with a short list of operational facts. What is the actual contaminant type: dry dust, oil mist, condensation, or chemical washdown? What are the temperature highs and lows over a 24-hour cycle? How much vibration or cable movement occurs? What is the cost of one false accept compared with one false reject? These answers shape the right system architecture far more than generic product brochures do.
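The false accept versus false reject question can be turned into a simple expected-cost comparison between candidate inspection settings. All rates, volumes, and unit costs below are hypothetical placeholders; the point is the structure of the calculation, not the numbers.

```python
def expected_cost_per_shift(units, false_accept_rate, false_reject_rate,
                            cost_false_accept, cost_false_reject):
    """Expected cost of inspection errors for one shift (rates are fractions of all units)."""
    return units * (false_accept_rate * cost_false_accept
                    + false_reject_rate * cost_false_reject)


UNITS = 10_000     # hypothetical units per shift
COST_FA = 500.0    # hypothetical cost of one escaped defect
COST_FR = 2.0      # hypothetical cost of scrapping or re-checking one good part

settings = {
    "loose threshold": (0.0005, 0.005),  # (false accept rate, false reject rate)
    "tight threshold": (0.0001, 0.02),
}
for name, (fa, fr) in settings.items():
    cost = expected_cost_per_shift(UNITS, fa, fr, COST_FA, COST_FR)
    print(f"{name}: {cost:,.0f} per shift")
```

With an escaped defect costing 250 times a false reject in this example, the tighter setting is cheaper even though it rejects four times as many good parts. Reverse the cost ratio and the answer flips, which is why this number belongs in the project brief.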

It is also important to define inspection criticality. A packaging verification station, a dimensional measurement cell, and a safety-related detection point do not carry the same tolerance for image drift. If the application is safety-sensitive, response time, repeatability, and fault handling must be reviewed carefully. If the application is quality-focused, the priority may be measurement repeatability, defect contrast, and cleaning interval stability over a full shift.

For international sourcing and supplier evaluation, teams should also clarify delivery and support details early. Typical questions include sample evaluation options, integration lead time, spare availability, customization scope, replacement cable lead times, and whether environmental adaptations can be preconfigured before shipment. These project details affect real deployment success just as much as core imaging performance.

Why choose us for machine vision systems research and supplier evaluation?

TradeNexus Pro helps procurement leaders, quality teams, and safety decision-makers move beyond surface-level vendor comparison. Our industry coverage connects machine vision decisions to the broader realities of advanced manufacturing, smart electronics, healthcare technology, green energy equipment, and supply chain digitalization. That means you can evaluate not only component suitability, but also sourcing resilience, implementation risk, and cross-border supplier fit.

If you are reviewing a harsh-environment vision project, you can contact us to discuss parameter confirmation, product selection logic, likely delivery cycles, customization direction, environmental protection priorities, sample support expectations, and how quotations will be structured. This is especially useful when your team needs to compare several technical paths without wasting time on poorly matched offers.

If you want a more informed next step, reach out with your application scene, contamination type, operating temperature range, inspection target, and preferred timeline. With those basics, the conversation around machine vision systems becomes faster, more practical, and much easier to convert into a sourcing or upgrade plan that protects both quality and safety.
