Microgrid Controllers: What Changes Once Systems Scale Up

As distributed energy projects expand from single-site pilots to multi-asset networks, microgrid controllers face a very different level of complexity. For technical evaluators, the real question is no longer basic coordination, but how control architecture, interoperability, cybersecurity, and scalability perform under growing operational demands. This article examines what truly changes once systems scale up.

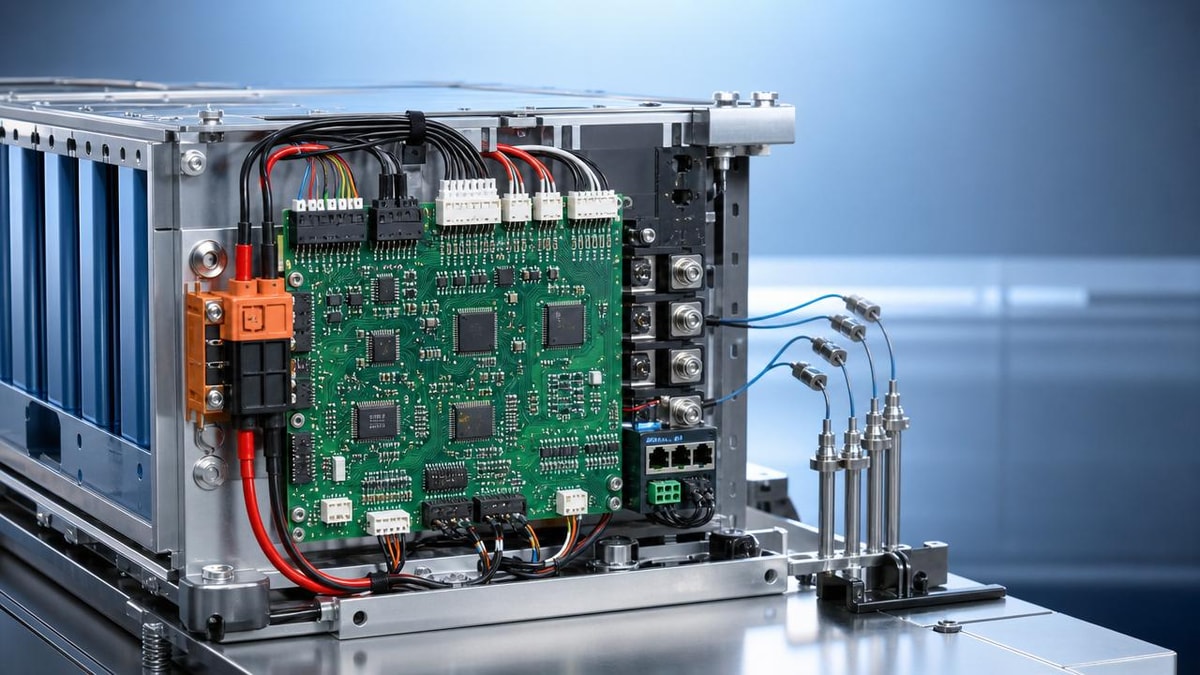

For most technical evaluators, the core answer is straightforward: once a microgrid moves beyond a contained pilot, the controller is no longer just an optimization tool. It becomes critical infrastructure for reliability, fleet orchestration, data governance, and cross-system decision-making. What matters at scale is not whether a controller can run a battery and a few distributed energy resources (DERs), but whether it can coordinate many assets, sites, vendors, and operating modes without creating hidden operational risk.

That shift changes how microgrid controllers should be evaluated. Features that look impressive in a demo environment may matter less than deterministic response, protocol flexibility, lifecycle maintainability, role-based access control, alarm management, and support for staged expansion. In practice, scaling exposes architectural weaknesses that rarely appear in smaller systems.

Why scaling up changes the evaluation criteria for microgrid controllers

At pilot scale, a microgrid controller often operates in a relatively predictable environment. There may be one site, a limited number of DERs, a small set of operating priorities, and a narrow stakeholder group. Under those conditions, even a controller with limited abstraction layers or vendor-specific integrations can appear effective.

As systems scale, however, complexity increases in several dimensions at once. More assets mean more control points, more telemetry streams, more exceptions, and more interdependencies. Multi-site deployment introduces differing load profiles, local grid constraints, tariff structures, regulatory conditions, and cybersecurity boundaries. The controller must do more than dispatch power. It must maintain coordinated situational awareness across a larger and more dynamic operating envelope.

For technical assessment teams, this means the selection process should shift from feature comparison to systems engineering analysis. The real question becomes whether the controller architecture can absorb complexity without degrading response speed, operational clarity, or integration reliability. A platform that works well at one campus can struggle when extended across industrial parks, remote assets, or mixed portfolios with solar, storage, combined heat and power (CHP), EV charging, and flexible loads.

This is also where scalability stops being a marketing term and becomes an engineering requirement. Evaluators need evidence that growth in sites, assets, and use cases does not force a complete redesign of the controls layer. If every expansion requires custom code, manual intervention, or new middleware, the platform may become too expensive and fragile to support long-term rollout.

What actually becomes harder when a microgrid grows

The first challenge is operational coordination. A larger microgrid or portfolio must handle more simultaneous decisions: when to charge or discharge storage, when to curtail loads, how to prioritize resilience versus economics, how to coordinate onsite generation with utility signals, and how to respond to faults without causing cascading issues. Control logic becomes multidimensional rather than linear.

The second challenge is time sensitivity. At scale, some decisions remain strategic and slow, such as day-ahead scheduling, while others become immediate and safety-critical, such as islanding transitions, frequency support, breaker status response, or black-start sequencing. A controller that mixes all logic at the same layer may struggle under pressure. Technical evaluators should examine whether the platform separates supervisory optimization from fast local control in a stable way.

The third challenge is exception management. Small systems can often be managed by experienced operators who understand site-specific behavior. Larger deployments produce many more edge cases: communication dropouts, partial asset availability, inverter firmware mismatches, data latency, sensor drift, conflicting schedules, or local manual overrides. Scaled systems need controllers that fail gracefully, preserve visibility, and support rapid diagnosis.

The fourth challenge is governance. Once multiple sites, teams, and vendors are involved, control decisions need traceability. Who changed a dispatch rule? Which version of the control logic is active at each site? How are alarms prioritized? Can operators simulate policy changes before deployment? These questions are central for enterprise-scale adoption, especially in regulated or mission-critical environments.

Control architecture matters more than feature count

One of the most common mistakes in evaluating microgrid controllers is overemphasizing the visible application layer while underestimating the underlying control architecture. At scale, architecture determines whether the system remains stable, maintainable, and interoperable as complexity grows.

Technical evaluators should look closely at whether the platform supports hierarchical or distributed control. In many scaled deployments, local controllers must continue executing essential functions even if site-to-cloud or site-to-central communications are lost. A central supervisory layer may optimize across sites, but it should not become a single point of operational failure. Resilience requires local autonomy for critical functions and coordinated oversight for broader optimization.
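The local-autonomy idea can be illustrated with a minimal Python sketch. It assumes a site controller that receives battery setpoints from a central supervisory layer and falls back to a locally configured safe value when those setpoints go stale. The class names, the 30-second staleness threshold, and the sign convention are hypothetical choices for illustration, not taken from any particular product.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class SupervisorySetpoint:
    battery_kw: float   # assumed convention: positive = discharge, negative = charge
    received_at: float  # seconds on a monotonic clock


class LocalController:
    """Hypothetical site controller that keeps running if the supervisor goes quiet."""

    def __init__(self, stale_after_s: float = 30.0, fallback_kw: float = 0.0) -> None:
        self.stale_after_s = stale_after_s
        self.fallback_kw = fallback_kw
        self._last: Optional[SupervisorySetpoint] = None

    def on_supervisory_setpoint(self, setpoint: SupervisorySetpoint) -> None:
        # Store the most recent instruction from the central optimizer.
        self._last = setpoint

    def active_setpoint(self, now: float) -> Tuple[float, str]:
        # Use the supervisory value only while it is fresh; otherwise
        # fall back to the locally configured value.
        if self._last is None or now - self._last.received_at > self.stale_after_s:
            return self.fallback_kw, "local_fallback"
        return self._last.battery_kw, "supervisory"


ctrl = LocalController(stale_after_s=30.0, fallback_kw=0.0)
ctrl.on_supervisory_setpoint(SupervisorySetpoint(battery_kw=50.0, received_at=100.0))
print(ctrl.active_setpoint(now=110.0))  # fresh: supervisory value is used
print(ctrl.active_setpoint(now=131.0))  # stale: local fallback takes over
```

Returning the source of the setpoint alongside the value is a deliberate choice here: it lets operators see when a site is running on its own logic rather than under central coordination.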

Modularity is equally important. A controller designed with clean separation between data acquisition, optimization, rule execution, forecasting, historian functions, and user interfaces will typically scale better than a monolithic application. Modular design supports incremental upgrades, better testing, and easier onboarding of new assets or software components.

Another architectural issue is model extensibility. Early-stage systems may be built around a narrow set of predefined asset classes, but larger projects often add technologies over time. If the controller cannot easily represent new asset behaviors, control priorities, or market participation modes, future expansion becomes constrained. Evaluators should ask not only what the platform supports today, but how new functions are introduced and validated.

Interoperability becomes a long-term cost and risk issue

In scaled environments, interoperability is not a convenience feature. It is a primary determinant of lifecycle cost, deployment speed, and operational flexibility. Most large microgrids do not consist of equipment from a single vendor. They include inverters, battery energy storage systems (BESS), relays, meters, building controls, SCADA elements, protection devices, and utility interfaces from multiple manufacturers, often added over several phases.

For this reason, technical evaluators should pay close attention to protocol support, semantic mapping, and data normalization. Native compatibility with standards such as Modbus, DNP3, IEC 61850, OPC UA, BACnet, or SunSpec can reduce integration friction, but protocol availability alone is not enough. The controller also needs a consistent internal model for asset states, commands, alarms, and timestamps. Without that, integration remains brittle even when the transport protocol is supported.
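To make the point about a consistent internal model concrete, here is a small Python sketch. It assumes two invented vendors whose devices report the same quantities in different units, status encodings, and timestamp formats. All field names, status codes, and vendor conventions below are hypothetical. The transport layer (Modbus, DNP3, and so on) is deliberately left out, because the normalization step is the same whichever protocol delivered the raw values.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class AssetState(Enum):
    RUNNING = "running"
    STANDBY = "standby"
    FAULT = "fault"
    UNKNOWN = "unknown"


@dataclass(frozen=True)
class NormalizedReading:
    asset_id: str
    state: AssetState
    active_power_kw: float
    timestamp: datetime  # always timezone-aware UTC


# Hypothetical vendor-specific status encodings.
VENDOR_A_STATES = {1: AssetState.STANDBY, 2: AssetState.RUNNING, 7: AssetState.FAULT}
VENDOR_B_STATES = {"idle": AssetState.STANDBY, "run": AssetState.RUNNING,
                   "fault": AssetState.FAULT}


def normalize_vendor_a(asset_id: str, raw: dict) -> NormalizedReading:
    # Assumed vendor A format: watts, integer status code, epoch seconds.
    return NormalizedReading(
        asset_id=asset_id,
        state=VENDOR_A_STATES.get(raw["status_code"], AssetState.UNKNOWN),
        active_power_kw=raw["p_w"] / 1000.0,
        timestamp=datetime.fromtimestamp(raw["epoch_s"], tz=timezone.utc),
    )


def normalize_vendor_b(asset_id: str, raw: dict) -> NormalizedReading:
    # Assumed vendor B format: kilowatts, status string, ISO 8601 with UTC offset.
    return NormalizedReading(
        asset_id=asset_id,
        state=VENDOR_B_STATES.get(raw["status"].lower(), AssetState.UNKNOWN),
        active_power_kw=float(raw["p_kw"]),
        timestamp=datetime.fromisoformat(raw["ts"]).astimezone(timezone.utc),
    )


print(normalize_vendor_a("bess-1", {"status_code": 2, "p_w": 25000, "epoch_s": 0}))
print(normalize_vendor_b("pv-1", {"status": "Fault", "p_kw": 0.0,
                                  "ts": "2024-01-01T12:00:00+02:00"}))
```

Unrecognized status values map to `UNKNOWN` rather than raising an error, so a firmware update that adds a new code degrades visibility for one asset instead of breaking ingestion.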

Vendor lock-in risk also rises as systems scale. A controller that depends heavily on custom connectors or proprietary data handling may create long-term dependency on the original integrator. That can slow expansion, complicate maintenance, and weaken procurement leverage. From a strategic standpoint, open integration practices and documented APIs are often more valuable than a broad but opaque list of supported devices.

Evaluators should also examine commissioning workflows. How much engineering effort is required to add a new battery system or solar inverter model? Can templates be reused across sites? Are digital twins or test environments available before field deployment? Interoperability should be judged by repeatability, not just initial success.

Cybersecurity stops being an IT checkbox

At small scale, cybersecurity discussions are often deferred or treated as a standard networking issue. At larger scale, that approach becomes dangerous. A microgrid controller increasingly sits at the intersection of operational technology, enterprise systems, remote access channels, and external market or utility interfaces. This makes it a high-value target and a potential pathway for broader operational disruption.

For technical evaluators, cybersecurity review should focus on architecture and operations, not just compliance language. Important questions include whether the controller supports network segmentation, secure remote access, role-based permissions, multi-factor authentication, encrypted communications, patch management workflows, and event logging suitable for forensic review.

It is also essential to understand how the platform handles third-party integration. Every external API, cloud connection, and vendor-maintenance channel expands the attack surface. As microgrids scale across multiple sites, identity management and credential governance become much harder. A secure design should reduce trust assumptions and support clear boundaries between operators, integrators, OEMs, and enterprise administrators.
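One way to picture clear boundaries between these parties is a deny-by-default permission check that also records every decision. The Python sketch below is illustrative only: the role names follow the groups mentioned above, while the action names and the in-memory audit list are hypothetical stand-ins for whatever identity and logging systems a real deployment would use.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical mapping of roles to the actions they may perform.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "operator": {"view_telemetry", "acknowledge_alarm", "issue_dispatch"},
    "integrator": {"view_telemetry", "edit_device_mapping"},
    "oem_maintenance": {"view_telemetry", "update_firmware"},
    "enterprise_admin": {"view_telemetry", "manage_users"},
}

# Each entry: (user, role, action, allowed). A real system would persist this.
audit_log: List[Tuple[str, str, str, bool]] = []


def authorize(user: str, role: str, action: str) -> bool:
    """Allow an action only if the role explicitly grants it; log every decision."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append((user, role, action, allowed))
    return allowed


print(authorize("alice", "operator", "issue_dispatch"))       # True
print(authorize("vendor-x", "oem_maintenance", "issue_dispatch"))  # False
print(authorize("mallory", "unknown_role", "view_telemetry"))  # False
```

Denied attempts are logged as well as granted ones, since a pattern of refused requests is often the more useful signal during forensic review.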

Another key issue is cyber-resilience. If external communications are interrupted or a suspicious event is detected, can the local system continue operating safely? Can remote commands be restricted without losing local control? In scaled deployments, the best controller is not simply the one with the longest security feature list, but the one designed to preserve safe operations under degraded conditions.

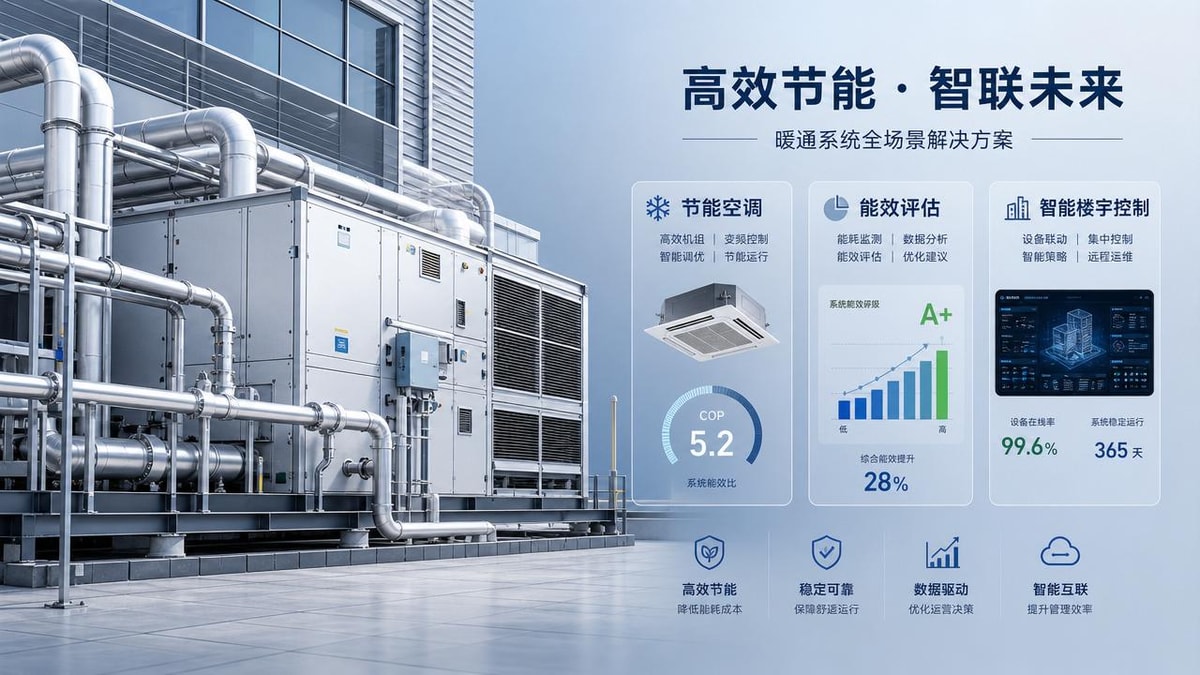

Data quality, observability, and analytics become central to performance

Many scaling problems are not caused by poor optimization logic. They are caused by inconsistent or low-quality data. As more assets and sites come online, timestamp alignment, telemetry granularity, naming conventions, alarm fidelity, and state estimation all become more important. A controller cannot make reliable decisions if the inputs are delayed, ambiguous, or incomplete.

Technical evaluators should therefore assess observability capabilities in depth. Can operators see which commands were issued, whether they were acknowledged, and why a control decision was made? Is there enough historical context to diagnose oscillations, dispatch anomalies, or recurring curtailment events? Can the system distinguish between communication failure, asset unavailability, and policy constraints?
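The last of these questions can be sketched in code. The Python example below assumes a simplified command record with three hypothetical fields and shows one possible order of checks for labelling why a command did not take effect. Real controllers would draw on richer evidence, such as heartbeat history and device-reported fault codes.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Outcome(Enum):
    EXECUTED = "executed"
    COMM_FAILURE = "communication_failure"
    ASSET_UNAVAILABLE = "asset_unavailable"
    POLICY_CONSTRAINT = "policy_constraint"


@dataclass
class CommandRecord:
    command_id: str
    blocked_by_policy: Optional[str]  # name of the blocking rule, if any (hypothetical)
    acknowledged: bool                # did the device acknowledge the command?
    asset_available: bool             # did the device report itself able to act?


def classify(record: CommandRecord) -> Outcome:
    # A policy block means the command was never sent, so check it first.
    if record.blocked_by_policy is not None:
        return Outcome.POLICY_CONSTRAINT
    # No acknowledgement means the asset's own status cannot be trusted yet.
    if not record.acknowledged:
        return Outcome.COMM_FAILURE
    # The device answered but cannot comply (for example, in maintenance mode).
    if not record.asset_available:
        return Outcome.ASSET_UNAVAILABLE
    return Outcome.EXECUTED


print(classify(CommandRecord("c1", "export_limit", False, True)))
print(classify(CommandRecord("c2", None, False, True)))
print(classify(CommandRecord("c3", None, True, False)))
```

Storing the classified outcome with each command gives operators a direct answer to "why did nothing happen?" without reconstructing it from raw logs.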

Analytics maturity also matters more at scale. Single-site projects can often tolerate some manual analysis, but fleet-scale systems require structured reporting on performance, uptime, DER utilization, avoided energy cost, resilience events, and control exceptions. If the controller cannot expose usable data to enterprise analytics environments, value realization becomes harder to prove internally.

This is especially relevant for organizations trying to compare site performance or build standardized expansion models. A controller that produces clean, structured, accessible operational data can support both engineering improvement and executive decision-making. One that traps data in siloed interfaces creates friction across the organization.

Scalability should be tested in operational scenarios, not vendor slides

When vendors claim scalability, they often mean the software can technically connect to more devices or process more data points. For evaluators, that definition is too narrow. Real scalability means the controller can support more complexity while preserving reliability, usability, security, and maintainability.

A practical evaluation should include scenario-based testing. For example, what happens when a site loses connectivity to the central optimizer? How does the controller behave if two DER assets provide conflicting state information? Can the system execute islanding and resynchronization smoothly while fleet-level optimization is active elsewhere? How are alarms handled when dozens of assets issue status changes simultaneously?
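The alarm-flood scenario lends itself to a small test harness. The Python sketch below shows one possible approach, assuming alarms carry a site, an asset, a code, a priority, and a timestamp: alarms with the same site and code that arrive within a short window are collapsed into one group, and groups are ordered by urgency. The 5-second window, the field names, and the "1 is most urgent" convention are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Alarm:
    site: str
    asset_id: str
    code: str
    priority: int  # assumed convention: 1 = most urgent
    t: float       # seconds


@dataclass
class AlarmGroup:
    site: str
    code: str
    priority: int
    first_t: float
    asset_ids: List[str] = field(default_factory=list)


def summarize_burst(alarms: List[Alarm], window_s: float = 5.0) -> List[AlarmGroup]:
    """Collapse alarms sharing site and code within window_s of the group's first alarm."""
    groups: List[AlarmGroup] = []
    open_groups: Dict[Tuple[str, str], AlarmGroup] = {}
    for alarm in sorted(alarms, key=lambda a: a.t):
        key = (alarm.site, alarm.code)
        group = open_groups.get(key)
        if group is None or alarm.t - group.first_t > window_s:
            group = AlarmGroup(alarm.site, alarm.code, alarm.priority, alarm.t)
            open_groups[key] = group
            groups.append(group)
        group.asset_ids.append(alarm.asset_id)
        group.priority = min(group.priority, alarm.priority)
    # Most urgent first; ties broken by earliest occurrence.
    return sorted(groups, key=lambda g: (g.priority, g.first_t))


burst = [
    Alarm("site-a", "inv-1", "COMM_LOSS", 3, 10.0),
    Alarm("site-a", "inv-2", "COMM_LOSS", 3, 10.5),
    Alarm("site-a", "brk-1", "BREAKER_TRIP", 1, 11.0),
    Alarm("site-a", "inv-3", "COMM_LOSS", 3, 12.0),
]
for g in summarize_burst(burst):
    print(g.priority, g.code, g.asset_ids)
```

Running vendor platforms against the same synthetic burst gives evaluators a comparable view of how each one presents simultaneous events to an operator.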

Testing should also address upgrade and change management. Can control policies be versioned and rolled out selectively? Is there a rollback mechanism if a new rule set creates unintended behavior? Can operators validate changes in a sandbox before deployment? In scaled microgrid environments, software lifecycle discipline is as important as dispatch logic.
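The versioning, selective rollout, and rollback questions can be sketched as a minimal in-memory store. The Python example below is a simplified illustration under stated assumptions: the class name, the method names, and the rule contents are all hypothetical, and a production system would add persistence, approvals, and sandbox validation before any deployment step.

```python
from typing import Dict, List


class PolicyStore:
    """Hypothetical store tracking immutable policy versions and per-site deployments."""

    def __init__(self) -> None:
        self._versions: Dict[str, dict] = {}
        self._history: Dict[str, List[str]] = {}  # site -> deployed versions, oldest first

    def publish(self, version: str, rules: dict) -> None:
        # Published versions are never overwritten, so a version label
        # always refers to the same rule set.
        if version in self._versions:
            raise ValueError(f"version {version} already published")
        self._versions[version] = dict(rules)

    def deploy(self, site: str, version: str) -> None:
        if version not in self._versions:
            raise KeyError(f"unknown version {version}")
        self._history.setdefault(site, []).append(version)

    def active_version(self, site: str) -> str:
        return self._history[site][-1]

    def rollback(self, site: str) -> str:
        history = self._history[site]
        if len(history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        history.pop()
        return history[-1]


store = PolicyStore()
store.publish("v1", {"min_soc_pct": 20})
store.publish("v2", {"min_soc_pct": 30})
store.deploy("site-a", "v1")
store.deploy("site-b", "v1")
store.deploy("site-a", "v2")          # selective rollout: only site-a gets v2
print(store.active_version("site-a"), store.active_version("site-b"))
print(store.rollback("site-a"))       # site-a returns to v1
```

Keeping deployment history per site is what makes the question "which version of the control logic is active at each site?" answerable at any moment.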

Another useful lens is staffing efficiency. As more sites are added, does the controller reduce operational burden or simply centralize it? A system that requires heavy expert intervention for every exception may not be truly scalable, even if the core algorithm is strong. Operational simplicity is not a secondary benefit. It is a scaling requirement.

How technical evaluators can make better decisions

For technical assessment teams, the most effective approach is to evaluate microgrid controllers against future-state operating requirements rather than current pilot conditions. This means documenting expected growth in asset diversity, site count, resilience needs, utility interactions, reporting needs, and cybersecurity obligations over a multi-year horizon.

From there, build an evaluation framework around six questions. First, can the control architecture separate local resilience functions from supervisory optimization? Second, how open and repeatable is the integration model? Third, what cybersecurity controls exist at both the system and operations levels? Fourth, how well does the platform support observability and root-cause analysis? Fifth, can configuration and policy changes be managed safely at scale? Sixth, what proof exists from comparable deployments, not just test environments?
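The six questions can be turned into a simple weighted scorecard for comparing candidates. The Python sketch below is one possible way to do that. The criterion keys paraphrase the six questions, while the weights and the 1-to-5 scale are purely illustrative assumptions that each team would need to set for its own priorities.

```python
from typing import Dict

# Illustrative weights only; they sum to 1.0 and should be adjusted per project.
CRITERIA_WEIGHTS: Dict[str, float] = {
    "local_vs_supervisory_separation": 0.20,
    "integration_openness": 0.20,
    "cybersecurity_controls": 0.20,
    "observability": 0.15,
    "change_management": 0.15,
    "deployment_evidence": 0.10,
}


def weighted_score(scores: Dict[str, float]) -> float:
    """Combine per-criterion scores (assumed 1 to 5) into a single weighted value."""
    missing = set(CRITERIA_WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(CRITERIA_WEIGHTS[name] * scores[name] for name in CRITERIA_WEIGHTS)


candidate = {
    "local_vs_supervisory_separation": 4,
    "integration_openness": 3,
    "cybersecurity_controls": 4,
    "observability": 2,
    "change_management": 3,
    "deployment_evidence": 5,
}
print(round(weighted_score(candidate), 2))
```

A single number hides trade-offs, so it is best used to structure discussion rather than to replace it. A low score on any one criterion may still be disqualifying regardless of the total.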

Reference projects matter, but they should be interpreted carefully. A successful deployment in one sector or geography does not automatically validate fit elsewhere. Evaluators should look for evidence that the controller has handled similar asset mixes, communication constraints, and uptime expectations. The goal is not just to confirm that it works, but to understand how it behaves under operational stress.

It is also wise to involve multiple stakeholders early. Controls engineers, OT security teams, site operators, enterprise architects, and procurement leaders often evaluate different risk dimensions. Scaled microgrid success depends on aligning those perspectives before platform selection, not after integration problems emerge.

Conclusion: scaling exposes the true quality of a microgrid controller

Once a microgrid system scales up, the controller’s role changes fundamentally. It is no longer enough to coordinate a few distributed energy resources at one site. The platform must support multi-layer control, heterogeneous integration, cyber-resilient operations, structured data visibility, and repeatable expansion across changing conditions.

For technical evaluators, this means the best microgrid controllers are not necessarily those with the most features on paper. The strongest platforms are those built on sound architecture, open interoperability, operational transparency, and disciplined lifecycle management. In other words, scale rewards engineering depth, not presentation polish.

If there is one clear takeaway, it is this: scaling does not simply make the same control problem larger. It creates a different class of problem altogether. Teams that evaluate controllers through that lens are far more likely to choose systems that remain reliable, secure, and valuable as distributed energy portfolios grow.
