Are Smart Factory Solutions Worth It for Mid-Sized Plants?

For mid-sized plants, investing in smart factory solutions can feel like a high-stakes decision between future readiness and budget control. The real question is not whether the technology sounds impressive, but whether it delivers measurable gains in efficiency, visibility, and resilience. This article examines the practical value, cost factors, and strategic trade-offs business evaluators should weigh before moving forward.

What do smart factory solutions actually include for a mid-sized plant?

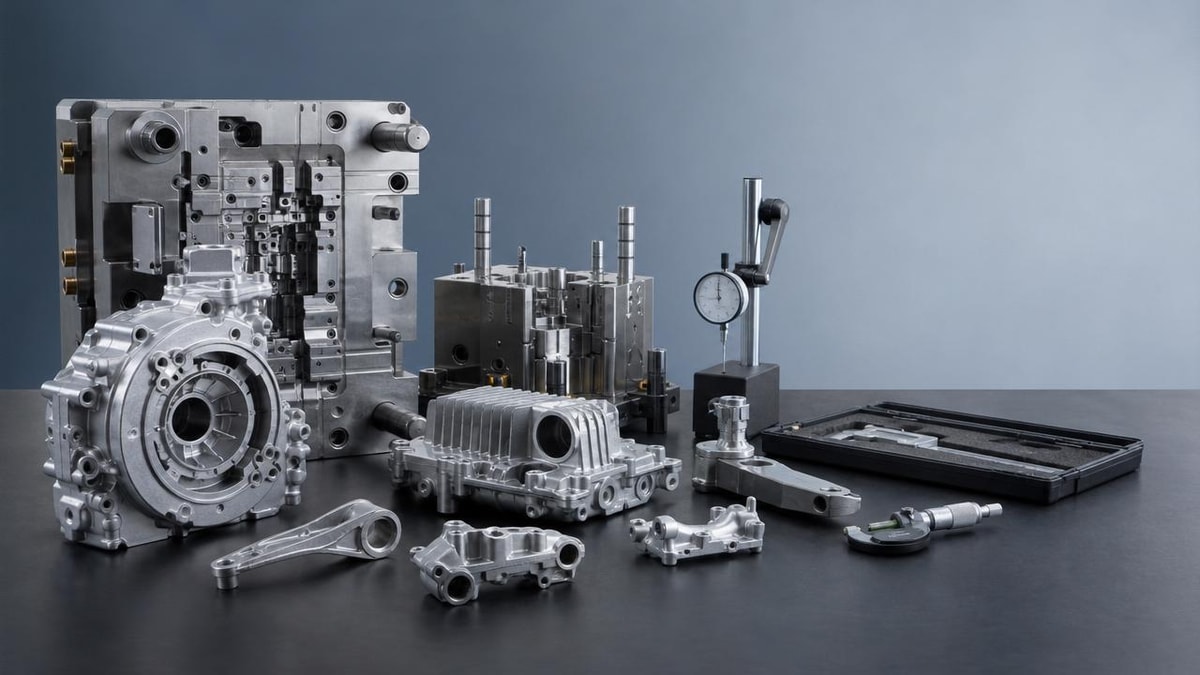

For business evaluators, smart factory solutions should not be reduced to a single software package or a fully automated “lights-out” operation. In most mid-sized plants, the term usually covers a connected stack of tools that improve production visibility, machine utilization, maintenance planning, quality tracking, and inventory coordination. A practical deployment often starts with 3 to 5 connected layers, such as sensors, machine data collection, production dashboards, quality analytics, and ERP or MES integration.

The reason these systems receive attention is simple: many mid-sized plants still rely on manual reporting cycles that run one shift, one day, or even one week behind actual shop-floor conditions. That delay makes it harder to react to downtime, scrap increases, late orders, or component shortages. Smart factory solutions aim to cut that delay from hours or days to minutes, which changes how quickly supervisors and planners can act.

In a broad industrial environment, smart factory solutions are most valuable when they connect operational technology and business decision-making. That means machine alerts must lead to maintenance action, production bottleneck data must inform scheduling, and quality deviations must trigger corrective workflows. Without that operational loop, plants may collect data but still fail to improve output, cost, or service reliability.

Which functions are most common in real deployments?

A realistic smart factory roadmap for a mid-sized operation usually focuses on narrow, measurable use cases first. Instead of digitizing everything at once, many plants prioritize one production line, one utility-intensive process, or one high-scrap area. A phased rollout over 3 to 12 months is generally more manageable than a full-plant transformation launched in a single cycle.

- Machine monitoring for uptime, cycle time, alarms, and stoppage reasons.

- Digital production tracking for work-in-progress, takt adherence, and throughput visibility.

- Quality data capture tied to defects, rework causes, or lot traceability.

- Predictive or condition-based maintenance using vibration, temperature, or runtime thresholds.

- Energy and utility monitoring for compressed air, electricity, and process-intensive equipment.

Mid-sized plants should also distinguish between foundational and advanced capabilities. Foundational functions help create visibility and discipline. Advanced functions add optimization, forecasting, and autonomous decision support. Skipping the foundation often leads to poor return because dashboards cannot compensate for weak process control, inconsistent master data, or disconnected workflows.

A simple way to frame the scope

The table below helps evaluators separate “must-have now” capabilities from “nice-to-scale later” functions when assessing smart factory solutions.

For most mid-sized facilities, the strongest starting point is not maximum automation. It is targeted visibility around the 2 or 3 operational constraints that most often delay shipments, inflate cost, or create planning uncertainty. That is where smart factory solutions begin to show practical value.

Are smart factory solutions worth it for every mid-sized plant?

No, and that is an important answer for evaluators who need disciplined capital allocation. Smart factory solutions are usually worth it when a plant faces recurring loss patterns that manual management cannot fix efficiently. Common examples include chronic downtime, poor schedule adherence, quality variation across shifts, rising labor pressure, or weak traceability across production and warehousing.

A plant running stable, low-mix, low-volume production with predictable labor availability may not need broad deployment immediately. In contrast, a facility handling 50 to 500 SKUs, variable order patterns, or multi-step production routing is far more likely to benefit. The value increases when one disruption can affect customer service levels, expedite costs, or raw material exposure across several departments.

The right question is not “Is this modern?” but “Where is the decision lag hurting us?” If line supervisors wait until end-of-shift reports to discover scrap spikes, or if maintenance teams only respond after failure, the plant is likely paying hidden costs every week. Smart factory solutions become attractive when they shorten those response cycles and convert previously invisible losses into controllable actions.

What conditions usually justify investment?

Business evaluators can use a simple screening method before approving a deeper project review. If at least 3 of the following 5 conditions are present, the plant probably has a valid business case worth quantifying.

- Unplanned downtime causes repeated schedule changes or overtime in more than 2 production areas.

- Production reporting is delayed by one shift or longer, limiting same-day intervention.

- Scrap, rework, or first-pass yield issues are tracked inconsistently across lines.

- Inventory accuracy or WIP visibility is too weak for reliable planning.

- Customer or compliance requirements increasingly demand traceability within hours, not days.

Plants that meet these conditions often recover value through some combination of 2% to 8% higher throughput, lower downtime exposure, tighter quality control, and reduced manual coordination time. Exact gains differ by process type, but the financial logic is usually strongest where lost capacity or quality escapes are already expensive.
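The screening rule above can be expressed as a short checklist script. This is only a minimal sketch: the condition labels and the `screen_plant` function are hypothetical names invented for illustration, and the example answers describe an imaginary plant.

```python
# Hypothetical labels for the five screening conditions listed above.
CONDITIONS = (
    "downtime_disrupts_more_than_2_areas",
    "reporting_delayed_by_a_shift_or_more",
    "quality_losses_tracked_inconsistently",
    "inventory_or_wip_visibility_too_weak",
    "traceability_required_within_hours",
)


def screen_plant(answers, threshold=3):
    """Return (conditions_met, worth_quantifying) for a yes/no questionnaire.

    `answers` maps a condition label to True or False; missing labels count
    as False. The default threshold of 3 follows the rule of thumb above.
    """
    conditions_met = sum(1 for label in CONDITIONS if answers.get(label, False))
    return conditions_met, conditions_met >= threshold


# Illustrative answers for an imaginary plant, not real data.
example_answers = {
    "downtime_disrupts_more_than_2_areas": True,
    "reporting_delayed_by_a_shift_or_more": True,
    "quality_losses_tracked_inconsistently": False,
    "inventory_or_wip_visibility_too_weak": True,
    "traceability_required_within_hours": False,
}
met, proceed = screen_plant(example_answers)
print(f"{met} of {len(CONDITIONS)} conditions met; quantify business case: {proceed}")
```

A result of 3 or more does not approve the project. It only suggests the case is worth quantifying in a deeper review.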

Quick judgment guide for evaluators

The following table is useful during early-stage screening meetings when comparing whether smart factory solutions fit current plant conditions or should be deferred.

This kind of filtering prevents a common mistake: approving a broad digital program before proving that the operation has enough instability, complexity, or cost leakage to justify it. Smart factory solutions are most defensible when linked to a visible operational constraint, not when pursued as a generic modernization effort.

What costs, timelines, and ROI factors should business evaluators examine?

The cost of smart factory solutions depends less on branding and more on scope, integration depth, and process complexity. A small pilot with sensor retrofits and dashboarding may move within a 6- to 12-week timeline, while a multi-line deployment involving MES, ERP interfaces, and traceability workflows can extend to 6 to 12 months. Evaluators should separate initial enablement costs from recurring subscription, support, and change-management costs.

A common budgeting error is underestimating non-software work. Plants often need machine connectivity checks, network segmentation, data mapping, operator training, process redesign, and internal ownership. In practical terms, the internal effort can consume as much attention as the external technology stack during the first 90 to 180 days.

ROI should be modeled around a limited set of measurable drivers. For mid-sized plants, the most credible categories are reduced downtime hours, lower scrap percentage, better labor utilization, improved schedule adherence, and fewer urgent interventions. If a project team cannot define a baseline for at least 3 measurable drivers, the business case is still too vague.
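One way to test whether a business case is specific enough is to write each driver down with a baseline, a target, and a unit cost. The sketch below assumes a simple linear model (annual value equals the reduction multiplied by a cost per unit); the `Driver` class, the function names, and every number are illustrative assumptions rather than benchmarks.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Driver:
    name: str
    baseline: Optional[float]  # current annual quantity; None if not yet measured
    target: Optional[float]    # expected annual quantity after phase one
    unit_cost: float           # cost per unit of that quantity


def annual_value(driver):
    """Estimated yearly saving; zero when baseline or target is missing."""
    if driver.baseline is None or driver.target is None:
        return 0.0
    return (driver.baseline - driver.target) * driver.unit_cost


def case_is_quantified(drivers, minimum=3):
    """True when at least `minimum` drivers have a measured baseline."""
    return sum(1 for d in drivers if d.baseline is not None) >= minimum


# Illustrative figures only.
drivers = [
    Driver("downtime hours", baseline=400, target=320, unit_cost=500.0),
    Driver("scrapped units", baseline=12000, target=10500, unit_cost=4.0),
    Driver("manual reporting hours", baseline=1500, target=900, unit_cost=30.0),
    Driver("expedited shipments", baseline=None, target=None, unit_cost=250.0),
]
total = sum(annual_value(d) for d in drivers)
print(f"quantified: {case_is_quantified(drivers)}, estimated annual value: {total:,.0f}")
```

Drivers without a baseline contribute nothing to the total, which keeps unmeasured hopes out of the financial case.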

Which cost elements are often missed?

Early proposals often make smart factory solutions appear less expensive than they will be in practice because several supporting items are omitted. Evaluators should request a total-cost view covering the first 12 to 24 months, not just initial licensing or hardware.

- Connectivity adaptation for legacy machines that do not expose standardized data easily.

- Cybersecurity hardening, access control, and network policy updates for connected assets.

- Operator and supervisor training across all shifts, not only day-shift leadership.

- Internal process redesign so alerts, dashboards, and workflows actually trigger action.

- Ongoing data governance for naming conventions, event coding, and KPI ownership.

Many mid-sized plants target payback windows in the range of 12 to 24 months for phase-one investments. That threshold is not universal, but it is a useful benchmark. If expected value depends on too many soft assumptions or requires perfect adoption across all functions from day one, the ROI case is probably overstated.
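A rough payback check can make the 12- to 24-month benchmark concrete. The sketch below uses a simple, undiscounted payback calculation, which is an assumption of this example; the function names and all cost figures are hypothetical.

```python
from typing import Optional


def total_cost(upfront_cost, monthly_recurring_cost, months=24):
    """Total cost over the review horizon: one-time plus recurring items."""
    return upfront_cost + monthly_recurring_cost * months


def payback_months(upfront_cost, monthly_benefit, monthly_recurring_cost) -> Optional[float]:
    """Months needed for net monthly benefit to cover the upfront cost.

    Returns None when recurring costs consume the whole benefit, because the
    investment would never pay back under these assumptions.
    """
    net_monthly = monthly_benefit - monthly_recurring_cost
    if net_monthly <= 0:
        return None
    return upfront_cost / net_monthly


# Illustrative phase-one figures, not vendor pricing.
upfront = 120_000.0     # hardware, integration, training, process redesign
recurring = 1_500.0     # subscription and support per month
benefit = 9_000.0       # estimated monthly value from the ROI drivers

months = payback_months(upfront, benefit, recurring)
print(f"24-month total cost: {total_cost(upfront, recurring):,.0f}")
print(f"payback in months: {months}")
print(f"within 24-month benchmark: {months is not None and months <= 24}")
```

Rerunning the calculation with the benefit cut by a third or a half is a quick way to see how much the case depends on optimistic adoption.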

What does a practical ROI lens look like?

A disciplined evaluator can test a proposal by matching each solution component to a clear operational metric, ownership function, and review period.

This framework helps evaluators avoid vague promises. If the supplier or project team cannot connect smart factory solutions to operational metrics that finance and plant leadership both recognize, the proposal needs further refinement before approval.

What are the biggest risks and misconceptions before implementation?

One of the biggest misconceptions is that more data automatically produces better decisions. In reality, smart factory solutions only create value when data is clean enough, timely enough, and tied to defined actions. Plants that add dashboards without assigning response rules often end up with more screens but little operational improvement.

Another risk is trying to digitize a broken process without stabilizing it first. If downtime coding is inconsistent, quality checks vary by operator, or work instructions are not standardized, the system may simply capture inconsistent inputs faster. That weakens trust in the project and can stall adoption once the first 60 to 90 days have passed.

Cybersecurity and system governance also matter more than many plants expect. Connected assets expand the digital attack surface and create responsibility around user permissions, patching, network segmentation, and vendor access. Business evaluators should verify that implementation planning includes IT and OT coordination rather than leaving security for a later phase.

Which warning signs should trigger caution?

Before approving smart factory solutions, evaluators should examine whether the project is being driven by a defined business problem or by technology enthusiasm alone. Several warning signs suggest weak readiness.

- No agreed baseline exists for OEE, scrap, downtime, or traceability performance.

- Plant leadership expects full-plant transformation in under 3 months.

- The proposal relies heavily on future AI benefits before foundational data is stable.

- Operations, maintenance, quality, and IT have not aligned on ownership roles.

- There is no plan for training temporary staff, night shift, or new line supervisors.

These risks do not mean the investment should stop. They mean the plant may need a narrower first phase. Many successful programs begin with one line, one cell, or one utility system because that creates a manageable proof point. Once users see measurable gains over 8 to 16 weeks, organizational support tends to improve.

How can risk be reduced without slowing progress too much?

The most effective way to de-risk smart factory solutions is to stage the rollout in controlled steps. First define the operational pain point, then validate data capture, then train users, and only after that scale integration. This sequence is slower than a marketing demo but usually faster than recovering from a failed full-scope launch.

Business evaluators should also require exit criteria for each phase. For example, phase one may need 95% data capture reliability, a dashboard delay under 15 minutes, or a documented reduction in manual reporting time before additional budget is released. That protects capital while keeping the project commercially grounded.
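Exit criteria are easier to enforce when they are written as explicit checks. The sketch below treats the three example thresholds as if all of them were required, which is a stricter reading chosen for illustration; the function and field names are hypothetical.

```python
def phase_gate(capture_reliability, dashboard_delay_minutes,
               reporting_hours_before, reporting_hours_after):
    """Evaluate example phase-one exit criteria and return each result."""
    return {
        # At least 95% of expected machine events were actually captured.
        "data_capture_reliable": capture_reliability >= 0.95,
        # Dashboards lag the shop floor by less than 15 minutes.
        "dashboard_delay_ok": dashboard_delay_minutes < 15,
        # Manual reporting effort is lower than before the pilot.
        "manual_reporting_reduced": reporting_hours_after < reporting_hours_before,
    }


def release_next_budget(results):
    """Release further budget only when every criterion passes."""
    return all(results.values())


# Illustrative pilot measurements, not real data.
results = phase_gate(
    capture_reliability=0.97,
    dashboard_delay_minutes=8,
    reporting_hours_before=40,
    reporting_hours_after=22,
)
print(results)
print("release next phase budget:", release_next_budget(results))
```

Returning each criterion separately, rather than a single pass or fail, shows the steering group exactly which condition is holding up the next phase.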

How should a mid-sized plant choose the right smart factory solutions?

Selection should begin with operational fit, not feature volume. The best smart factory solutions for a mid-sized plant are usually those that can integrate with existing equipment, support phased deployment, and present KPIs clearly to plant teams who need action-oriented visibility. A solution that is technically impressive but too complex for line-level adoption often struggles to generate sustained return.

Evaluators should map supplier proposals against current-state constraints. How many machine types need to connect? How many shifts need to use the system? Does the plant need lot traceability, recipe control, maintenance alerts, or all of them? The more precisely these needs are defined, the easier it becomes to compare vendors and avoid overbuying.

It is also wise to examine scalability in realistic terms. A plant may begin with 20 assets and expand to 80 within 18 months. If licensing, connectivity, or support costs rise sharply at that point, the initial proposal may be less attractive than it first appears. Mid-sized plants should ask not only “Can this work now?” but also “Can this expand without operational disruption?”
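The scaling question can be tested with a small cost projection. The sketch below assumes a hypothetical pricing structure, a flat per-asset license plus a support fee that steps up by tier, purely to show how cost per asset can rise between 20 and 80 assets; real vendor pricing models vary and every figure here is invented.

```python
def annual_cost(assets, per_asset_fee, support_tiers):
    """Annual cost under an assumed license-plus-tiered-support model.

    `support_tiers` is a list of (max_assets, annual_support_fee) pairs
    sorted by ascending max_assets.
    """
    for max_assets, support_fee in support_tiers:
        if assets <= max_assets:
            return assets * per_asset_fee + support_fee
    raise ValueError("asset count exceeds the largest quoted tier")


# Illustrative quote: 600 per asset, with support jumping above 25 assets.
PER_ASSET_FEE = 600.0
SUPPORT_TIERS = [(25, 5_000.0), (100, 30_000.0)]

for asset_count in (20, 80):
    cost = annual_cost(asset_count, PER_ASSET_FEE, SUPPORT_TIERS)
    print(f"{asset_count} assets: {cost:,.0f} per year, "
          f"{cost / asset_count:,.0f} per asset")
```

In this invented example the cost per asset rises from 850 to 975 after expansion, which is the kind of step change worth asking vendors to quote up front.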

What questions should evaluators ask vendors or solution partners?

A structured discussion can reveal whether a provider understands mid-market realities or is simply pushing a standard enterprise package. The following checklist helps keep evaluation focused on execution, cost control, and risk visibility.

- Which machine protocols and legacy environments can be supported without major custom development?

- What is the expected timeline for pilot deployment, user training, and first KPI reporting?

- How is data accuracy validated during the first 30 to 60 days?

- What internal roles must the plant assign for operations, IT, quality, and maintenance ownership?

- How are cybersecurity responsibilities divided between the provider and the plant?

- What expansion path is recommended after the first proof-of-value stage?

Strong selection processes also include a use-case demonstration tied to plant conditions. Generic dashboards are not enough. Evaluators should ask to see how the smart factory solutions would handle an actual downtime event, a quality deviation, or a traceability query. Practical workflow fit matters more than polished interface design.

What is a sensible final decision framework?

Before approval, a mid-sized plant should be able to answer five final questions clearly: What problem are we solving first? What metric will prove success? What is the phase-one timeline? What total 12- to 24-month cost should we expect? Who owns daily use after go-live? If any of these remain unclear, the decision is not yet mature.

In many cases, smart factory solutions are worth it not because they promise a futuristic plant, but because they make ordinary operations more visible, predictable, and resilient. For business evaluators, that is the true investment logic: better control of existing complexity, not technology for its own sake.

What should you confirm before requesting proposals or moving to supplier discussions?

Before entering formal sourcing or supplier comparison, gather a short internal decision pack. It should include current operational pain points, machine inventory, integration requirements, target KPIs, expected timeline, and governance roles. Even a 2- to 4-page internal brief can sharply improve proposal quality and reduce mismatch in scope.

For companies evaluating smart factory solutions across multiple sectors or mixed production environments, outside market intelligence can also help. Comparative insight into vendor positioning, deployment patterns, and common implementation trade-offs is especially useful when procurement, operations, and IT do not yet share the same decision criteria.

At TradeNexus Pro, we support enterprise decision-makers who need a clearer view of industrial technologies, supply-side capabilities, and strategic fit across advanced manufacturing and related sectors. If you are assessing smart factory solutions, the most productive next step is to clarify scope before discussing price alone.

Why contact us?

We help business evaluators and sourcing teams frame the right commercial and technical questions before supplier engagement. That includes support around parameter confirmation, solution selection logic, realistic deployment cycles, integration priorities, and quotation comparison across multiple vendors or deployment models.

If you need to review implementation scope, compare phased versus full deployment, confirm delivery timelines, or discuss custom requirements such as traceability, machine connectivity, or reporting depth, contact us for a more structured evaluation path. Clear early-stage alignment often saves far more than it costs in later rework, overbuying, or delayed ROI.
