What Slows ROI After Installing Collaborative Robots?

Installing collaborative robots does not guarantee fast returns. For financial decision-makers, ROI often slows when hidden integration costs, weak process redesign, underused capacity, and unclear performance metrics are ignored. This article explains why collaborative robots may take longer to pay back than expected and what leaders can do to protect capital efficiency, improve deployment outcomes, and turn automation investments into measurable business value.

For CFOs, procurement leaders, and capital approval teams, the issue is rarely whether collaborative robots can work. The real question is how quickly they can create measurable cash impact after installation. In many factories, warehouses, electronics assembly cells, healthcare device lines, and packaging operations, the first 3 to 12 months determine whether automation strengthens margins or becomes a budget drag.

A collaborative robot project often looks attractive at the equipment quotation stage, yet total business performance depends on cycle time, uptime, labor reallocation, scrap reduction, training adoption, and data visibility. When these variables are underestimated, payback can stretch from an expected 12 months to 24 months or more. That gap matters to any financial approver managing capital discipline across multiple operational priorities.

Hidden Costs That Delay Collaborative Robot Payback

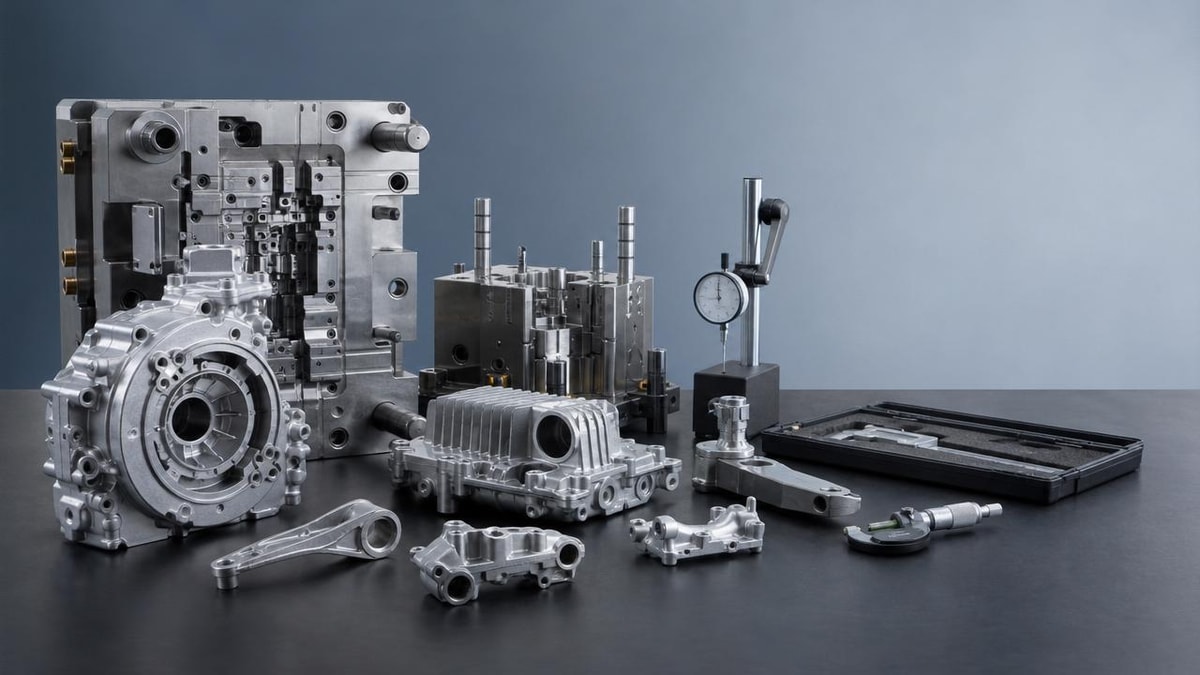

The purchase price of collaborative robots is only one part of the investment. In many deployments, the robot arm represents 35% to 55% of the total project cost once end-of-arm tooling, safety review, fixturing, software configuration, conveyors, sensors, vision systems, and integration labor are included. Financial teams that approve capital based only on the base unit price often receive a distorted ROI forecast.
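As a rough illustration of that cost share, the sketch below backs out the total project cost implied by a quoted arm price. The 35% to 55% share comes from the paragraph above; the function name and the 40,000 quote are hypothetical placeholders, not vendor figures.

```python
def implied_total_cost(arm_price, share_low=0.35, share_high=0.55):
    """Return (low, high) total project cost implied by the arm's cost share."""
    if not 0 < share_low <= share_high <= 1:
        raise ValueError("shares must satisfy 0 < share_low <= share_high <= 1")
    # A higher arm share means a smaller total, so the bounds cross over.
    return arm_price / share_high, arm_price / share_low


# Hypothetical quote: 40,000 for the arm and controller.
low, high = implied_total_cost(40_000)
print(f"Implied total project cost: {low:,.0f} to {high:,.0f}")
```

Under these assumptions, a 40,000 quote implies a total of roughly 72,727 to 114,286, which is the figure an ROI forecast should start from.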

Another common issue is underestimating downtime during commissioning. Even a relatively simple pick-and-place or screwdriving application may require 2 to 6 weeks of setup, testing, and adjustment before stable throughput is reached. If production interruption is not modeled into the business case, the return curve starts later than expected, especially in operations with high daily output targets or limited shift flexibility.
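The effect of a late-starting return curve can be sketched with a simple weekly cumulative model. This is a minimal illustration, assuming a flat weekly benefit once the cell is stable and a reduced (here zero) benefit during commissioning; the function name and all figures are hypothetical.

```python
def payback_week(investment, weekly_benefit, ramp_weeks=0, ramp_output=0.0,
                 max_weeks=520):
    """Return the first week in which cumulative benefit covers the investment.

    ramp_output is the fraction of the weekly benefit realized during the
    commissioning weeks (0.0 means no benefit until stable throughput).
    Returns None if payback is not reached within max_weeks.
    """
    cumulative = 0.0
    for week in range(1, max_weeks + 1):
        share = ramp_output if week <= ramp_weeks else 1.0
        cumulative += weekly_benefit * share
        if cumulative >= investment:
            return week
    return None


# Hypothetical project: 104,000 invested, 2,000 of benefit per stable week.
print(payback_week(104_000, 2_000))                # no ramp-up modeled
print(payback_week(104_000, 2_000, ramp_weeks=6))  # 6 weeks of commissioning
```

With these inputs, the model returns 52 weeks without ramp-up and 58 weeks with six commissioning weeks. It ignores lost production during setup, which would push payback out further.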

Support costs also accumulate after go-live. Operators may need 8 to 20 hours of initial training, supervisors need exception-handling guidance, and maintenance teams need spare parts planning. In sectors such as smart electronics and healthcare technology, validation requirements can add further review cycles. These costs are not always large individually, but together they materially slow the realized value of collaborative robots.

Where budget assumptions usually break

Finance teams can protect capital efficiency by separating visible and invisible cost layers before approval. A robust total-cost view should include direct equipment, process engineering, digital integration, labor transition, and ramp-up loss. This is particularly relevant in multi-site enterprises where one underestimated project can distort capital benchmarking for the next 2 or 3 automation approvals.

- Base robot and controller cost, usually the easiest item to quote and compare.

- Peripheral components such as grippers, vision, feeders, guarding modifications, and fixtures.

- Engineering and integration effort, including PLC or MES connection, testing, and debugging.

- Training, change management, preventive maintenance planning, and spare parts stocking.
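The cost layers above can be laid out as a simple budget structure to show how much of the project sits outside the base quote. The figures below are hypothetical placeholders chosen only to illustrate the calculation, and the layer names are shorthand for the categories listed.

```python
# Hypothetical cost layers for one cell (all amounts are illustrative).
cost_layers = {
    "base_robot_and_controller": 40_000,
    "peripheral_components": 18_000,
    "engineering_and_integration": 22_000,
    "training_and_maintenance_setup": 8_000,
    "ramp_up_loss": 12_000,
}


def cost_summary(layers, visible_key="base_robot_and_controller"):
    """Return the total cost and the share that is visible in the base quote."""
    total = sum(layers.values())
    visible_share = layers[visible_key] / total
    return {
        "total": total,
        "visible_share": visible_share,
        "hidden_share": 1 - visible_share,
    }


summary = cost_summary(cost_layers)
print(f"Total: {summary['total']:,}")
print(f"Visible in base quote: {summary['visible_share']:.0%}")
print(f"Outside base quote: {summary['hidden_share']:.0%}")
```

In this example the base quote covers 40% of a 100,000 project, so an approval built on the quote alone would miss the remaining 60%.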

The table below shows a practical budgeting framework that finance approvers can use to challenge overly optimistic assumptions before authorizing collaborative robots.

The key financial takeaway is simple: collaborative robots do not underperform only when the technology is weak. They also underperform when the cost envelope is incomplete. A stronger approval model treats deployment as a system investment, not a standalone asset purchase.

Weak Process Redesign Is One of the Biggest ROI Killers

Many companies install collaborative robots into processes that were originally built around human flexibility, informal workarounds, and variable material flow. If the surrounding workflow stays unchanged, the robot simply automates a broken sequence. The result is a more expensive version of the old process, not a more profitable one.

This problem is especially visible in advanced manufacturing and smart electronics, where small inconsistencies in part presentation, tray orientation, or torque sequence can reduce robot efficiency by 10% to 25%. In healthcare technology production, validation rules may restrict on-the-fly adjustments, making poor initial process design even more expensive after installation.

Financial decision-makers should ask whether the team redesigned the process around automation, not whether a collaborative robot can technically perform the task. Questions about takt time, feeder logic, buffer sizing, rework handling, and shift utilization matter more to ROI than promotional claims about ease of deployment.

Operational signs that process design is limiting returns

A collaborative robot often misses ROI targets when operators still spend too much time loading parts, clearing jams, confirming quality manually, or moving work-in-progress between disconnected stations. These hidden handling steps reduce automation density and prevent the business from converting technical capability into financial output.

Four process checks before capital approval

- Confirm that input parts arrive with stable orientation and tolerances, ideally with defect rates low enough to avoid constant exception handling.

- Map the complete labor content per cycle, including walking, inspection, rework, and waiting time, not just direct touch time.

- Verify that upstream and downstream equipment can sustain at least 1.2x the projected robot pace.

- Model failure modes such as feeder empty events, sensor faults, and product variation before commissioning begins.
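The third check in the list above lends itself to a quick screen. The sketch below assumes throughput is expressed in units per hour and uses the 1.2x margin from the checklist; the station names and rates are hypothetical.

```python
def sustains_robot_pace(robot_units_per_hour, neighbor_rates, margin=1.2):
    """Flag whether each neighboring station can run at margin x robot pace."""
    required = robot_units_per_hour * margin
    return {name: rate >= required for name, rate in neighbor_rates.items()}


# Hypothetical cell: robot planned at 100 units/hour.
result = sustains_robot_pace(
    100,
    {"upstream_feeder": 130, "downstream_packer": 110},
)
print(result)
```

Here the feeder clears the 120 units/hour requirement while the packer does not, which suggests the packer would cap the cell before the robot does.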

When process redesign is handled early, collaborative robots are more likely to reduce labor cost per unit, improve consistency, and free staff for higher-value roles. When redesign is skipped, the robot becomes dependent on human intervention, and the approved payback logic starts to erode from day one.

Underused Capacity Turns Good Technology Into Poor Capital Performance

A frequent reason ROI slows after installing collaborative robots is that the asset is not used enough hours per day. A robot approved on a two-shift utilization model but operated for only one shift can lose 30% to 50% of its expected annual value contribution. For finance teams, utilization risk is often more important than nominal labor savings.

This issue appears in seasonal operations, pilot lines, mixed-model production, and plants with unstable order volumes. In supply chain and packaging environments, the robot may sit idle when product mix changes faster than the cell can be reconfigured. In electronics and medical device assembly, limited SKU standardization can produce the same result.

Collaborative robots usually create the strongest economics when they support repetitive work with consistent demand, stable part presentation, and at least 12 to 16 productive hours per day. If expected utilization falls below that level, either the business case should be revised or the deployment model should be broadened to include multiple tasks across the week.

A simple utilization screen for finance reviewers

Before approval, it helps to compare planned operating reality with projected operating assumptions. The table below highlights common utilization gaps that slow returns from collaborative robots.

The practical conclusion is that collaborative robots should be evaluated like productive capacity, not like a static piece of equipment. Higher utilization compresses payback. Low utilization extends it, even when technical performance is acceptable.
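A minimal sketch of that relationship follows, assuming value accrues linearly with productive hours. The value per hour, operating days, and investment are hypothetical; a real model would also account for fixed support costs and changeover time.

```python
def annual_value(hours_per_day, operating_days=250, value_per_hour=20.0):
    """Annual value contribution under a linear value-per-hour assumption."""
    return hours_per_day * operating_days * value_per_hour


def simple_payback_months(investment, hours_per_day, **kwargs):
    """Undiscounted payback in months for a given daily utilization."""
    return 12 * investment / annual_value(hours_per_day, **kwargs)


# Hypothetical 100,000 project approved on two shifts, operated on one.
two_shift = simple_payback_months(100_000, hours_per_day=16)
one_shift = simple_payback_months(100_000, hours_per_day=8)
print(f"Two shifts: {two_shift:.0f} months, one shift: {one_shift:.0f} months")
```

Under this linear assumption, halving the operating hours doubles the payback period from 15 to 30 months, even though the robot performs identically in both cases.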

For multi-site organizations, one of the best ways to improve returns is to standardize a robot cell architecture that can be replicated across 3 to 5 similar processes. Shared tooling logic, reusable programs, and common training materials reduce both deployment time and idle risk.

Poor KPI Design Makes ROI Hard to Prove and Harder to Improve

Some collaborative robot projects fail financially not because returns are absent, but because returns are poorly measured. If the only metric tracked is labor headcount reduction, the business misses quality gains, throughput stability, overtime avoidance, ergonomic improvement, and scrap reduction. In capital review meetings, unmeasured value often becomes invisible value.

A weak KPI framework also creates ownership confusion. Operations may focus on uptime, engineering may focus on technical completion, and finance may focus on payback. Without a shared scorecard, the project can be declared successful at the plant level while still underperforming on capital efficiency. This disconnect is common in the first 90 to 180 days after installation.

The most useful KPI sets combine pre-installation baselines with post-installation monitoring. That means comparing output per hour, first-pass yield, labor minutes per unit, overtime cost, downtime minutes, and rework frequency over the same product family. Collaborative robots should be judged against a disciplined baseline, not a rough estimate built from memory.

Metrics finance teams should require

A practical KPI structure should capture both direct and indirect return drivers. It should also define when the project moves from ramp-up mode to normal operating mode, typically after 30, 60, or 90 days, depending on process complexity.

- Throughput: units per hour before and after collaborative robot deployment.

- Labor efficiency: direct labor minutes per unit and redeployed labor hours per shift.

- Quality: first-pass yield, defect escapes, and rework incidents per 1,000 units.

- Reliability: uptime percentage, mean time between stoppages, and average recovery time.

- Financial impact: overtime reduction, scrap cost reduction, and payback progress by quarter.
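One way to keep the baseline comparison disciplined is to record the same fields before and after deployment and compute the change mechanically. The sketch below covers a subset of the metrics listed above; the class name, field names, and sample values are hypothetical, and it assumes every baseline value is nonzero.

```python
from dataclasses import dataclass, asdict


@dataclass
class KpiSnapshot:
    units_per_hour: float
    labor_minutes_per_unit: float
    first_pass_yield: float
    downtime_minutes: float


def percent_change(baseline, current):
    """Percent change of each KPI versus baseline, rounded to one decimal.

    Assumes nonzero baseline values; a zero baseline raises ZeroDivisionError.
    """
    base, now = asdict(baseline), asdict(current)
    return {
        name: round((now[name] - base[name]) / base[name] * 100, 1)
        for name in base
    }


# Hypothetical readings for the same product family.
before = KpiSnapshot(80, 3.0, 0.95, 400)
after = KpiSnapshot(92, 2.4, 0.97, 300)
changes = percent_change(before, after)
for name, delta in changes.items():
    print(f"{name}: {delta:+.1f}%")
```

Whether a change is favorable depends on the metric: higher throughput and yield are improvements, while labor minutes and downtime should fall.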

When these KPIs are reviewed monthly, leaders can detect whether collaborative robots need process refinement, tooling changes, or scheduling adjustments. Strong measurement not only proves ROI but accelerates it by exposing where value leakage is happening.

How to Build a Faster and More Defensible Business Case

For financial approvers, the goal is not to reject collaborative robots. It is to approve them under conditions that increase the probability of a timely and measurable return. A stronger business case begins with application fit. Repetitive tasks with stable volumes, moderate precision requirements, and recurring labor pressure usually offer the best first-wave opportunities.

Decision-makers should also compare full-scope deployment models instead of isolated equipment bids. The lowest upfront quote may lead to the highest total cost if it excludes integration accountability, training support, post-launch optimization, or performance review. In many cases, a project that costs 10% more upfront can reach stable output 2 to 4 months earlier and therefore create better financial results.
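The trade-off between upfront price and time to stable output can be checked with a simple comparison. This sketch assumes no benefit is realized before stable output and a flat monthly benefit afterwards; both bids and all amounts are hypothetical.

```python
def net_position(investment, monthly_benefit, months_to_stable,
                 horizon_months=24):
    """Undiscounted net position at the horizon, counting only stable months."""
    productive_months = max(0, horizon_months - months_to_stable)
    return monthly_benefit * productive_months - investment


# Hypothetical bids: B costs 10% more but stabilizes 3 months earlier.
bid_a = net_position(100_000, 10_000, months_to_stable=5)
bid_b = net_position(110_000, 10_000, months_to_stable=2)
print(f"Bid A net at 24 months: {bid_a:,}")
print(f"Bid B net at 24 months: {bid_b:,}")
```

With these inputs, the more expensive bid ends the 24-month horizon 20,000 ahead (110,000 versus 90,000), because three extra productive months outweigh the 10,000 price difference.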

A disciplined approval process usually includes a phased gate structure. Phase 1 validates the process and baseline data. Phase 2 confirms the technical solution and total cost. Phase 3 reviews ramp-up performance and actual KPI movement. This 3-stage approach improves control without slowing good projects unnecessarily.

Recommended procurement and approval checklist

The following checklist can help enterprise buyers and finance teams strengthen approval quality for collaborative robots across manufacturing, electronics, healthcare technology, and related industrial applications.

- Define the exact task scope, cycle target, shift pattern, and exception frequency before requesting quotations.

- Request total project costing, including tooling, integration, software, training, and the first 12 months of support.

- Validate process readiness through a trial, simulation, or pilot run using real parts and realistic throughput assumptions.

- Set 5 to 7 KPIs with baseline values, target ranges, and review dates at 30, 60, and 90 days after launch.

- Plan labor redeployment explicitly so savings are captured rather than absorbed into operational ambiguity.
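The review cadence in the checklist can be fixed at approval time rather than left open. The sketch below derives the 30, 60, and 90 day review dates from a launch date; the launch date shown is a hypothetical example.

```python
from datetime import date, timedelta


def review_dates(launch, offsets_days=(30, 60, 90)):
    """Return the KPI review dates at fixed day offsets after launch."""
    return [launch + timedelta(days=offset) for offset in offsets_days]


# Hypothetical go-live date.
schedule = review_dates(date(2025, 1, 6))
for review in schedule:
    print(review.isoformat())
```

For a go-live on 2025-01-06, the reviews fall on 2025-02-05, 2025-03-07, and 2025-04-06.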

In capital-intensive sectors, this approach supports faster internal alignment between operations, engineering, procurement, and finance. It also helps enterprises avoid approving collaborative robots for low-volume or unstable processes where the economics are weak from the start.

FAQ for financial decision-makers

How long should collaborative robot payback usually take?

In many industrial settings, a realistic payback window falls between 12 and 24 months, depending on utilization, labor substitution, and integration complexity. Projects promising very short returns should be tested against downtime, training, and changeover assumptions before approval.

What types of tasks usually create the best returns?

Tasks with repeatable motion, stable parts, and consistent daily demand usually perform best. Examples include pick-and-place, packaging assistance, screwdriving, inspection handling, and machine tending. Highly variable artisan work or low-volume, high-mix tasks often require a more cautious business case.

What is the biggest budgeting mistake?

The most common mistake is treating the robot purchase as the main cost and ignoring the 45% to 65% of project effort that may sit in tooling, integration, process redesign, and ramp-up. This creates a payback model that looks attractive in procurement but weakens rapidly after installation.

Collaborative robots can absolutely create strong returns, but only when the investment is evaluated as an operational system rather than a standalone machine. Hidden costs, weak process redesign, low utilization, and poor KPI discipline are the four most common reasons ROI slows after installation. Each of these risks can be identified before capital is committed.

For enterprise buyers and finance leaders, the most effective strategy is to combine realistic cost modeling, application-fit screening, phased deployment control, and post-launch measurement. That approach improves forecast accuracy and makes automation spending more defensible across business units and budget cycles.

If your team is evaluating collaborative robots across advanced manufacturing, smart electronics, healthcare technology, or supply chain operations, TradeNexus Pro can help you assess deployment logic, supplier options, and capital risk with deeper market intelligence. Contact us to explore tailored insights, compare solution pathways, and identify automation opportunities that protect ROI from day one.
