Why Digital Twin Manufacturing Projects Stall After Modeling

Many digital twin manufacturing initiatives look promising at the modeling stage, yet lose momentum when teams face integration gaps, unclear ownership, and weak ROI alignment. For project leaders, the real challenge is not building the model but turning it into an operational decision tool. This article explores why progress stalls after modeling and what managers can do to move from concept to measurable plant-level value.

Why stalling after modeling is becoming a visible industry pattern

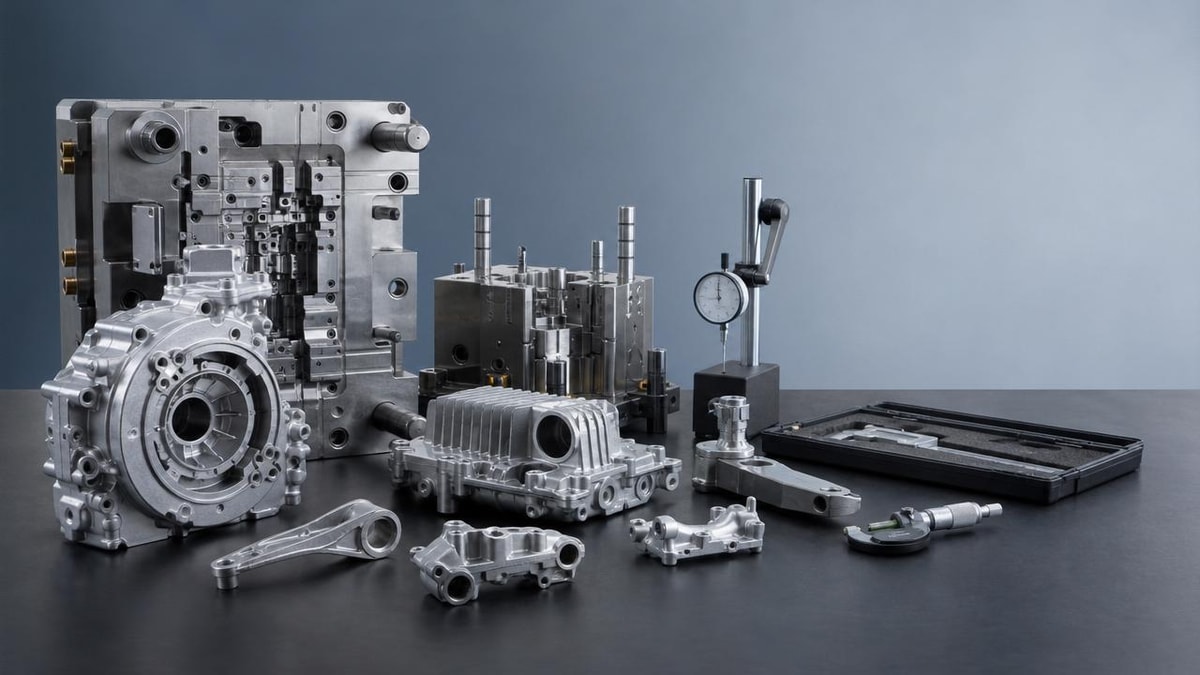

Across advanced production environments, digital twin manufacturing has shifted from a pilot-stage innovation topic to a board-level operations discussion. Over the last 3 to 5 years, many companies have invested in 3D models, simulation layers, and equipment dashboards, expecting faster planning, lower downtime, and more resilient output. Yet a recurring signal has emerged: the model is completed, the demo works, but plant teams do not use it consistently in daily decisions.

This pattern matters because the business case for digital twin manufacturing is increasingly tied to execution speed rather than visualization quality. In multi-site operations, project leaders are being asked to show value within 6 to 12 months, not in an open-ended transformation cycle. If the twin cannot support scheduling, maintenance, throughput analysis, energy optimization, or changeover planning, it stays trapped as a technical asset instead of becoming an operational system.

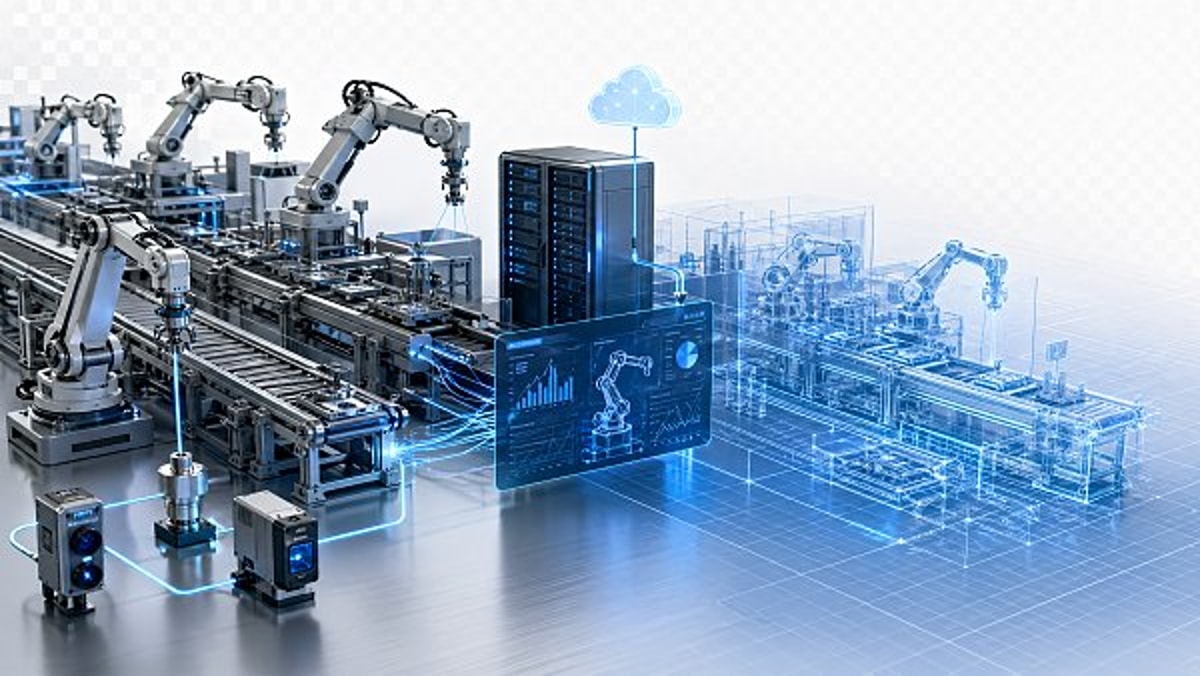

The trend is especially relevant in sectors with mixed legacy infrastructure, contract manufacturing pressures, and volatile supply chain conditions. In these settings, managers do not need yet another standalone digital layer. They need a reliable decision environment that connects engineering models with live plant behavior, ERP signals, MES records, and maintenance workflows, refreshed at the frequency each decision requires, which often ranges from near-real-time to daily batch updates.

What has changed in project expectations

Earlier programs often treated digital twins as a design or innovation initiative. Today, executive teams expect deployment outcomes tied to OEE improvement, scrap reduction, lead-time compression, and energy efficiency. That shift raises the implementation bar. A visually accurate model is no longer enough if it cannot support production decisions across 2 to 4 critical workflows, such as asset utilization, bottleneck tracing, maintenance forecasting, or line balancing.

At the same time, procurement and project governance have become stricter. Technology budgets are under review, and cross-functional sponsors want measurable adoption milestones by quarter. This means digital twin manufacturing projects are now judged less by technical novelty and more by whether operators, planners, reliability engineers, and plant managers can use outputs without constant analyst support.

For project management leaders, the implication is clear: stalling after modeling is not a rare implementation error. It is a structural risk that grows when the operating model, data architecture, and ownership model are designed after the model instead of before it.

The following table summarizes the common shift in digital twin manufacturing expectations now seen across industrial programs.

The table highlights a key change in market direction. What used to be accepted as a successful pilot is now considered incomplete unless it influences plant decisions, resource allocation, or measurable operating performance.

The real reasons digital twin manufacturing loses momentum after the model is built

The most common cause of stalled progress is not poor software alone. It is a mismatch between the twin’s technical design and the plant’s operational logic. Many teams build a sophisticated representation of assets, but fail to define how often data must refresh, who validates exceptions, and which business decisions the twin should improve. Without those rules, the model remains informative but not actionable.

A second cause is fragmented data readiness. In practice, digital twin manufacturing depends on a chain of connected systems: PLC or sensor data, historian records, MES transactions, maintenance logs, quality events, and ERP planning signals. If even 1 or 2 of these sources are inconsistent, delayed, or incomplete, the twin starts to drift from real conditions. Once users notice the gap, trust drops quickly, and adoption often falls within a few operating cycles.
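One way to make this drift visible before users notice it is to compare twin output against measured plant values on a regular basis. The sketch below is a minimal illustration, not a prescribed method: the function name `twin_drift`, the sample throughput figures, and the 5% tolerance are all assumptions chosen for the example.

```python
from statistics import mean

def twin_drift(twin_values, plant_values):
    """Mean absolute percentage error between twin output and plant measurements."""
    if not twin_values or len(twin_values) != len(plant_values):
        raise ValueError("series must be non-empty and of equal length")
    errors = [
        abs(t - p) / abs(p)
        for t, p in zip(twin_values, plant_values)
        if p != 0  # skip zero readings to avoid division by zero
    ]
    if not errors:
        raise ValueError("no non-zero plant readings to compare against")
    return mean(errors)

# Hypothetical hourly throughput (units/hour): twin prediction vs. MES count.
twin = [100, 102, 98, 101]
mes = [100, 96, 90, 88]

TOLERANCE = 0.05  # assumed 5% tolerance; set per use case
drift = twin_drift(twin, mes)
status = "REVIEW" if drift > TOLERANCE else "OK"
print(f"drift={drift:.1%} status={status}")
```

A check like this only helps if someone owns the response when the status turns to review, which is the ownership question raised next.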

A third issue is ownership ambiguity. Engineering may own the model, IT may own the integration stack, and operations may be expected to use outputs without controlling the logic behind them. When no single leader is responsible for business adoption, issue prioritization slows down. Questions that should be resolved within 48 to 72 hours can sit for weeks, particularly when each function assumes another team is accountable.

Where projects usually break between pilot and scale

The break often happens at the handoff from model validation to workflow integration. During the pilot, project teams can tolerate manual data uploads, analyst support, and narrow use cases. At scale, those workarounds fail. A twin that needs frequent manual correction cannot support 24/7 production environments, multi-shift decision-making, or network-wide deployment across several sites.

Another break point is scenario relevance. Some twins are built around what the technology can simulate rather than what plant teams need to decide each day. If the twin can show airflow, machine motion, or asset geometry but cannot answer practical questions about buffer levels, changeover time, preventive maintenance windows, or line constraints, operational teams stop engaging.

The final break point is ROI framing. When value is defined too broadly, such as “improve visibility” or “support smart factory goals,” the project loses urgency. Project leaders need 3 to 5 narrow economic targets, each linked to a baseline, review cadence, and owner. Without that structure, digital twin manufacturing becomes difficult to defend when budgets tighten.
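The structure described above can be sketched as a simple target register that refuses to treat a goal as defensible until it has a baseline, a target, an owner, and a review cadence. The class name `RoiTarget`, its fields, and the sample entries are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class RoiTarget:
    name: str
    unit: str
    baseline: Optional[float] = None
    target: Optional[float] = None
    owner: Optional[str] = None
    review_days: Optional[int] = None

    def gaps(self):
        """List the elements still missing before this target can be tracked."""
        missing = []
        if self.baseline is None:
            missing.append("baseline")
        if self.target is None:
            missing.append("target")
        if not self.owner:
            missing.append("owner")
        if not self.review_days:
            missing.append("review cadence")
        return missing

# Hypothetical entries: one well-framed target, one framed too broadly.
targets = [
    RoiTarget("Unplanned downtime, Line 3", "hours/month",
              baseline=42.0, target=35.0,
              owner="Reliability lead", review_days=30),
    RoiTarget("Improve visibility", "n/a"),
]

for t in targets:
    gaps = t.gaps()
    print(t.name, "->", "ready" if not gaps else "missing: " + ", ".join(gaps))
```

The second entry fails every check, which is the practical meaning of a target that is defined too broadly.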

High-friction barriers managers should test early

- Data latency exceeds the use case requirement, such as 24-hour refresh for a problem that needs 15-minute visibility.

- Asset naming conventions differ between engineering files, MES records, and maintenance systems, blocking reliable mapping.

- No formal owner exists for model accuracy after commissioning, leading to silent drift over 3 to 6 months.

- Operators and planners are users in theory but were not involved in workflow design, dashboard logic, or alert thresholds.

- Success is measured at the platform level rather than by plant-level outcomes such as downtime hours, yield variance, or energy intensity.

These barriers are rarely dramatic on day one, which is why many projects appear healthy until the first scaling phase. By then, the cost of redesign is much higher than the cost of early governance planning.
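The first two barriers in the list lend themselves to a quick automated check. The following is a rough sketch under stated assumptions: the use-case names, refresh intervals, system names, and asset IDs are invented for illustration, and real source systems would need their own extraction step.

```python
from datetime import timedelta

def latency_gaps(use_cases):
    """Return use cases whose actual refresh interval exceeds the required one."""
    return [
        name
        for name, (required, actual) in use_cases.items()
        if actual > required
    ]

def unmapped_assets(systems):
    """Return, per system, asset IDs that do not appear in every system."""
    common = set.intersection(*systems.values())
    return {
        name: sorted(ids - common)
        for name, ids in systems.items()
        if ids - common
    }

# Hypothetical requirements: (required refresh, actual refresh).
use_cases = {
    "bottleneck tracing": (timedelta(minutes=15), timedelta(hours=24)),
    "energy reporting": (timedelta(days=1), timedelta(hours=1)),
}

# Hypothetical asset IDs as they appear in each source system.
systems = {
    "engineering": {"PRESS-01", "PRESS-02", "CNC-07"},
    "mes": {"PRESS-01", "PRESS_02", "CNC-07"},
    "maintenance": {"PRESS-01", "PRESS-02", "CNC-07"},
}

slow = latency_gaps(use_cases)
mismatches = unmapped_assets(systems)
print("Latency gaps:", slow)
print("Naming mismatches:", mismatches)
```

In this example a single underscore in the MES record is enough to break the mapping for one press across all three systems, which is typical of how small naming differences block reliable integration.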

The strongest market drivers behind this shift in success and failure

Several industry forces are changing how digital twin manufacturing must be evaluated. The first is supply chain volatility. Plants now face more frequent schedule changes, supplier variability, and demand swings than many did five years ago. Under these conditions, a twin must help teams assess scenarios fast, often within a planning window of a few hours to a few days, instead of serving as a static engineering reference.

The second driver is the convergence of operational efficiency and sustainability targets. Manufacturers are under pressure to reduce waste, idle energy load, and unplanned downtime at the same time. This raises demand for digital twins that can connect process behavior with energy usage, maintenance cycles, and throughput constraints rather than treating them as separate reporting streams.

The third driver is the maturing software ecosystem. As more vendors offer simulation, IoT, analytics, and visualization tools, the challenge has shifted from access to technology toward interoperability and decision fit. Project leaders are now expected to choose architectures that can survive platform changes, site differences, and phased rollout over 12 to 24 months.

How these drivers change implementation priorities

When volatility is high, the value of digital twin manufacturing rises only if the twin helps teams answer operational questions faster than traditional planning methods. That means project scope should prioritize a small number of high-value scenarios rather than broad model complexity. In many plants, 2 or 3 reliable decision use cases outperform a large model with weak workflow integration.

When sustainability targets matter, project leaders should avoid isolating the twin inside engineering or innovation teams. Energy, reliability, and process teams need shared metrics. For example, if a line balancing change reduces bottlenecks but raises peak energy demand beyond acceptable thresholds, the twin should reveal that tradeoff before deployment.
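The line balancing example above can be expressed as a small scenario comparison. This is a simplified sketch: the `Scenario` fields, the throughput and power figures, and the 480 kW site limit are assumed values, and a real twin would derive them from simulation output rather than constants.

```python
from dataclasses import dataclass

@dataclass
class Scenario:
    name: str
    throughput_per_hour: float
    peak_kw: float

def evaluate(candidate, baseline, peak_kw_limit):
    """Compare a candidate scenario with the baseline on output and peak demand."""
    return {
        "throughput_gain": candidate.throughput_per_hour - baseline.throughput_per_hour,
        "peak_kw_change": candidate.peak_kw - baseline.peak_kw,
        "within_energy_limit": candidate.peak_kw <= peak_kw_limit,
    }

# Hypothetical figures for a single line.
current = Scenario("current balance", throughput_per_hour=120.0, peak_kw=450.0)
rebalanced = Scenario("rebalanced line", throughput_per_hour=132.0, peak_kw=510.0)

result = evaluate(rebalanced, current, peak_kw_limit=480.0)
print(result)
```

Here the rebalanced line gains throughput but breaches the assumed peak demand limit, so the change would be flagged for review by both process and energy owners before deployment.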

When technology stacks are evolving, modularity becomes a strategic requirement. A twin that depends on one-off custom interfaces may work in a single line, but scaling to 5, 10, or 20 production cells becomes costly. This is why more organizations are asking about data standards, API maturity, source system mapping, and ongoing maintenance effort at the procurement stage.

The table below outlines the main drivers shaping current digital twin manufacturing project outcomes and what they mean for implementation planning.

For project leaders, these drivers are useful because they show why technical completion no longer guarantees business adoption. The surrounding industrial context has changed, and the implementation model must change with it.

Who feels the impact most and where decision risk concentrates

Not every stakeholder experiences a stalled digital twin manufacturing project in the same way. Engineering teams may see the initiative as technically successful because the model behaves as designed. Operations teams may see it as incomplete because it does not reduce decision friction on the shop floor. Finance teams may see it as underperforming because promised savings remain indirect or delayed beyond the original investment window.

The highest decision risk usually sits with project managers, plant transformation leads, and operations sponsors. They are responsible for translating technical capability into plant behavior, often under fixed timeline pressure. If the program misses adoption targets after 2 or 3 review cycles, they must justify either additional spending or a narrowed scope, both of which can affect future digital investments.

This is why stakeholder mapping is not an administrative task. It is a delivery control mechanism. Each user group needs a specific value path, usage frequency, and escalation route. A maintenance engineer may need weekly health and anomaly views, while a production planner may need shift-by-shift scenario comparisons. If both are given the same generic interface, engagement drops.

Impact by role in a typical industrial program

Across most manufacturing environments, the impact of post-modeling delays is uneven but predictable. Operational roles feel the pain first because they live with the consequences of incomplete integration. Strategic roles feel it later when expected scale benefits fail to appear across the network.

The table below can help project leaders assess where intervention is most urgent before digital twin manufacturing credibility weakens across the organization.

This role-based view shows why project recovery needs more than technical debugging. It requires targeted intervention by user group, with priorities aligned to how each function measures value and risk.

Signals that indicate a project is drifting

- The twin is reviewed mainly in monthly meetings, but not during shift planning or maintenance coordination.

- Data exceptions are escalating, yet no service-level target exists for correction within 24 to 48 hours.

- Users request exports to spreadsheets because they do not trust dashboard assumptions.

- Expansion to the next line or site is delayed because the first deployment still depends on specialist support.

These are early warning signs. If addressed within the first 90 days after go-live, many programs can still recover momentum before the organization labels the initiative as experimental or nonessential.
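The second signal, data exceptions without a correction target, is straightforward to monitor once a service level is agreed. The sketch below assumes a 48-hour target and a hypothetical exception log with `id`, `opened_at`, and `resolved_at` fields; the actual record structure will depend on the ticketing or historian system in use.

```python
from datetime import datetime, timedelta

SLA = timedelta(hours=48)  # assumed correction target

def overdue_exceptions(exceptions, now, sla=SLA):
    """Return IDs of unresolved data exceptions that have been open longer than the SLA."""
    return [
        e["id"]
        for e in exceptions
        if e["resolved_at"] is None and now - e["opened_at"] > sla
    ]

# Hypothetical exception log.
now = datetime(2024, 3, 8, 9, 0)
log = [
    {"id": "EX-101", "opened_at": datetime(2024, 3, 4, 8, 0), "resolved_at": None},
    {"id": "EX-102", "opened_at": datetime(2024, 3, 7, 14, 0), "resolved_at": None},
    {"id": "EX-103", "opened_at": datetime(2024, 3, 1, 10, 0),
     "resolved_at": datetime(2024, 3, 2, 10, 0)},
]

overdue = overdue_exceptions(log, now)
print("Overdue exceptions:", overdue)
```

Reviewing a list like this in shift or weekly meetings gives the exception backlog a named owner and a visible clock.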

What project leaders should do differently to move from model to measurable value

The most effective response is to redesign the project around operational decisions, not around the model alone. In practice, that means starting with a short list of business questions the twin must answer repeatedly. For example: which machine is likely to constrain output in the next shift, what maintenance action prevents the highest downtime exposure this week, or how will a change in product mix affect throughput and energy use over the next 72 hours?

Once those questions are defined, project leaders can reverse-engineer the delivery model. That includes required data sources, acceptable latency thresholds, owners for exception handling, and review routines by user group. In many cases, this approach reduces scope creep because features that do not improve decision quality can be postponed or removed.

It is also important to separate model completeness from deployment readiness. A twin does not need to represent every asset and variable before it can deliver value. Many successful programs begin with one production area, 3 to 5 KPIs, and 2 high-frequency use cases, then expand once user trust and integration stability are proven.

A practical recovery framework for stalled digital twin manufacturing initiatives

For managers facing a stalled deployment, recovery usually depends on disciplined simplification rather than adding more layers. The aim is to re-establish a direct path between data, model, action, and measured result.

- Define 2 to 3 operational use cases with named owners, decision timing, and expected KPI impact.

- Set data freshness targets by use case, such as 5 minutes, 1 hour, or daily, instead of using a single generic standard.

- Create a governance map covering engineering, IT, operations, and finance, with escalation timing for unresolved issues.

- Track adoption with simple indicators like weekly active users, decision events supported, and number of manual workarounds removed.

- Review scale readiness every 30 to 60 days before expanding to another line, cell, or facility.

This framework reflects the current market reality: industrial digital programs must prove repeatable value quickly, under mixed infrastructure conditions, and without depending on permanent specialist intervention.
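Of the adoption indicators listed in the framework, weekly active users is usually the easiest to compute from an access or usage log. The following sketch assumes the log can be reduced to (date, user) pairs; the user names and dates are invented for illustration.

```python
from collections import defaultdict
from datetime import date

def weekly_active_users(events):
    """Count distinct users per ISO (year, week) from (day, user) pairs."""
    users_by_week = defaultdict(set)
    for day, user in events:
        iso = day.isocalendar()  # (ISO year, ISO week, ISO weekday)
        users_by_week[(iso[0], iso[1])].add(user)
    return {week: len(users) for week, users in sorted(users_by_week.items())}

# Hypothetical usage log for the twin's planning view.
usage = [
    (date(2024, 3, 4), "planner_a"),
    (date(2024, 3, 5), "planner_a"),
    (date(2024, 3, 6), "maint_b"),
    (date(2024, 3, 11), "planner_a"),
]

wau = weekly_active_users(usage)
print(wau)
```

A falling count across consecutive weeks is an early, inexpensive indicator that the twin is slipping out of daily decisions, and it can be reviewed alongside decision events supported and manual workarounds removed.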

What to monitor over the next 12 months

Looking ahead, project leaders should pay close attention to three signals. First, whether vendors and integration partners can support open, maintainable architectures across heterogeneous systems. Second, whether internal teams can assign business ownership beyond the pilot phase. Third, whether digital twin manufacturing is being connected to broader plant priorities such as resilience, sustainability, and multi-site standardization rather than remaining isolated as a digital showcase.

Programs that align with these signals are more likely to move beyond modeling and toward durable operating value. Those that do not may still produce technically impressive outputs, but they will struggle to influence the daily choices that determine throughput, cost, and responsiveness.

For project managers and engineering leaders, the central judgment is no longer whether to adopt digital twin manufacturing. It is how to build a version of it that can survive real plant complexity, earn user trust, and deliver measurable outcomes within a realistic implementation horizon.

Why work with TradeNexus Pro on your next evaluation

TradeNexus Pro supports decision-makers who need more than general commentary on digital twin manufacturing. We focus on the industrial realities that affect project outcomes: integration readiness, supplier evaluation, deployment sequencing, operational fit, and the business logic behind technology adoption across advanced manufacturing and adjacent sectors.

If your team is assessing why a digital twin manufacturing initiative has slowed after modeling, we can help structure the next conversation around practical checkpoints. That may include use-case prioritization, data dependency mapping, deployment timeline expectations, platform comparison criteria, and the operational questions that should be validated before budget is expanded.

Contact us if you want support in reviewing solution scope, integration assumptions, supplier positioning, rollout phases, or ROI framing for plant-level deployment. We can help you clarify decision parameters, compare implementation paths, discuss delivery windows, and identify where customization or additional validation may be required before the next project milestone.
